Imagine you’re scrolling through the photos on your phone and you come across an image that at first you can’t recognize. It looks like maybe something fuzzy on the couch; could it be a pillow or a coat? After a couple of seconds it clicks: of course! That ball of fluff is your friend’s cat, Mocha. While some of your photos could be understood in an instant, why was this cat photo so much more difficult?

MIT Computer Science and Artificial Intelligence Laboratory (CSAIL) researchers were surprised to find that despite the critical importance of understanding visual data in pivotal areas ranging from health care to transportation to household devices, the notion of an image’s recognition difficulty for humans has been almost entirely ignored. One of the major drivers of progress in deep learning-based AI has been datasets, yet we know little about how data drives progress in large-scale deep learning beyond the observation that bigger is better.

In real-world applications that require understanding visual data, humans outperform object recognition models even though models perform well on current datasets, including those explicitly designed to challenge machines with debiased images or distribution shifts. This problem persists, in part, because we have no guidance on the absolute difficulty of an image or dataset. Without controlling for the difficulty of images used for evaluation, it’s hard to objectively assess progress toward human-level performance, to cover the range of human abilities, and to increase the challenge posed by a dataset.

To fill this knowledge gap, David Mayo, an MIT PhD student in electrical engineering and computer science and a CSAIL affiliate, delved into the deep world of image datasets, exploring why certain images are more difficult for humans and machines to recognize than others. “Some images inherently take longer to recognize, and it’s essential to understand the brain’s activity during this process and its relation to machine learning models. Perhaps there are complex neural circuits or unique mechanisms missing in our current models, visible only when tested with challenging visual stimuli. This exploration is crucial for comprehending and enhancing machine vision models,” says Mayo, a lead author of a new paper on the work.

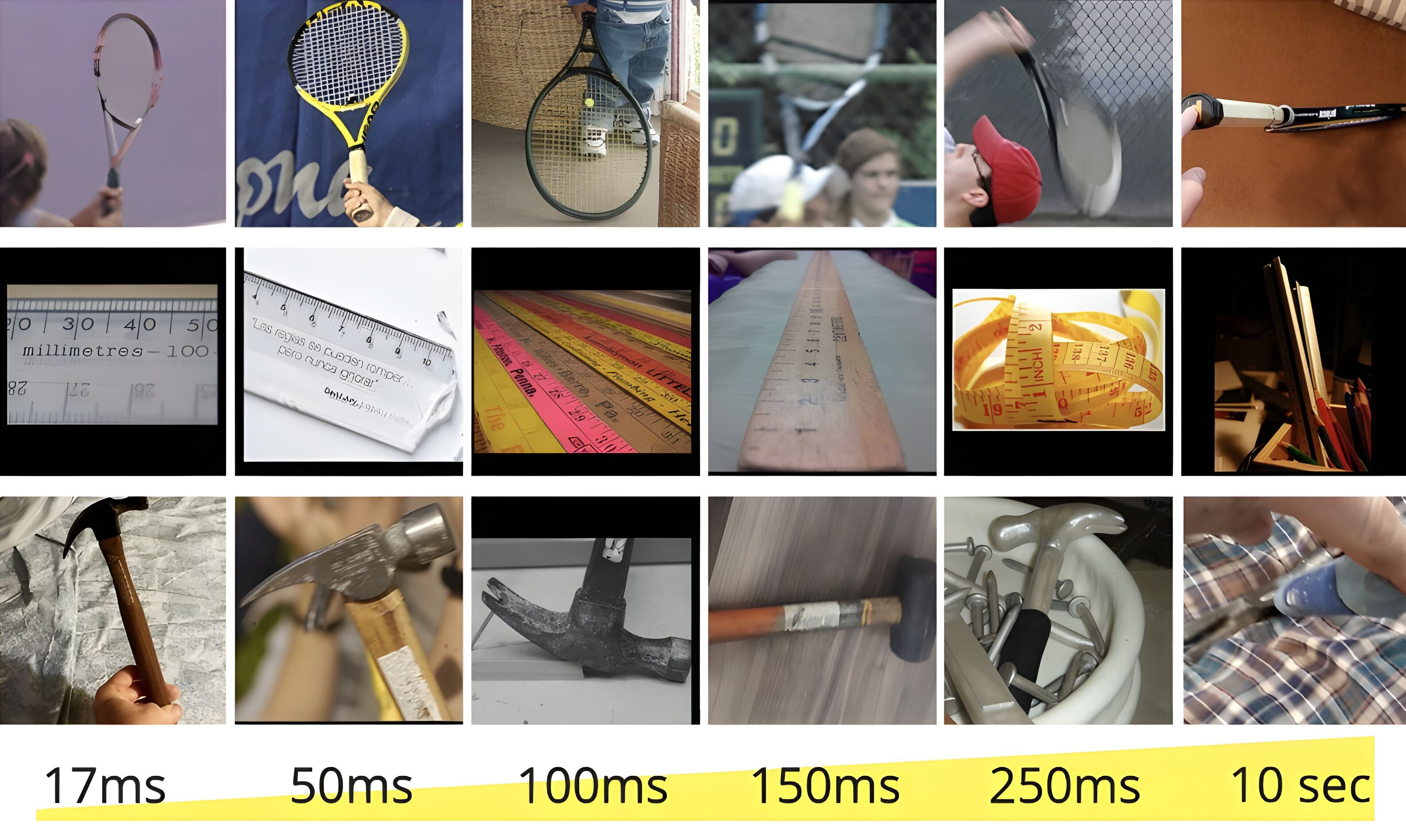

This led to the development of a new metric, the “minimum viewing time” (MVT), which quantifies the difficulty of recognizing an image based on how long a person needs to view it before making a correct identification. Using a subset of ImageNet, a popular dataset in machine learning, and ObjectNet, a dataset designed to test object recognition robustness, the team showed images to participants for varying durations, from as short as 17 milliseconds to as long as 10 seconds, and asked them to choose the correct object from a set of 50 options. After over 200,000 image presentation trials, the team found that existing test sets, including ObjectNet, appeared skewed toward easier, shorter-MVT images, with the vast majority of benchmark performance derived from images that are easy for humans.
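As a rough illustration of the idea, the sketch below computes a viewing-time difficulty score for a single image from a list of trials. It is not the team's released tooling: the function name `minimum_viewing_time`, the trial format, and the "more than half of participants correct" threshold are all assumptions made for this example.

```python
from collections import defaultdict


def minimum_viewing_time(trials, threshold=0.5):
    """Return the shortest duration (ms) at which accuracy exceeds `threshold`.

    `trials` is an iterable of (duration_ms, correct) pairs for ONE image.
    The majority threshold is an assumption for illustration only.
    Returns None if no tested duration clears the threshold.
    """
    outcomes_by_duration = defaultdict(list)
    for duration_ms, correct in trials:
        outcomes_by_duration[duration_ms].append(bool(correct))

    # Walk durations from shortest to longest and stop at the first
    # one where enough participants identified the object correctly.
    for duration_ms in sorted(outcomes_by_duration):
        outcomes = outcomes_by_duration[duration_ms]
        if sum(outcomes) / len(outcomes) > threshold:
            return duration_ms
    return None


# Hypothetical trials for one image: (presentation time in ms, answered correctly?)
example_trials = [
    (17, False), (17, False), (17, True),
    (150, True), (150, False), (150, True),
    (10000, True), (10000, True), (10000, True),
]
print(minimum_viewing_time(example_trials))  # prints 150
```

In this made-up data only one of three participants is correct at 17 milliseconds, while two of three are correct at 150 milliseconds, so the image would be scored at 150 milliseconds.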

The project identified interesting trends in model performance, particularly in relation to scaling. Larger models showed considerable improvement on simpler images but made less progress on more challenging images. The CLIP models, which incorporate both language and vision, stood out as they moved in the direction of more human-like recognition.

“Traditionally, object recognition datasets have been skewed towards less-complex images, a practice that has led to an inflation in model performance metrics, not truly reflective of a model’s robustness or its ability to tackle complex visual tasks. Our research reveals that harder images pose a more acute challenge, causing a distribution shift that is often not accounted for in standard evaluations,” says Mayo. “We released image sets tagged by difficulty along with tools to automatically compute MVT, enabling MVT to be added to existing benchmarks and extended to various applications. These include measuring test set difficulty before deploying real-world systems, discovering neural correlates of image difficulty, and advancing object recognition techniques to close the gap between benchmark and real-world performance.”

“One of my biggest takeaways is that we now have another dimension to evaluate models on. We want models that are able to recognize any image even if (perhaps especially if) it’s hard for a human to recognize. We’re the first to quantify what this would mean. Our results show that not only is this not the case with today’s state of the art, but also that our current evaluation methods don’t have the ability to tell us when it is the case because standard datasets are so skewed toward easy images,” says Jesse Cummings, an MIT graduate student in electrical engineering and computer science and co-first author with Mayo on the paper.

From ObjectNet to MVT

A few years ago, the team behind this project identified a significant challenge in the field of machine learning: Models were struggling with out-of-distribution images, or images that were not well-represented in the training data. Enter ObjectNet, a dataset composed of images collected from real-life settings. The dataset helped illuminate the performance gap between machine learning models and human recognition abilities by eliminating spurious correlations present in other benchmarks, for example, between an object and its background. ObjectNet illuminated the gap between the performance of machine vision models on datasets and in real-world applications, encouraging its use by many researchers and developers, which subsequently improved model performance.

Fast forward to the present, and the team has taken their research a step further with MVT. Unlike traditional methods that focus on absolute performance, this new approach assesses how models perform by contrasting their responses to the easiest and hardest images. The study further explored how image difficulty could be explained and tested for similarity to human visual processing. Using metrics like c-score, prediction depth, and adversarial robustness, the team found that harder images are processed differently by networks. “While there are observable trends, such as easier images being more prototypical, a comprehensive semantic explanation of image difficulty continues to elude the scientific community,” says Mayo.
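One simple way to picture the contrast between the easiest and hardest images is an accuracy gap between two viewing-time bins. The sketch below is only an illustration under stated assumptions: the record format, the function names `accuracy` and `easy_hard_gap`, and the bin cutoffs are hypothetical and are not taken from the paper.

```python
def accuracy(records):
    """Fraction of records the model got right; records are (mvt_ms, model_correct)."""
    return sum(1 for _, model_correct in records if model_correct) / len(records)


def easy_hard_gap(records, easy_max_ms, hard_min_ms):
    """Compare model accuracy on the easiest vs. hardest images.

    An image counts as "easy" if its MVT is at most `easy_max_ms` and as
    "hard" if its MVT is at least `hard_min_ms`. Both cutoffs are
    hypothetical parameters chosen by the caller.
    Returns (easy_accuracy, hard_accuracy, gap).
    """
    easy = [r for r in records if r[0] <= easy_max_ms]
    hard = [r for r in records if r[0] >= hard_min_ms]
    if not easy or not hard:
        raise ValueError("both difficulty bins need at least one image")
    easy_acc, hard_acc = accuracy(easy), accuracy(hard)
    return easy_acc, hard_acc, easy_acc - hard_acc


# Hypothetical per-image results: (human MVT in ms, did the model classify it correctly?)
example_records = [
    (17, True), (17, True), (50, True), (50, False),
    (10000, False), (10000, True), (10000, False), (10000, False),
]
print(easy_hard_gap(example_records, easy_max_ms=50, hard_min_ms=10000))
# prints (0.75, 0.25, 0.5)
```

A model that closed this gap without losing accuracy on easy images would be performing more evenly across the difficulty range, which is the kind of behavior an absolute accuracy figure alone cannot reveal.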

In the realm of health care, for example, the pertinence of understanding visual complexity becomes even more pronounced. The ability of AI models to interpret medical images, such as X-rays, is subject to the diversity and difficulty distribution of the images. The researchers advocate for a meticulous analysis of difficulty distribution tailored for professionals, ensuring AI systems are evaluated based on expert standards rather than layperson interpretations.

Mayo and Cummings are currently looking at the neurological underpinnings of visual recognition as well, probing whether the brain exhibits differential activity when processing easy versus challenging images. The study aims to unravel whether complex images recruit additional brain areas not typically associated with visual processing, hopefully helping demystify how our brains accurately and efficiently decode the visual world.

Toward human-level performance

Looking ahead, the researchers are focused on more than exploring ways to enhance AI’s predictive capabilities regarding image difficulty. The team is also working on identifying correlations with viewing-time difficulty in order to generate harder or easier versions of images.

Despite the study’s significant strides, the researchers acknowledge limitations, particularly in terms of the separation of object recognition from visual search tasks. The current methodology concentrates on recognizing objects, leaving out the complexities introduced by cluttered images.

“This comprehensive approach addresses the long-standing challenge of objectively assessing progress towards human-level performance in object recognition and opens new avenues for understanding and advancing the field,” says Mayo. “With the potential to adapt the Minimum Viewing Time difficulty metric for a variety of visual tasks, this work paves the way for more robust, human-like performance in object recognition, ensuring that models are truly put to the test and are ready for the complexities of real-world visual understanding.”

“This is a fascinating study of how human perception can be used to identify weaknesses in the ways AI vision models are typically benchmarked, which overestimate AI performance by concentrating on easy images,” says Alan L. Yuille, Bloomberg Distinguished Professor of Cognitive Science and Computer Science at Johns Hopkins University, who was not involved in the paper. “This may help develop more realistic benchmarks leading not only to improvements to AI but also make fairer comparisons between AI and human perception.”

“It's widely claimed that computer vision systems now outperform humans, and on some benchmark datasets, that's true,” says Anthropic technical staff member Simon Kornblith PhD ’17, who was also not involved in this work. “However, a lot of the difficulty in those benchmarks comes from the obscurity of what's in the images; the average person just doesn't know enough to classify different breeds of dogs. This work instead focuses on images that people can only get right if given enough time. These images are generally much harder for computer vision systems, but the best systems are only a bit worse than humans.”

Mayo, Cummings, and Xinyu Lin MEng ’22 wrote the paper alongside CSAIL Research Scientist Andrei Barbu, CSAIL Principal Research Scientist Boris Katz, and MIT-IBM Watson AI Lab Principal Researcher Dan Gutfreund. The researchers are affiliates of the MIT Center for Brains, Minds, and Machines.

The team is presenting their work at the 2023 Conference on Neural Information Processing Systems (NeurIPS).