At Microsoft we want to empower our customers to harness the full potential of new technologies like artificial intelligence, while meeting their privacy needs and expectations. Today we’re sharing how the key aspects of our approach to protecting privacy in AI – including our focus on security, transparency, user control, and continued compliance with data protection requirements – are core components of our new generative AI products like Microsoft Copilot.

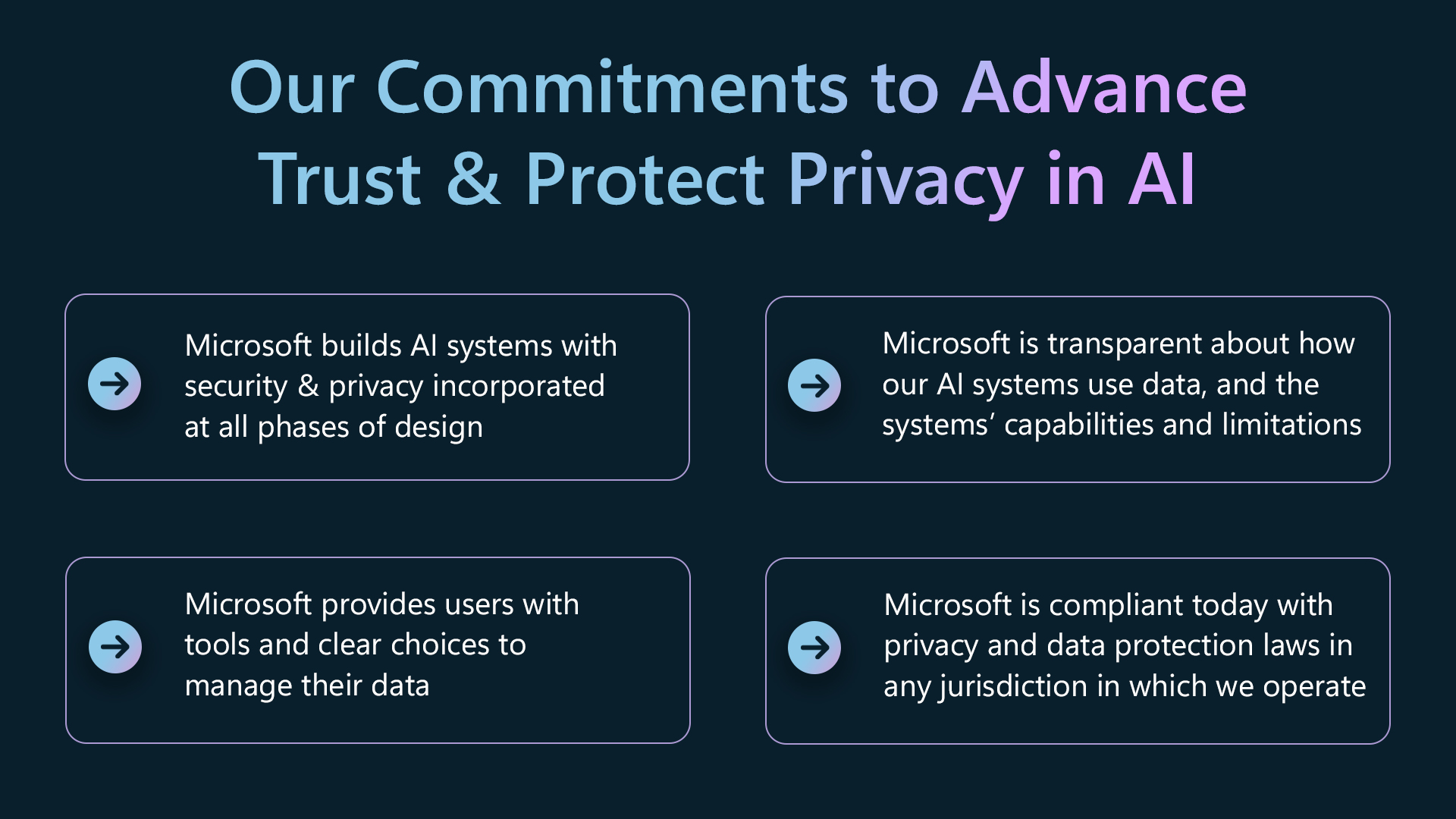

We create our products with security and privacy incorporated through all phases of design and implementation. We provide transparency so that people and organizations can understand the capabilities and limitations of our AI systems, and the sources of information that generate the responses they receive, by providing information in real time as users engage with our AI products. We provide tools and clear choices so people can control their data, including tools to access, manage, and delete personal data and stored conversation history.

Our approach to privacy in AI systems is grounded in our longstanding belief that privacy is a fundamental human right. We’re committed to continued compliance with all applicable laws, including privacy and data protection regulations, and we support accelerating the development of appropriate guardrails to build trust in AI systems.

We believe the approach we’ve taken to enhance privacy in our AI technology will help provide clarity to people about how they can control and protect their data in our new generative AI products.

Our approach

Data security is core to privacy

Keeping data secure is an essential privacy principle at Microsoft and is critical to ensuring trust in AI systems. Microsoft implements appropriate technical and organizational measures to ensure data is secure and protected in our AI systems.

Microsoft has integrated Copilot into many different services, including Microsoft 365, Dynamics 365, Viva Sales, and Power Platform. Each product is created and deployed with critical security, compliance, and privacy policies and processes. Our security and privacy teams employ both privacy and security by design throughout the development and deployment of all our products. We employ multiple layers of protective measures to keep data secure in our AI products like Microsoft Copilot, including technical controls like encryption, all of which play a crucial role in the data security of our AI systems. Keeping data safe and secure in AI systems – and ensuring that the systems are architected to respect data access and handling policies – is central to our approach. Security and privacy are principles that are built into our internal Responsible AI Standard, and we’re committed to continuing to focus on privacy and security to keep our AI products safe and trustworthy.

Transparency

Transparency is another key principle for integrating AI into Microsoft products and services in a way that promotes user control and privacy, and builds trust. That’s why we’re committed to building transparency into people’s interactions with our AI systems. This approach to transparency starts with making clear to users when they are interacting with an AI system if there is a risk that they will be confused. We also provide real-time information to help people better understand how AI features work.

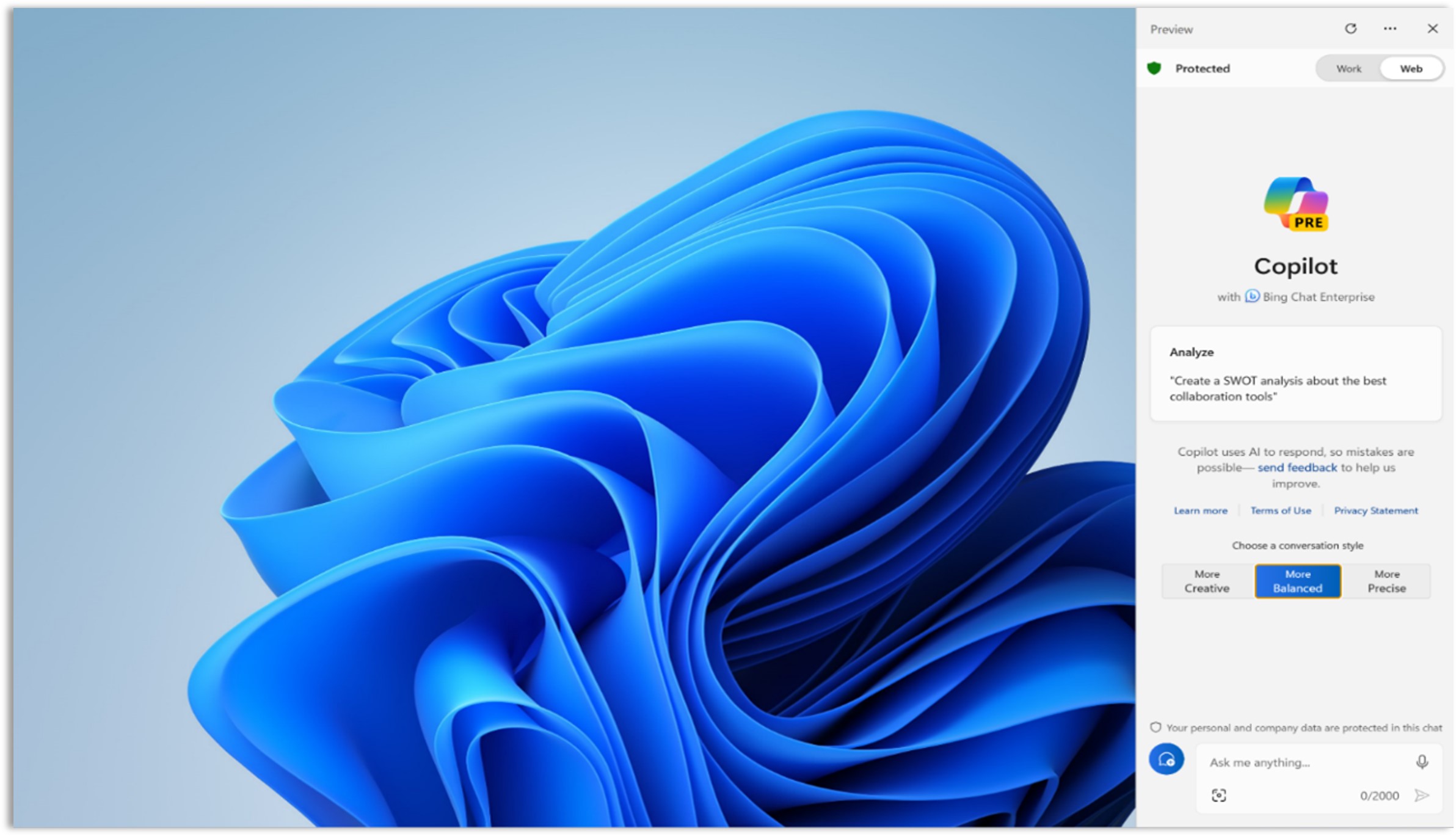

Microsoft Copilot uses a variety of transparency approaches that meet users where they are. Copilot provides clear information about how it collects and uses data, as well as its capabilities and its limitations. Our approach to transparency also helps people understand how they can best leverage the capabilities of Copilot as an everyday AI tool and provides opportunities to learn more and give feedback.

Clear choices and disclosures while users engage with Microsoft Copilot

To help people understand the capabilities of these new AI tools, Copilot provides in-product information that clearly lets users know that they are interacting with AI and provides easy-to-understand choices in a conversational style. As people interact, these disclosures and choices help provide a better understanding of how to harness the benefits of AI and limit potential risks.

Grounding responses in evidence and sources

Copilot also provides information about how its responses are centered, or “grounded”, on relevant content. In our AI offerings in Bing, Copilot.microsoft.com, Microsoft Edge, and Windows, our Copilot responses include information about the content from the web that helped generate the response. In Copilot for Microsoft 365, responses can also include information about the user’s business data included in a generated response, such as emails or documents that the user already has permission to access. Sharing links to input sources and source materials gives people greater control of their AI experience and lets them better evaluate the credibility and relevance of Microsoft Copilot outputs and access more information as needed.

Data protection user controls

Microsoft provides tools that put people in control of their data. We believe all organizations offering AI technology should ensure consumers can meaningfully exercise their data subject rights.

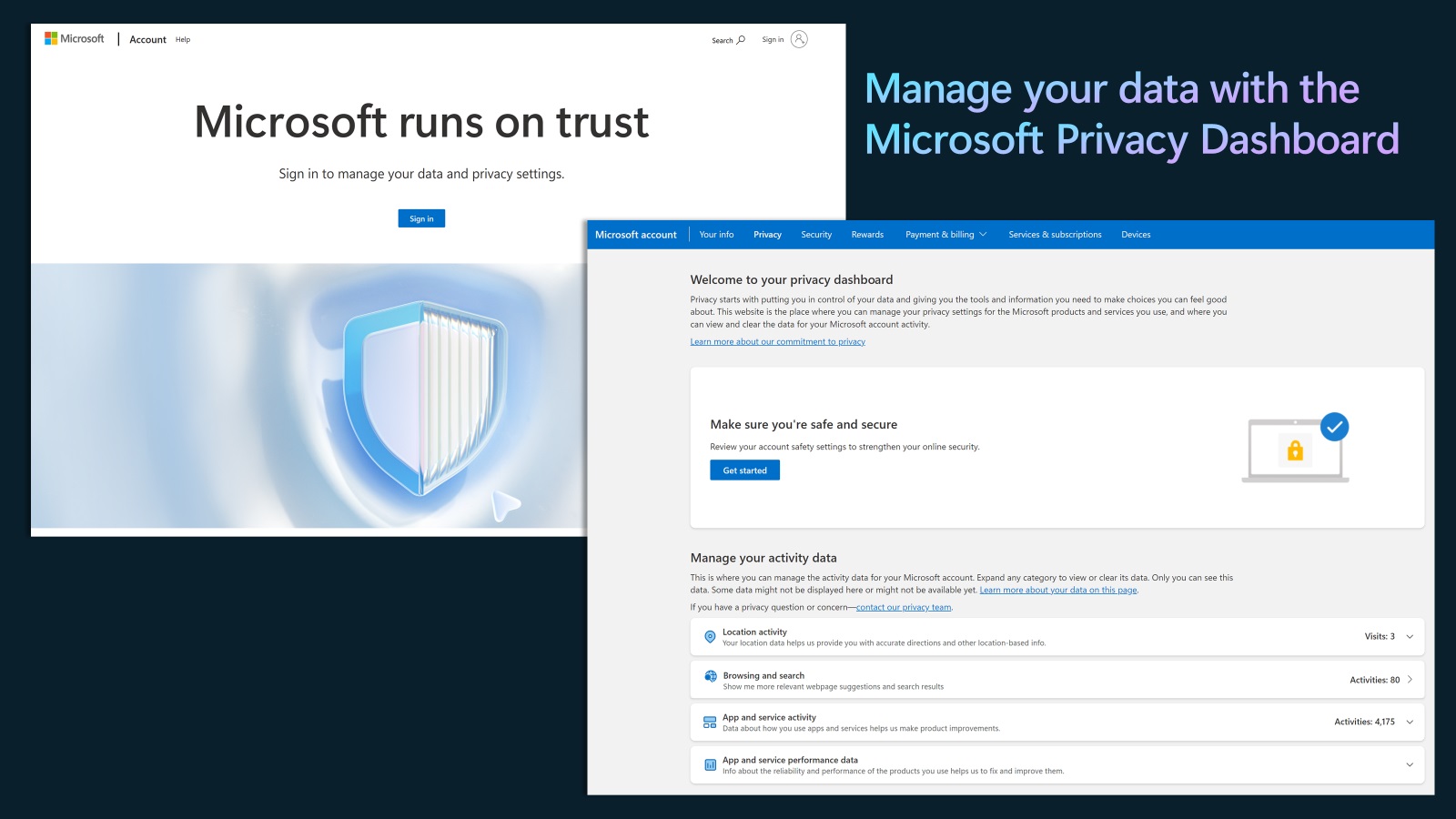

Microsoft provides the ability to control your interactions with Microsoft products and services and honors your privacy choices. Through the Microsoft Privacy Dashboard, our account holders can access, manage, and delete their personal data and stored conversation history. In Microsoft Copilot, we honor additional privacy choices that our users have made in our cookie banners and other controls, including choices about data collection and use.

The Microsoft Privacy Dashboard allows users to access, manage, and delete their data when signed into their Microsoft Account

Additional transparency about our privacy practices

Microsoft provides deeper information about how we protect individuals’ privacy in Microsoft Copilot and our other AI products in our transparency materials, such as M365 Copilot FAQs and The New Bing: Our Approach to Responsible AI, which are publicly available online. These transparency materials describe in greater detail how our AI products are designed, tested, and deployed – and how our AI products address ethical and social issues, such as fairness, privacy, security, and accountability. Our users and the public can also review the Microsoft Privacy Statement, which provides information about our privacy practices and controls for all of Microsoft’s consumer products.

AI systems are new and complex, and we’re still learning how we can best inform our users about our groundbreaking new AI tools in a meaningful way. We continue to listen and incorporate feedback to ensure we provide clear information about how Microsoft Copilot works.

Complying with existing laws, and supporting advancements in global data protection regulation

Microsoft is compliant today with data protection laws in all jurisdictions where we operate. We will continue to work closely with governments around the world to ensure we stay compliant, even as legal requirements grow and change.

Companies that develop AI systems have an important role to play in working with privacy and data protection regulators around the world to help them understand how AI technology is evolving. We engage with regulators to share information about how our AI systems work, how they protect personal data, the lessons we’ve learned as we’ve developed privacy, security, and responsible AI governance systems, and our ideas about how to address unique issues around AI and privacy.

Regulatory approaches to AI are advancing in the European Union through its AI Act, and in the United States through the President’s Executive Order. We expect additional regulators around the globe will seek to address the opportunities and the challenges that new AI technologies will bring to privacy and other fundamental rights. Microsoft’s contribution to this global regulatory discussion includes our Blueprint for Governing AI, where we make suggestions about the variety of approaches and controls governments may want to consider to protect privacy, advance fundamental rights, and ensure AI systems are safe. We will continue to work closely with data protection authorities and privacy regulators around the world as they develop their approaches.

As society moves forward in this era of AI, we will need privacy leaders within government, organizations, civil society, and academia to work together to advance harmonized regulations that ensure AI innovations benefit everyone and are centered on protecting privacy and other fundamental human rights.

At Microsoft, we’re committed to doing our part.