As described in our recent blog post, an SQL AI Assistant has been integrated into Hue with the ability to leverage the power of large language models (LLMs) for a number of SQL tasks. It can help you create, edit, optimize, fix, and succinctly summarize queries using natural language. This is a real game-changer for data analysts at all levels and will make SQL development faster, easier, and less error-prone.

This blog post aims to help you understand what you can do to get started with generative AI assisted SQL using Hue image version 2023.0.16.0 or higher on the public cloud. Both Hive and Impala dialects are supported. Please refer to the product documentation for more information about specific releases.

Getting started with the SQL AI Assistant

Later in this blog we’ll walk you through the steps of how to configure your Cloudera environment to use the SQL AI Assistant with your supported LLM of choice. But first, let’s explore what the SQL AI Assistant does, and how people would use it within the SQL editor.

Using the SQL AI Assistant

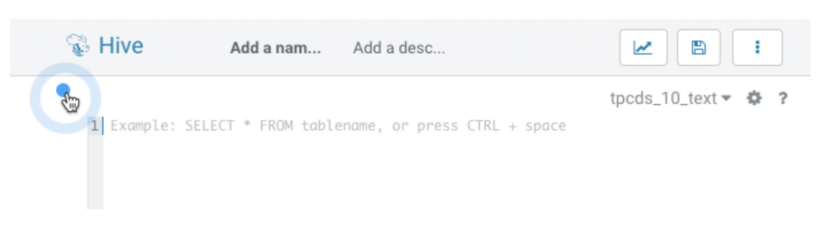

To launch the SQL AI Assistant, start the SQL editor in Hue and click the blue dot, as shown in the following image. This expands the SQL AI toolbar with buttons to generate, edit, explain, optimize and fix SQL statements. The assistant uses the same database as the editor, which in the image below is set to a database named tpcds_10_text.

The toolbar is context aware, and different actions are enabled depending on what you’re doing in the editor. When the editor is empty, the only option available is to generate new SQL from natural language.

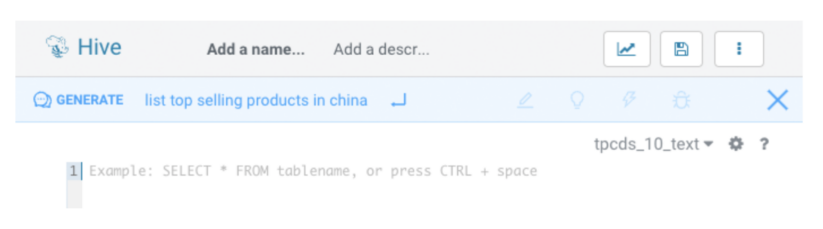

Click “generate” and type your query in natural language. In the edit field, press the down arrow to see a history of query prompts. Press Enter to generate the SQL query.

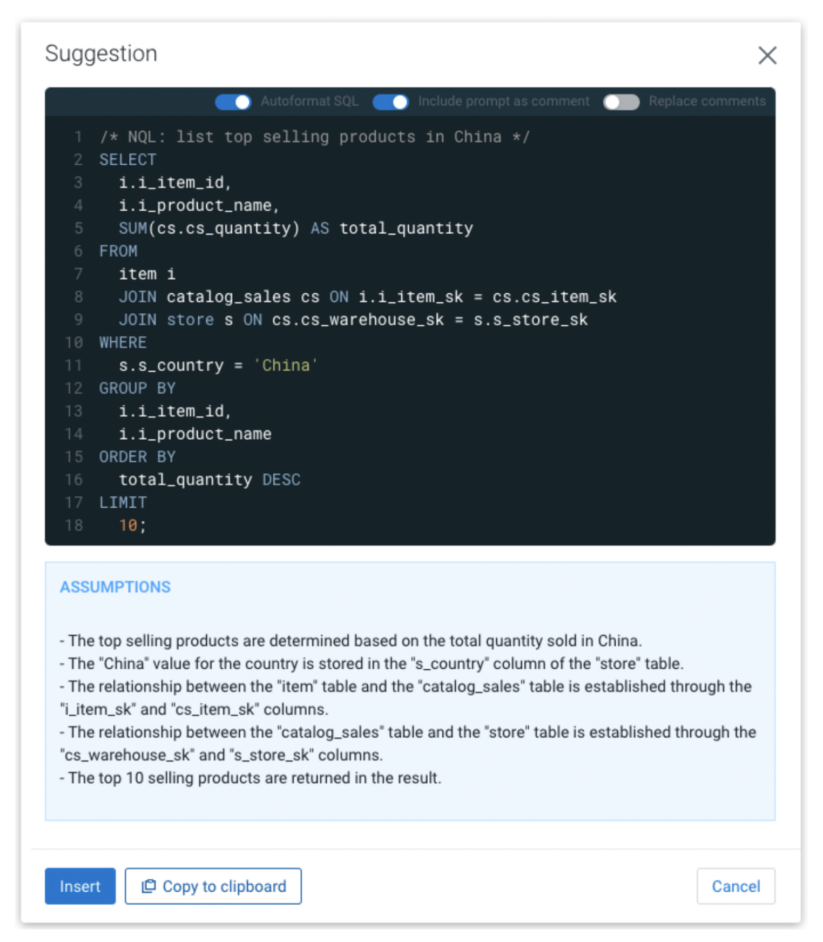

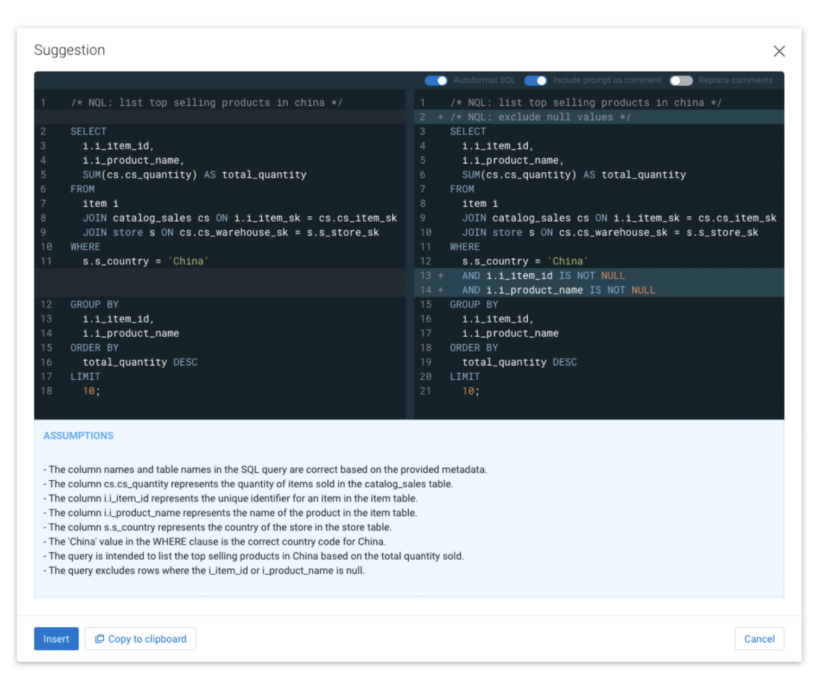

The generated SQL is presented in a modal together with the assumptions made by the LLM. These can include assumptions about the intent of the natural language used, like the definition of “top selling products,” values of needed literals, and how joins can be created. You can then insert the SQL directly into the editor or copy it to the clipboard.

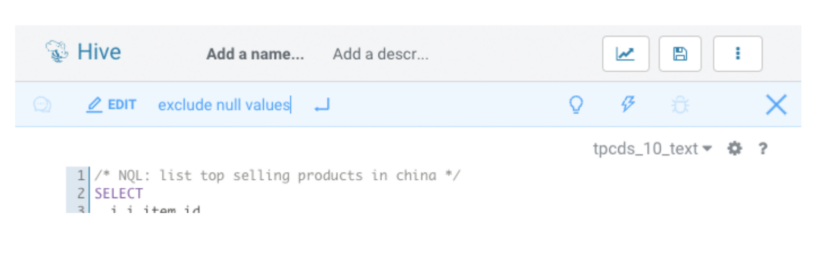

When there is an active SQL statement in the editor, the SQL AI Assistant enables the “edit,” “explain,” and “optimize” buttons. The “fix” button is only enabled when the editor finds an error, such as a SQL syntax error or a misspelled name.
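The enablement rules above can be summarized in a small Python sketch. It is not Hue’s code: the EditorState type and the enabled_actions function are hypothetical, and the sketch assumes that “generate” stays available once a statement exists, which the text does not state explicitly.

```python
from dataclasses import dataclass


@dataclass
class EditorState:
    """Hypothetical snapshot of the editor, used only for this illustration."""
    has_active_statement: bool
    has_error: bool = False


def enabled_actions(state: EditorState) -> list:
    """Return the toolbar buttons that would be enabled for the given editor state."""
    actions = ["generate"]  # assumption: generating new SQL is always possible
    if state.has_active_statement:
        actions += ["edit", "explain", "optimize"]
        if state.has_error:  # e.g. a syntax error or a misspelled name
            actions.append("fix")
    return actions


print(enabled_actions(EditorState(has_active_statement=False)))  # ['generate']
print(enabled_actions(EditorState(has_active_statement=True, has_error=True)))
# ['generate', 'edit', 'explain', 'optimize', 'fix']
```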

Click “edit” to modify the active SQL statement. If the statement is preceded by an NQL comment, that prompt can be reused by pressing Tab. You can also just start typing a new instruction.

After using edit, optimize, or fix, a preview shows the original and the modified versions of the query side by side. If the original query has different formatting or keyword upper/lower case than the generated query, you can enable “Autoformat SQL” at the top of the modal for a better result.

Click “insert” to replace the original query with the modified one in the editor.

The optimize and fix functions don’t need user input. To use them, simply select a SQL statement in the editor and click “optimize” or “fix” to generate an improved version, displayed as a diff against the original query as shown above. “Optimize” tries to improve the structure and performance without changing the result returned by the query. “Fix” tries to automatically correct syntax errors and misspellings.
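Conceptually, the preview is a diff between the original statement and the rewritten one. The sketch below uses Python’s standard difflib module to produce a similar line-by-line comparison; Hue renders its own diff view, so this only illustrates the idea. The TPC-DS-style queries are made-up examples of the kind of rewrite “optimize” might propose, here replacing an implicit comma join with an explicit JOIN that returns the same rows.

```python
import difflib


def preview_diff(original: str, modified: str) -> str:
    """Render a unified diff between two SQL statements, compared line by line."""
    diff = difflib.unified_diff(
        original.splitlines(),
        modified.splitlines(),
        fromfile="original",
        tofile="modified",
        lineterm="",  # splitlines() already removed the newlines
    )
    return "\n".join(diff)


original = """SELECT i_item_id, SUM(ss_quantity)
FROM store_sales, item
WHERE ss_item_sk = i_item_sk
GROUP BY i_item_id"""

modified = """SELECT i.i_item_id, SUM(ss.ss_quantity) AS total_quantity
FROM store_sales ss
JOIN item i ON ss.ss_item_sk = i.i_item_sk
GROUP BY i.i_item_id"""

print(preview_diff(original, modified))
```

Lines prefixed with `-` come from the original statement and lines prefixed with `+` from the suggested one.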

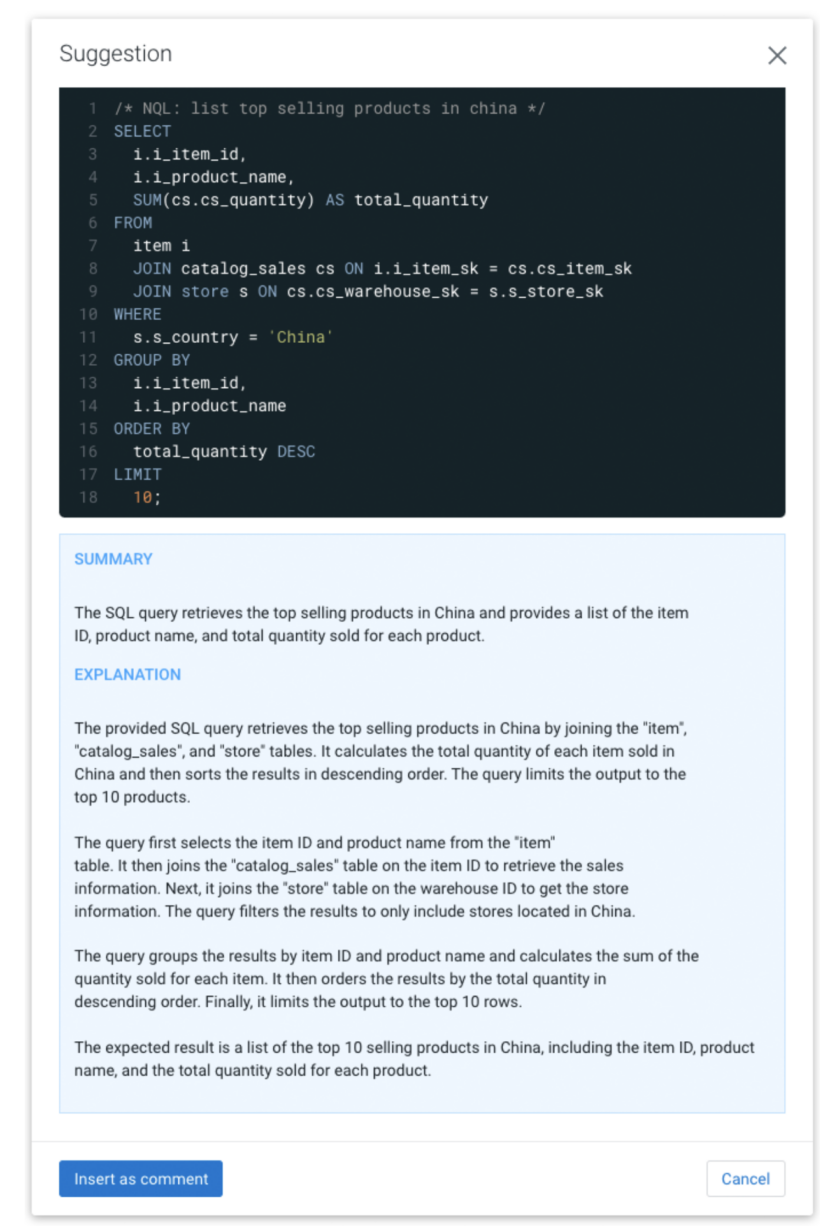

If you need help making sense of complex SQL, simply select the statement and click “explain.” A summary and explanation of the SQL in natural language will appear. You can choose to insert the text as a comment above the SQL statement in the editor, as shown below.
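Inserting an explanation as a comment amounts to prefixing each line with the SQL line-comment marker `--`, which both Hive and Impala accept, and placing the block directly above the statement. A minimal sketch with a hypothetical helper name:

```python
def insert_explanation(sql: str, explanation: str) -> str:
    """Turn each explanation line into a '--' comment and place the block above the SQL."""
    # rstrip() avoids a trailing space on comment lines for blank explanation lines
    comment_lines = [f"-- {line}".rstrip() for line in explanation.splitlines()]
    return "\n".join(comment_lines + [sql])


print(insert_explanation(
    "SELECT i_category, COUNT(*) FROM item GROUP BY i_category",
    "Counts the items in each category.\nReturns one row per category.",
))
```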

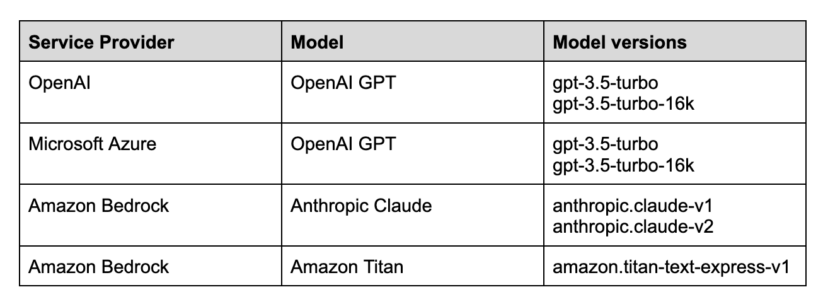

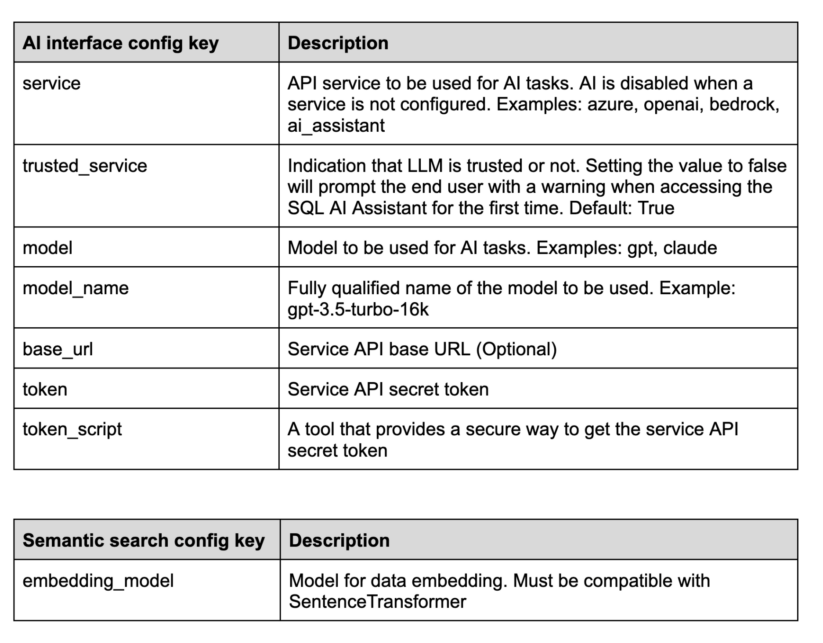

The SQL AI Assistant is not bundled with a specific LLM; instead, it supports various LLMs and hosting services. The model can run locally, be hosted on Cloudera Machine Learning (CML) infrastructure, or run in the infrastructure of a trusted service provider. Cloudera has been testing with GPT running in both Azure and OpenAI, but the following service-model combinations are also supported:

Note: Cloudera recommends using the Hue AI assistant with the Azure OpenAI service.

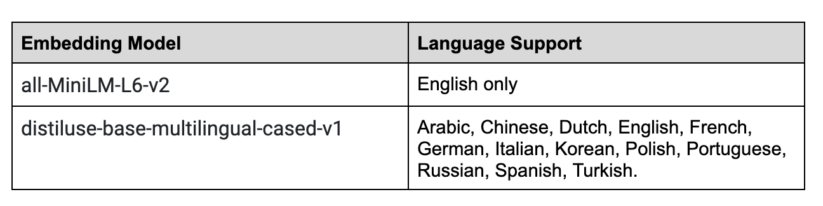

The supported AI models are pre-trained on natural language and SQL, but they have no knowledge of your organization’s data. To overcome this, the SQL AI Assistant uses a Retrieval Augmented Generation (RAG)-based architecture, in which the relevant information is retrieved for each individual SQL task (prompt) and used to augment the request to the LLM. During retrieval it uses the Python SentenceTransformers framework for semantic search, which by default uses the all-MiniLM-L6-v2 model. The SQL AI Assistant can be configured with many other pre-trained models for better multilingual support. Below are the models tested by Cloudera:

It is important to understand that by using the SQL AI Assistant you are sending your own prompts, as well as significant additional information, as input to the LLM. The SQL AI Assistant will only share data that the currently logged-in user is allowed to access, but it is of utmost importance that you use a service you can trust with your data. The RAG-based architecture reduces the number of tables sent per request to a short list of the most likely needed, but there is currently no way to explicitly exclude certain tables; consequently, information about any table that the logged-in user can access in the database could be shared. The list below details exactly what is shared:

- Everything that a user inputs in the SQL AI Assistant

- The selected SQL statement (if any) in the Hue editor

- The SQL dialect in use (for example, Hive or Impala)

- Table details such as table name, column names, column data types, and related keys, partitions and constraints

- Three sample rows from the tables (following the best practices laid out in Rajkumar et al., 2022)
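To make the retrieval and augmentation steps more concrete, here is a minimal, dependency-free sketch: rank tables by similarity to the prompt, then assemble the kinds of information listed above into one request. The assistant itself uses SentenceTransformers with all-MiniLM-L6-v2 by default. Here that embedding is swapped for a toy bag-of-words vector so the example runs anywhere, which means it will not match synonyms or plurals the way a real sentence embedding does. The TableInfo record, the context layout, the function names and the sample rows are all hypothetical and do not reflect Hue’s internal prompt format. With sentence-transformers installed, `embed` and `cosine` could be replaced by `SentenceTransformer("all-MiniLM-L6-v2").encode(...)` and `sentence_transformers.util.cos_sim`.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class TableInfo:
    """Hypothetical table metadata record; field names are illustrative only."""
    name: str
    columns: list                       # (column name, data type) pairs
    partitions: list = field(default_factory=list)
    sample_rows: list = field(default_factory=list)

    def describe(self) -> str:
        cols = ", ".join(f"{col} {typ}" for col, typ in self.columns)
        return f"{self.name} ({cols})"


def embed(text: str) -> Counter:
    """Toy embedding: counts of lower-cased word tokens, splitting on underscores too."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower().replace("_", " ")))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(prompt: str, tables: list, k: int = 2) -> list:
    """Retrieval step: keep the k tables most similar to the prompt."""
    query = embed(prompt)
    return sorted(tables, key=lambda t: cosine(query, embed(t.describe())), reverse=True)[:k]


def build_context(dialect: str, prompt: str, tables: list, statement: str = "") -> str:
    """Augmentation step: dialect, user input, selected SQL, table details, up to 3 sample rows."""
    lines = [f"Dialect: {dialect}", f"User input: {prompt}"]
    if statement:
        lines.append(f"Selected statement: {statement}")
    for t in tables:
        lines.append(f"Table {t.describe()}")
        if t.partitions:
            lines.append("  Partitioned by: " + ", ".join(t.partitions))
        for row in t.sample_rows[:3]:
            lines.append(f"  Sample row: {row}")
    return "\n".join(lines)


# Made-up TPC-DS-style metadata for illustration.
tables = [
    TableInfo("store_sales",
              [("ss_item_sk", "INT"), ("ss_quantity", "INT"), ("ss_sales_price", "DECIMAL(7,2)")],
              partitions=["ss_sold_date_sk"]),
    TableInfo("item",
              [("i_item_sk", "INT"), ("i_item_id", "STRING"), ("i_product_name", "STRING")],
              sample_rows=[(1, "ITEM-1", "widget"), (2, "ITEM-2", "gadget"),
                           (3, "ITEM-3", "gizmo"), (4, "ITEM-4", "doohickey")]),
    TableInfo("customer_address",
              [("ca_address_sk", "INT"), ("ca_city", "STRING"), ("ca_state", "STRING")]),
]

prompt = "which product has the highest sales quantity"
relevant = retrieve(prompt, tables)
print([t.name for t in relevant])  # ['store_sales', 'item']
print(build_context("Impala", prompt, relevant))
```

Only the retrieved tables reach the context, and the fourth sample row of `item` is dropped. This mirrors, in miniature, why the RAG step limits but does not eliminate what is shared with the LLM.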

The administrator must obtain clearance from your organization’s infosec team to confirm that it is safe to use the SQL AI Assistant, because some of the table metadata and data, as described in the previous section, is shared with the LLM.

Getting started with the SQL AI Assistant is a straightforward process. First arrange access to one of the supported services, and then add the service details to Hue’s configuration.

Using the Microsoft Azure OpenAI service

Microsoft Azure provides the option of dedicated deployments of OpenAI GPT models. Azure’s OpenAI service is much more secure than the publicly hosted OpenAI APIs because the data can be processed in your virtual private cloud (VPC). Given the added security, Azure OpenAI is the recommended service for GPT models in the SQL AI Assistant. For more information, see the Azure OpenAI quick start guide.

1. Azure subscription

First, get Azure access. Contact your IT department to get an Azure subscription. Subscriptions can differ depending on your organization and role. For more information, see subscription considerations.

2. Azure OpenAI access

Currently, access to this service is granted only by application. You can apply for access to Azure OpenAI by completing the form at https://aka.ms/oai/access. Once approved, you should receive a welcome email.

3. Create a resource

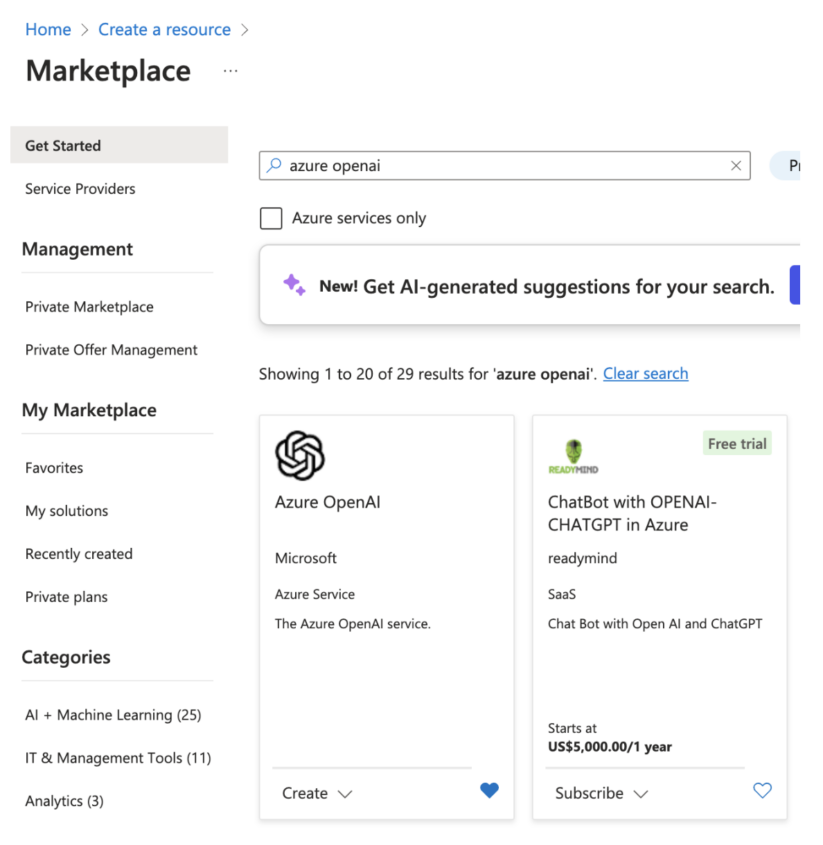

In the Azure portal, create your Azure OpenAI resource: https://portal.azure.com/#home.

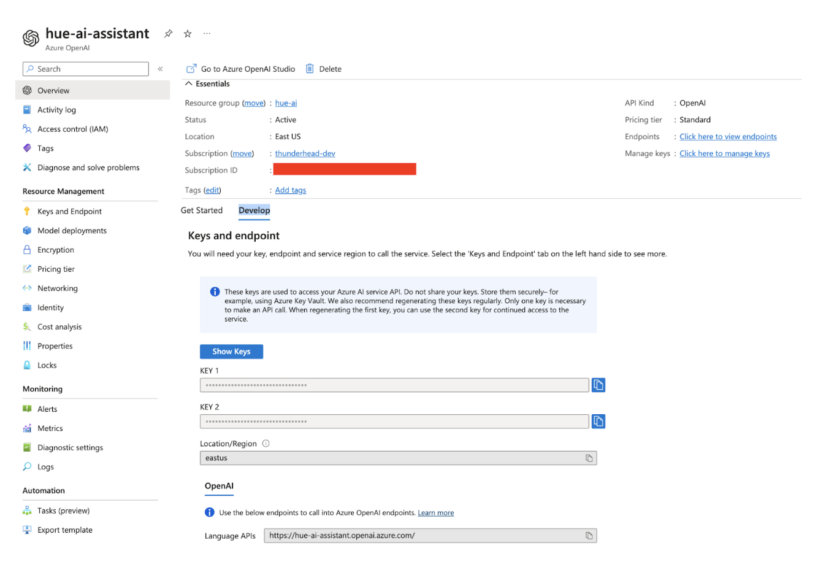

On the resource details page, under “Develop”, you can find your resource URL and keys. You only need one of the two provided keys.

4. Deploy GPT

Go to Azure OpenAI Studio at https://oai.azure.com/portal and create your deployment under Management > Deployments. Select gpt-35-turbo-16k or higher.
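Before wiring the deployment into Hue, it can be worth confirming that the resource URL, key and deployment name work together. The sketch below builds a request against the Azure OpenAI chat completions REST endpoint using only the Python standard library. The resource URL, deployment name and key are hypothetical placeholders, and the api-version value is an assumption; use a version your resource supports. Nothing is sent unless you uncomment the last lines.

```python
import json
import urllib.request

# Assumption: replace with an API version supported by your Azure OpenAI resource.
API_VERSION = "2024-02-01"


def build_azure_chat_request(endpoint: str, deployment: str, api_key: str, prompt: str) -> urllib.request.Request:
    """Build a POST request for a chat completion on a specific Azure OpenAI deployment."""
    url = (
        f"{endpoint.rstrip('/')}/openai/deployments/{deployment}"
        f"/chat/completions?api-version={API_VERSION}"
    )
    body = json.dumps({
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 20,
    }).encode("utf-8")
    # Azure OpenAI key authentication uses the "api-key" header.
    headers = {"Content-Type": "application/json", "api-key": api_key}
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


# Hypothetical values: use the resource URL and key from the portal and your own deployment name.
req = build_azure_chat_request(
    endpoint="https://my-resource.openai.azure.com/",
    deployment="my-gpt-35-turbo-16k",
    api_key="<your-key>",
    prompt="Reply with OK.",
)
print(req.full_url)

# Uncomment to send the request (needs network access and real values):
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```

Note that the deployment name, not the model name, goes into the URL path, which is why it has to match what you created in Azure OpenAI Studio.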

5. Configure SQL AI Assistant in Hue

Now that the service is up and running with your model, the last step is to enable and configure the SQL AI Assistant in Hue.

- Log in to the Cloudera Data Warehouse service as DWAdmin.

- Go to the Virtual Warehouses tab, locate the Virtual Warehouse on which you want to enable this feature, and click “edit.”

- Go to “Configurations” > Hue and select “hue-safety-valve” from the configuration files drop-down menu.

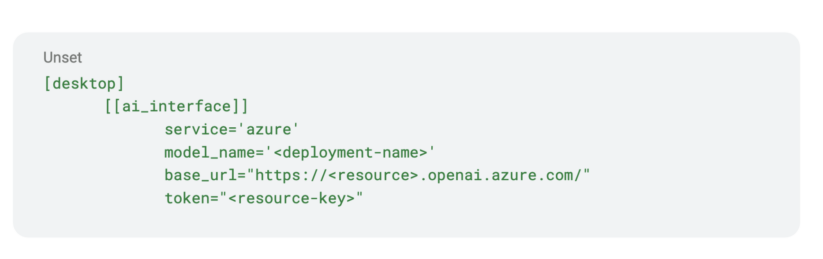

Edit the text under the desktop section by adding a subsection called ai_interface. Populate it as shown below, replacing the angle bracket values with those from your own service:

Using the OpenAI service

1. OpenAI platform sign-up

Request access to the OpenAI platform from your IT department, or go to https://platform.openai.com/ and create an account if your company’s policies allow it.

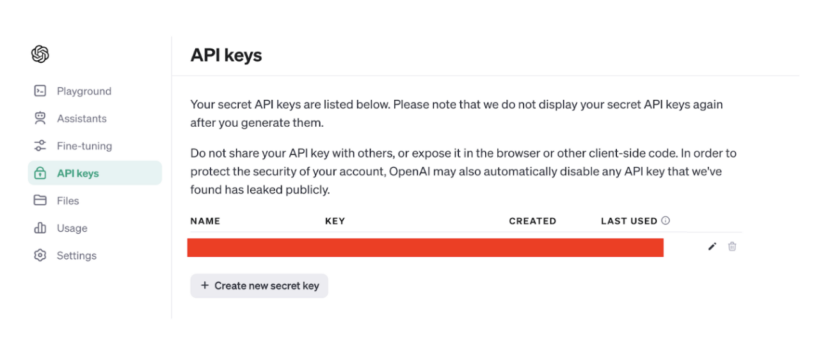

2. Get the API key

In the left menu bar, navigate to API keys. There you can view existing keys or create new ones. The API key is the only thing you need to integrate with the SQL AI Assistant.
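A quick way to check that a new key is accepted before adding it to Hue is to list the available models. This minimal sketch builds the request with the Python standard library; the function names are hypothetical, and an invalid key makes the request fail with HTTP 401.

```python
import json
import urllib.request


def build_models_request(api_key: str) -> urllib.request.Request:
    """Build a GET request for the OpenAI models list, authenticated with a bearer token."""
    return urllib.request.Request(
        "https://api.openai.com/v1/models",
        headers={"Authorization": f"Bearer {api_key}"},
    )


def list_model_ids(api_key: str) -> list:
    """Send the request and return the model IDs (requires network access)."""
    with urllib.request.urlopen(build_models_request(api_key)) as resp:
        return [model["id"] for model in json.load(resp)["data"]]
```

For example, `list_model_ids(os.environ["OPENAI_API_KEY"])` performs the actual check. Reading the key from an environment variable, here assumed to be named OPENAI_API_KEY, avoids hard-coding it in scripts.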

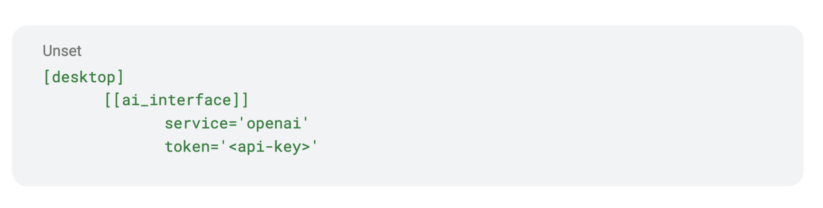

3. Configure SQL AI Assistant in Hue

Finally, enable and configure the SQL AI Assistant in Hue.

- Log in to the Cloudera Data Warehouse service as DWAdmin.

- Go to the Virtual Warehouses tab, locate the Virtual Warehouse on which you want to enable this feature, and click “edit.”

- Go to “Configurations” > Hue and select “hue-safety-valve” from the configuration files drop-down menu.

- Edit the text under the desktop section by adding a subsection called ai_interface. Only two key-value pairs are needed, as shown below. Replace the <api-key> value with the API key from OpenAI.

Using the Amazon Bedrock service

Amazon Bedrock is a fully managed service that makes foundation models from leading AI startups and Amazon available through an API. You must have an AWS account with Bedrock access before following these steps.

1. Get your access key and secret

Get the access key ID and the secret access key for using Bedrock-hosted models in the Hue SQL AI Assistant:

- Go to the IAM console: https://console.aws.amazon.com/iam

- Click “Users” in the left menu

- Find the user who needs access

- Click “Security credentials”

- Go to the “Access keys” section to find or create your keys. The secret access key is only displayed when a key is created, so store it safely at that point.

2. Get Anthropic Claude access

Claude from Anthropic is one of the best models available in Bedrock for SQL-related tasks. More details are available at https://aws.amazon.com/bedrock/claude/. Once you have access, you can try Claude in the text playground under the Amazon Bedrock service.
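Once Claude access is granted, you can optionally confirm from outside Hue that your keys can reach the model. The sketch below uses boto3’s bedrock-runtime client, which picks up the access key ID and secret access key from the standard AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY environment variables. The model ID and region are examples only; check which Claude versions are enabled in your account and region. This is a connectivity check under those assumptions, not how Hue calls Bedrock internally.

```python
import json

# Example values only: check which Claude model IDs are enabled in your account and region.
MODEL_ID = "anthropic.claude-3-sonnet-20240229-v1:0"
REGION = "us-east-1"


def claude_request_body(prompt: str, max_tokens: int = 256) -> str:
    """Build a request body in the Anthropic Messages format used by Claude 3 models on Bedrock."""
    return json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": [{"type": "text", "text": prompt}]}],
    })


def invoke_claude(prompt: str) -> str:
    """Send a prompt to Claude through Bedrock and return the text of the reply.

    Requires boto3 and AWS credentials (for example the AWS_ACCESS_KEY_ID and
    AWS_SECRET_ACCESS_KEY environment variables).
    """
    import boto3  # imported here so the body builder above works without boto3 installed

    client = boto3.client("bedrock-runtime", region_name=REGION)
    response = client.invoke_model(
        modelId=MODEL_ID,
        body=claude_request_body(prompt),
        contentType="application/json",
        accept="application/json",
    )
    payload = json.loads(response["body"].read())
    return payload["content"][0]["text"]
```

Calling `invoke_claude("Reply with OK.")` performs the actual round trip. An access error at this point usually means the IAM user or the model access request still needs attention.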

3. Configure SQL AI Assistant in Hue

Finally, enable and configure the SQL AI Assistant in Hue.

- Log in to the Cloudera Data Warehouse service as DWAdmin.

- Go to the Virtual Warehouses tab, locate the Virtual Warehouse on which you want to enable this feature, and click “edit.”

- Go to “Configurations” > Hue and select “hue-safety-valve” from the configuration files drop-down menu.

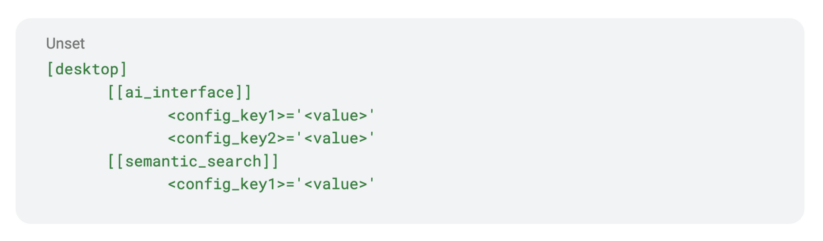

- Edit the text to make sure the following sections, subsections and key-value pairs are set. Replace <access_key> and <secret_key> with the values from your AWS account.

Service- and model-related configurations are under ai_interface, and the semantic search configurations used for RAG are under the semantic_search section.

The configurable LLMs are very good at generating and modifying SQL, and the RAG architecture provides the right context. But there is no guarantee that answers from LLMs, or from human experts, are always correct. Please be aware of the following:

- Non-determinism: LLMs are non-deterministic. The exact same output is not guaranteed for the same input every time, and very similar queries can receive different responses.

- Ambiguity: LLMs may struggle to handle ambiguous queries or contexts. SQL queries often rely on specific and unambiguous language, but LLMs can misinterpret a request or generate ambiguous SQL, leading to incorrect results.

- Hallucination: In the context of LLMs, hallucination refers to a phenomenon where a model generates responses that are incorrect, nonsensical, or fabricated. Occasionally you might see incorrect identifiers or literals, or even nonexistent table and column names, if the provided context is incomplete or the user input simply doesn’t match any data.

- Partial context: The RAG architecture provides context for each request, but it has limitations, and there is no guarantee that the context sent to the LLM will always be complete.
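Because of hallucination and partial context, it is good practice to review generated SQL before running it. One cheap, partial safeguard is to check that a statement at least compiles against the real schema, which catches invented table or column names. The sketch below swaps in Python’s built-in sqlite3 module and an empty in-memory copy of a made-up schema purely so that it runs anywhere. SQLite’s dialect differs from Hive and Impala, so running EXPLAIN in the target engine itself is the closer check, and a statement that compiles can still return the wrong answer.

```python
import sqlite3
from typing import Optional


def check_against_schema(schema_ddl: str, generated_sql: str) -> Optional[str]:
    """Compile generated SQL against an empty copy of the schema.

    Returns None if SQLite can prepare the statement, otherwise the error
    message (for example an unknown table or column).
    """
    conn = sqlite3.connect(":memory:")
    try:
        conn.executescript(schema_ddl)
        conn.execute(f"EXPLAIN {generated_sql}")  # prepares the query plan without reading data
        return None
    except sqlite3.Error as exc:
        return str(exc)
    finally:
        conn.close()


# Made-up schema standing in for the tables the assistant was given as context.
SCHEMA = """
CREATE TABLE item (i_item_sk INTEGER, i_item_id TEXT, i_product_name TEXT);
CREATE TABLE store_sales (ss_item_sk INTEGER, ss_quantity INTEGER);
"""

print(check_against_schema(SCHEMA, "SELECT i_product_name FROM item"))  # None
print(check_against_schema(SCHEMA, "SELECT i_brand FROM item"))  # error mentioning i_brand
```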

The SQL AI Assistant is now available in tech preview on Cloudera Data Warehouse on Public Cloud. We encourage you to try it out and experience the benefits it can provide when working with SQL. Additionally, check out the overview blog on the SQL AI Assistant to learn how it can help data and business analysts in your organization speed up data analytics. Reach out to your Cloudera team for more details.