Lost in the talk about OpenAI is the tremendous amount of compute needed to train and fine-tune LLMs, like GPT, and Generative AI, like ChatGPT. Each iteration requires more compute, and the limitation imposed by Moore's Law quickly moves that task from single compute instances to distributed compute. To accomplish this, OpenAI has employed Ray to power the distributed compute platform that trains each release of the GPT models. Ray has emerged as a popular framework because of its superior performance over Apache Spark for distributed AI compute workloads. In this blog we will cover how Ray can be used in Cloudera Machine Learning's open-by-design architecture to bring fast distributed AI compute to CDP. This is enabled through a Ray module in the cmlextensions Python package published by our team.

Ray's ability to provide simple and efficient distributed computing capabilities, along with its native support for Python, has made it a favorite among data scientists and engineers alike. Its innovative architecture enables seamless integration with ML and deep learning libraries like TensorFlow and PyTorch. Furthermore, Ray's unique approach to parallelism, which focuses on fine-grained task scheduling, enables it to handle a wider range of workloads compared to Spark. This enhanced flexibility and ease of use have positioned Ray as the go-to choice for organizations looking to harness the power of distributed computing.

Built on Kubernetes, Cloudera Machine Learning (CML) gives data science teams a platform that works across each stage of the machine learning lifecycle, supporting exploratory data analysis, model development, and moving those models and applications to production (aka MLOps). CML is built to be open by design, which is why it includes a Worker API that can quickly spin up multiple compute pods on demand. Cloudera customers can combine CML's ability to spin up large compute clusters with Ray to get an easy-to-use, Python-native, distributed compute platform. While Ray brings some of its own libraries for reinforcement learning, hyperparameter tuning, and model training and serving, users can also bring their favorite packages like XGBoost, PyTorch, LightGBM, Dask, and Pandas (using Modin). This fits right in with CML's open-by-design approach, allowing data scientists to take advantage of the latest innovations coming from the open-source community.

To make it easier for CML users to leverage Ray, Cloudera has published a Python package called CMLextensions. CMLextensions has a Ray module that manages provisioning compute workers in CML and then returns a Ray cluster to the user.

To get started with Ray on CML, first you need to install the CMLextensions library.

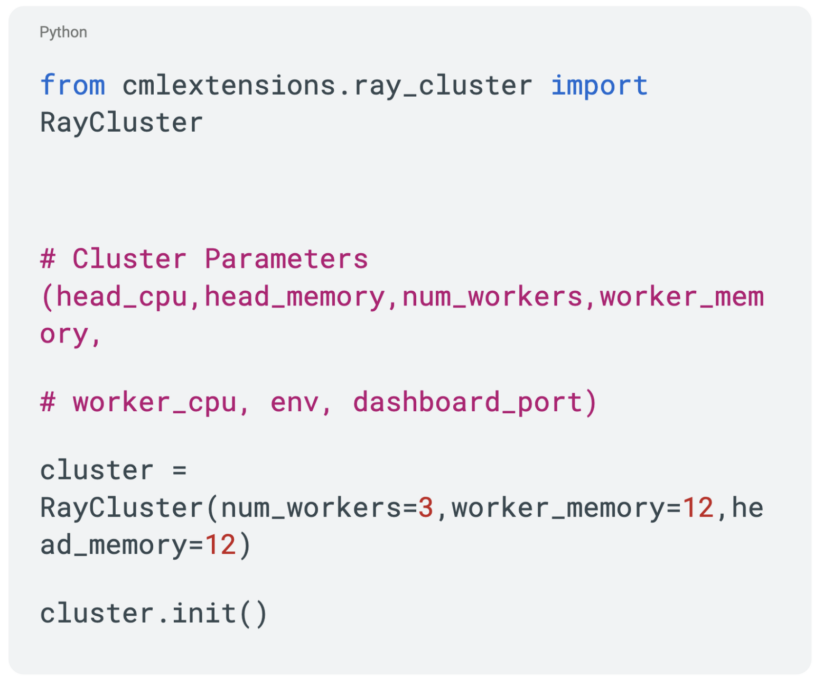

With that in place, we can now spin up a Ray cluster.

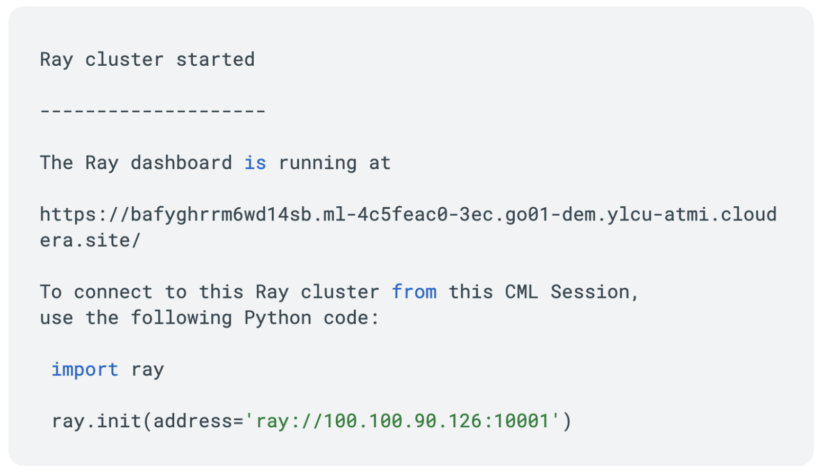

This returns a provisioned Ray cluster.

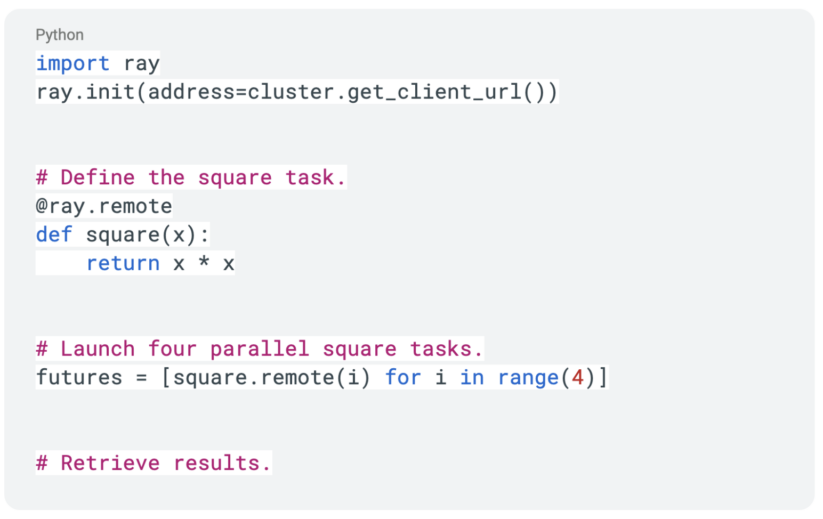

Now we have a Ray cluster provisioned and we are ready to get to work. We can test out our Ray cluster with a few lines of code.

Finally, when we are done with the Ray cluster, we can terminate it to release its workers.

Ray lowers the barriers to building fast, distributed Python applications. Now that we can spin up a Ray cluster in Cloudera Machine Learning, let's look at how we can parallelize and distribute Python code with Ray. To best understand this, we need to look at Ray Tasks and Actors, and how the Ray APIs allow you to implement distributed compute.

First, we will look at the concept of taking an existing function and making it into a Ray Task. Let's look at a simple function to find the square of a number.

To make this into a remote function, all we need to do is use the @ray.remote decorator.

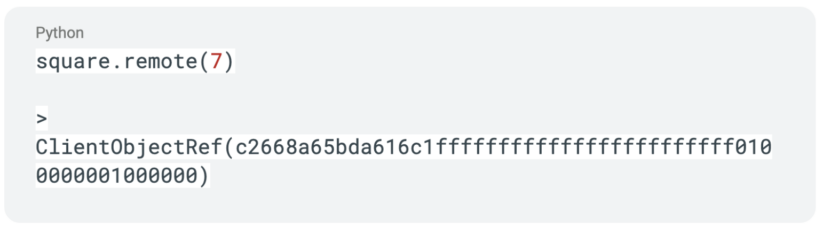

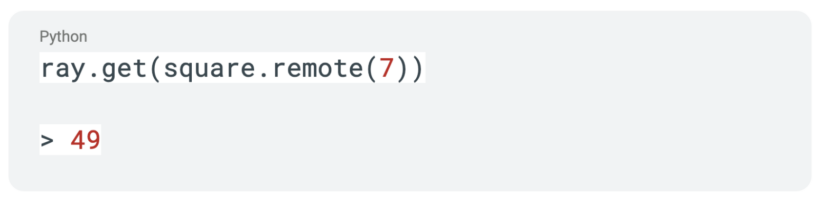

This makes it a remote function, and calling the function immediately returns a future with the object reference.

In order to get the result from our function call, we can use the ray.get API call on the object reference, which blocks execution until the result of the call is returned.

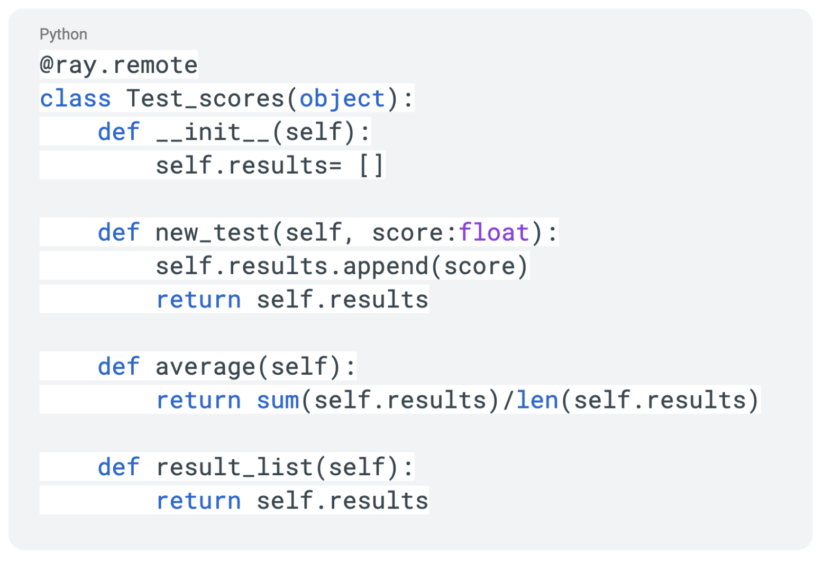

Building off of Ray Tasks, we next have the concept of Ray Actors to explore. Think of an Actor as a remote class that runs on one of our worker nodes. Let's start with a simple class that tracks test scores. We will use that same @ray.remote decorator, which this time turns this class into a Ray Actor.

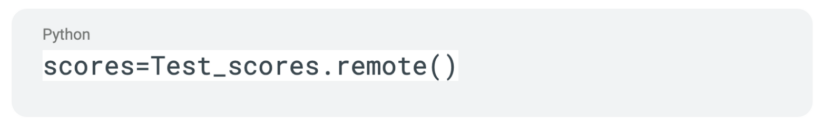

Next, we will create an instance of this Actor.

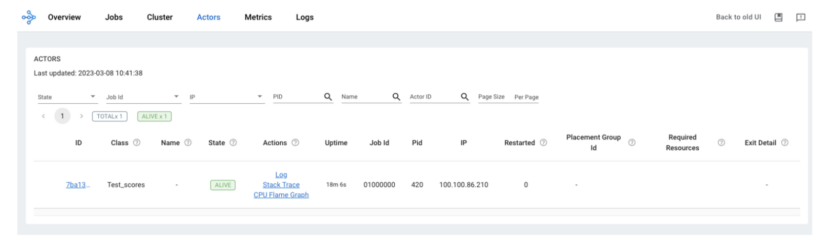

With this Actor deployed, we can now see the instance in the Ray Dashboard.

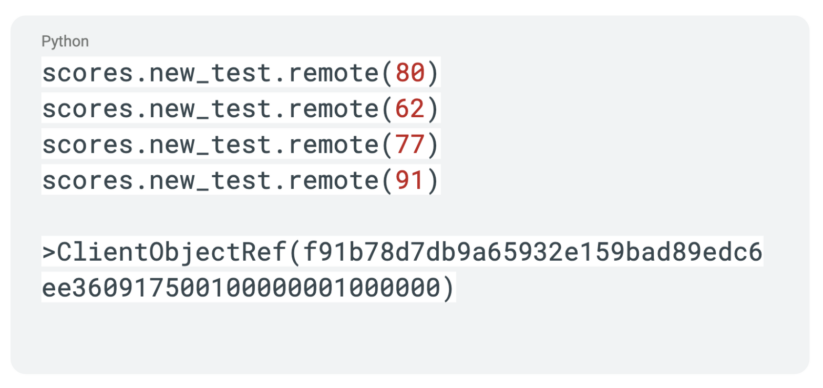

Just like with Ray Tasks, we will use the “.remote” extension to make function calls within our Ray Actor.

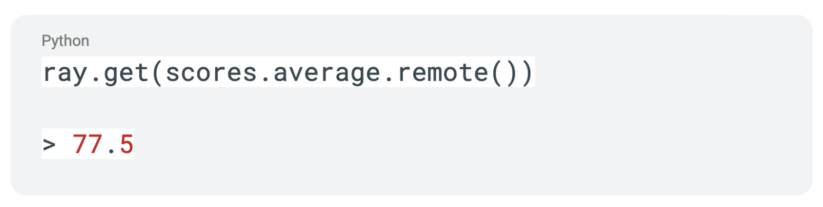

Similar to the Ray Task, calls to a Ray Actor will only result in an object reference being returned. We can use that same ray.get API call to block execution until data is returned.

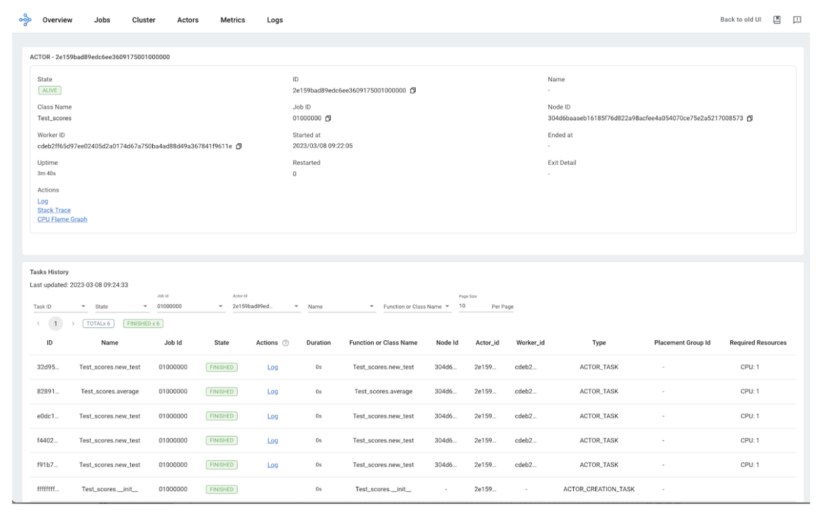

The calls into our Actor also become trackable in the Ray Dashboard. Below you will see our actor, you can trace all of the calls to that actor, and you have access to logs for that worker.

An Actor's lifetime can be detached from the current job, allowing it to persist afterwards. Through the ray.remote decorator, you can specify the resource requirements for Actors.

This is just a quick look at the Task and Actor concepts in Ray. We are just scratching the surface here, but this should give a good foundation as we dive deeper into Ray. In the next installment, we will look at how Ray becomes the foundation to distribute and speed up dataframe workloads.

Enterprises of every size and industry are experimenting with and capitalizing on innovation in LLMs that can power a variety of domain-specific applications. Cloudera customers are well prepared to leverage next-generation distributed compute frameworks like Ray right on top of their data. This is the power of being open by design.

To learn more about Cloudera Machine Learning please visit the website, and to get started with Ray in CML check out CMLextensions in our GitHub.