This is the fourth blog in our series on LLMOps for enterprise leaders. Read the first, second, and third articles to learn more about LLMOps on Azure AI.

In our LLMOps blog series, we've explored various dimensions of Large Language Models (LLMs) and their responsible use in AI operations. Taking the discussion further, we now introduce the LLMOps maturity model, a vital compass for business leaders. This model is more than a roadmap from foundational LLM usage to mastery of deployment and operational management; it is a strategic guide that shows why understanding and implementing it is essential for navigating the fast-changing landscape of AI. Take, for instance, Siemens' use of Microsoft Azure AI Studio and prompt flow to streamline LLM workflows that support Teamcenter, its industry-leading product lifecycle management (PLM) solution, and to connect people who find problems with those who can fix them. This real-world application shows how the LLMOps maturity model supports the transition from theoretical AI potential to practical, impactful deployment in a complex industrial setting.

Exploring application maturity and operational maturity in Azure

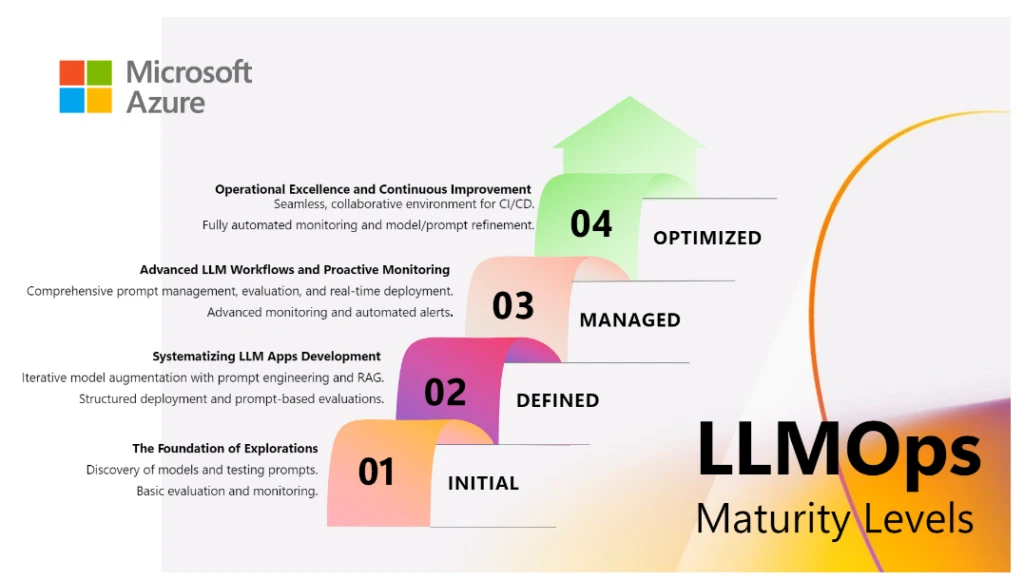

The LLMOps maturity model presents a multifaceted framework that captures two critical aspects of working with LLMs: the sophistication of application development and the maturity of operational processes.

Application maturity: This dimension centers on the advancement of LLM techniques within an application. In the initial stages, the emphasis is on exploring broad LLM capabilities, often progressing toward more intricate techniques such as fine-tuning and Retrieval Augmented Generation (RAG) to meet specific needs.

Operational maturity: Whatever the complexity of the LLM techniques employed, operational maturity is essential for scaling applications. It includes systematic deployment, robust monitoring, and maintenance strategies. The focus is on ensuring that LLM applications are reliable, scalable, and maintainable, however sophisticated they are.

This maturity model is designed to reflect the dynamic, constantly evolving landscape of LLM technology, which requires a balance between flexibility and a methodical approach. That balance is crucial for keeping up with continuous advancements and the exploratory nature of the field. The model outlines several levels, each with its own rationale and strategy for progression, giving organizations a clear roadmap for enhancing their LLM capabilities.

LLMOps maturity model

Level One (Initial): The foundation of exploration

At this foundational level, organizations embark on a journey of discovery and basic understanding. The focus is predominantly on exploring the capabilities of prebuilt LLMs, such as those offered through Microsoft Azure OpenAI Service APIs or Models as a Service (MaaS) inference APIs. This phase typically involves basic coding skills for interacting with these APIs, learning what they can do, and experimenting with simple prompts. Characterized by manual processes and isolated experiments, this level does not yet prioritize comprehensive evaluations, monitoring, or advanced deployment strategies. Instead, the primary objective is to understand the potential and limitations of LLMs through hands-on experimentation, which is crucial for seeing how these models can be applied to real-world scenarios.

At companies like Contoso¹, developers are encouraged to experiment with a variety of models, including GPT-4 from Azure OpenAI Service and Llama 2 from Meta AI. Accessing these models through the Azure AI model catalog allows them to determine which models are most effective for their specific datasets. This stage lays the groundwork for more advanced applications and operational strategies later in the LLMOps journey.
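Level One experimentation can stay very simple: send the same prompts to several candidate models and read the outputs side by side. The sketch below is a minimal, hypothetical harness. It assumes each model is wrapped as a plain Python function from prompt to completion; the two stub functions stand in for real endpoints and are not Azure OpenAI or Llama 2 clients.

```python
from typing import Callable, Dict, List

# Assumption: every model is wrapped as a function from prompt to completion.
ModelFn = Callable[[str], str]


def stub_model_a(prompt: str) -> str:
    # Hypothetical placeholder for a hosted model; echoes the prompt in upper case.
    return prompt.upper()


def stub_model_b(prompt: str) -> str:
    # Hypothetical placeholder for a second model; returns only the first word.
    words = prompt.split()
    return words[0] if words else ""


def compare_models(models: Dict[str, ModelFn], prompts: List[str]) -> Dict[str, List[str]]:
    """Run every prompt through every model and collect the outputs for manual review."""
    return {name: [model(p) for p in prompts] for name, model in models.items()}


prompts = ["summarize the return policy", "list three product features"]
results = compare_models({"model-a": stub_model_a, "model-b": stub_model_b}, prompts)
for name, outputs in results.items():
    for prompt, output in zip(prompts, outputs):
        print(f"[{name}] {prompt!r} -> {output!r}")
```

Swapping a stub for a real client only requires a function with the same `str -> str` shape, so the comparison loop stays unchanged as new models from the catalog are tried.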

Level Two (Defined): Systematizing LLM app development

As organizations become more proficient with LLMs, they start adopting a systematic approach to their operations. This level introduces structured development practices, focusing on prompt design and the effective use of different types of prompts, such as those found in the meta prompt templates in Azure AI Studio. At this level, developers begin to understand how different prompts affect LLM outputs and why responsible AI matters in generated content.
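Prompt variants become easier to compare once the fixed instructions live in a reusable template. The sketch below assumes a hypothetical meta prompt with three placeholders (`$product`, `$tone`, `$rules`) and uses only Python's standard-library `string.Template`; it is not the format of Azure AI Studio's meta prompt templates. The role/content message list follows the general shape of chat completion requests, and actually sending it to a model is left out.

```python
from string import Template
from typing import Dict, List

# Hypothetical meta prompt (system message); the placeholder names are illustrative.
META_PROMPT = Template(
    "You are a support assistant for $product.\n"
    "Answer in a $tone tone.\n"
    "Rules:\n$rules"
)


def build_messages(product: str, tone: str, rules: List[str], question: str) -> List[Dict[str, str]]:
    """Render the meta prompt and pair it with the user's question."""
    system = META_PROMPT.substitute(
        product=product,
        tone=tone,
        rules="\n".join(f"- {rule}" for rule in rules),
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


messages = build_messages(
    "Contoso Store", "friendly", ["Do not guess prices.", "Cite the policy section."], "Can I return shoes?"
)
print(messages[0]["content"])
```

Keeping the template separate from the question means a new prompt variant is just a different template string, which makes later side-by-side evaluation straightforward.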

An important tool at this level is Azure AI prompt flow. It streamlines the entire development cycle of LLM-powered AI applications, offering a comprehensive solution that simplifies prototyping, experimenting, iterating, and deploying. At this point, developers start focusing on responsibly evaluating and monitoring their LLM flows. Prompt flow offers a comprehensive evaluation experience, allowing developers to assess applications on various metrics, including accuracy and responsible AI metrics such as groundedness. In addition, LLMs are combined with RAG techniques to pull information from organizational data, enabling tailored LLM solutions that keep answers relevant to that data and help optimize costs.
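To make the idea of a groundedness metric concrete, the sketch below scores an answer by the share of its sentences whose words mostly appear in the supplied context. This is a deliberately crude lexical proxy assumed here for illustration; it is not prompt flow's groundedness evaluation, which asks an LLM to judge the answer. The 0.6 overlap threshold is a hypothetical choice.

```python
import re
from typing import Set


def _tokens(text: str) -> Set[str]:
    # Lowercase word tokens; punctuation is ignored.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def lexical_groundedness(answer: str, context: str, threshold: float = 0.6) -> float:
    """Share of answer sentences whose words mostly appear in the context (0.0 to 1.0).

    A lexical heuristic for illustration only, not an LLM-graded metric.
    """
    context_tokens = _tokens(context)
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    if not sentences:
        return 0.0
    supported = 0
    for sentence in sentences:
        words = _tokens(sentence)
        # A sentence counts as supported when enough of its words occur in the context.
        if words and len(words & context_tokens) / len(words) >= threshold:
            supported += 1
    return supported / len(sentences)


context = "Returns are accepted within 30 days with a receipt."
answer = "Returns are accepted within 30 days. Shipping is free on Mondays."
print(lexical_groundedness(answer, context))
```

Here the second sentence has no support in the context, so it pulls the score down; an LLM-based grader catches paraphrases and contradictions that word overlap cannot.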

For instance, AI developers at Contoso now use Azure AI Search to create indexes in vector databases. These indexes are then incorporated into prompts to provide more contextual, grounded, and relevant responses using RAG with prompt flow. This stage marks a shift from basic exploration to more focused experimentation, aimed at understanding how LLMs can solve specific challenges in practice.
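The retrieve-then-prompt pattern behind RAG can be sketched without any service dependencies. In the minimal example below, a bag-of-words vector and cosine similarity stand in for a real embedding model and for the vector index Contoso builds in Azure AI Search; the function names and prompt wording are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List, Tuple

Index = List[Tuple[str, Counter]]


def embed(text: str) -> Counter:
    # Toy "embedding": term frequencies of lowercase words.
    # A real system would call an embedding model here instead.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(docs: List[str]) -> Index:
    # In-memory stand-in for a vector index.
    return [(doc, embed(doc)) for doc in docs]


def retrieve(index: Index, query: str, k: int = 2) -> List[str]:
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def grounded_prompt(index: Index, question: str, k: int = 2) -> str:
    # Place retrieved passages ahead of the question and ask the model to stay within them.
    context = "\n".join(f"- {passage}" for passage in retrieve(index, question, k))
    return (
        "Answer using only the context below. If the answer is not in it, say so.\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


docs = ["Returns are accepted within 30 days.", "Our stores open at 9 am.", "Gift cards never expire."]
index = build_index(docs)
print(grounded_prompt(index, "When do stores open?", k=1))
```

In production, `embed` would call an embedding model and `retrieve` would be replaced by the search service's vector query. The part that carries over is the shape of `grounded_prompt`: retrieved passages placed ahead of the question, with an instruction to stay within them.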

Level Three (Managed): Advanced LLM workflows and proactive monitoring

At this level, the focus shifts to sophisticated prompt engineering, where developers create more complex prompts and integrate them effectively into applications. This requires a deeper understanding of how different prompts influence LLM behavior and outputs, leading to more tailored and effective AI solutions.

Developers now take advantage of prompt flow's enhanced features, such as plugins and function calling, to build sophisticated flows involving multiple LLMs. They can also manage different versions of prompts, code, configurations, and environments in code repositories, with the ability to track changes and roll back to earlier versions. Prompt flow's iterative evaluation capabilities become essential for refining LLM flows: developers conduct batch runs and apply evaluation metrics such as relevance, groundedness, and similarity. This lets them build and compare multiple metaprompt variations and identify which ones produce higher-quality outputs that align with their business objectives and responsible AI guidelines.
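A batch run boils down to running each prompt variant over the same labeled dataset and averaging a metric per variant. The sketch below assumes a hypothetical `flow(template, question)` callable and uses token-overlap F1 as a simple, non-LLM similarity metric; `fake_flow` and the one-row dataset are illustrative stand-ins, not prompt flow APIs or real data.

```python
import re
from collections import Counter
from statistics import mean
from typing import Callable, Dict, List

Flow = Callable[[str, str], str]  # (prompt template, question) -> answer


def token_f1(prediction: str, reference: str) -> float:
    """Token-overlap F1 between a generated answer and a reference answer."""
    pred = re.findall(r"[a-z0-9]+", prediction.lower())
    ref = re.findall(r"[a-z0-9]+", reference.lower())
    common = sum((Counter(pred) & Counter(ref)).values())
    if common == 0:
        return 0.0
    precision, recall = common / len(pred), common / len(ref)
    return 2 * precision * recall / (precision + recall)


def batch_run(variants: Dict[str, str], dataset: List[Dict[str, str]], flow: Flow) -> Dict[str, float]:
    """Run every prompt variant over the whole dataset; return the mean F1 per variant."""
    return {
        name: mean(token_f1(flow(template, row["question"]), row["ground_truth"]) for row in dataset)
        for name, template in variants.items()
    }


def fake_flow(template: str, question: str) -> str:
    # Hypothetical stand-in for an LLM flow: a "concise" instruction yields a short answer.
    return "30 days" if "concise" in template.lower() else "I think returns take about thirty days"


dataset = [{"question": "How long do I have to return an item?", "ground_truth": "30 days"}]
scores = batch_run({"v1": "Be concise.", "v2": "Be conversational."}, dataset, fake_flow)
best = max(scores, key=scores.get)
print(scores, best)
```

Because every variant sees the same rows and the same metric, the per-variant averages are directly comparable; in practice several metrics would be tracked side by side rather than a single score.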

This level also introduces a more systematic approach to flow deployment. Organizations start implementing automated deployment pipelines that incorporate practices such as continuous integration/continuous deployment (CI/CD). This automation makes deploying LLM applications more efficient and reliable, marking a move toward more mature operational practices.
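One way to connect evaluation to an automated pipeline is a quality gate: a step that reads the metrics from the latest evaluation run and fails the build when any metric is missing or below an agreed threshold. The sketch below assumes the metrics arrive as a name-to-score dictionary (scores on a 1 to 5 scale); the metric names and threshold values are hypothetical placeholders.

```python
from typing import Dict, List

# Hypothetical minimum scores a flow must reach before the pipeline deploys it.
THRESHOLDS: Dict[str, float] = {"groundedness": 4.0, "relevance": 4.0, "similarity": 3.5}


def failed_checks(metrics: Dict[str, float], thresholds: Dict[str, float]) -> List[str]:
    """Return one message per metric that is missing or below its threshold."""
    failures = []
    for name, minimum in thresholds.items():
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: missing")
        elif value < minimum:
            failures.append(f"{name}: {value:.2f} < {minimum:.2f}")
    return failures


def main(metrics: Dict[str, float]) -> int:
    failures = failed_checks(metrics, THRESHOLDS)
    for message in failures:
        print(f"quality gate failed: {message}")
    # A non-zero exit code makes most CI systems stop before the deployment stage.
    return 1 if failures else 0
```

A pipeline step could load the metrics file produced by an evaluation run and call `sys.exit(main(metrics))`; treating a missing metric as a failure keeps a broken evaluation step from silently letting a flow through.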

Monitoring and maintenance also evolve at this level. Developers actively track a range of metrics to ensure robust and responsible operations. These include quality metrics such as groundedness and similarity, operational metrics such as latency, error rate, and token consumption, and content safety measures.
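Operational metrics are usually aggregated over a time window from per-request logs. The sketch below assumes a hypothetical log record with latency, a success flag, and token counts, and reports 95th-percentile latency, error rate, and total token consumption using only the standard library.

```python
from dataclasses import dataclass
from statistics import quantiles
from typing import Dict, List


@dataclass
class RequestLog:
    # Hypothetical per-request record; field names are illustrative.
    latency_ms: float
    ok: bool
    prompt_tokens: int
    completion_tokens: int


def summarize(logs: List[RequestLog]) -> Dict[str, float]:
    """Aggregate latency, error rate, and token consumption over one monitoring window."""
    if not logs:
        return {"requests": 0, "p95_latency_ms": 0.0, "error_rate": 0.0, "total_tokens": 0}
    latencies = [r.latency_ms for r in logs]
    # quantiles(n=100) returns 99 cut points, so index 94 is the 95th percentile.
    # Older Python versions require at least two data points.
    p95 = quantiles(latencies, n=100, method="inclusive")[94] if len(latencies) > 1 else latencies[0]
    return {
        "requests": len(logs),
        "p95_latency_ms": p95,
        "error_rate": sum(not r.ok for r in logs) / len(logs),
        "total_tokens": sum(r.prompt_tokens + r.completion_tokens for r in logs),
    }


window = [RequestLog(100.0 * (i + 1), i not in (3, 7), 500, 100) for i in range(10)]
print(summarize(window))
```

A high percentile is reported instead of an average because a handful of slow LLM calls can hide behind a healthy mean.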

At this stage, Contoso's developers concentrate on creating diverse prompt variations in Azure AI prompt flow and refining them for accuracy and relevance. They use advanced metrics such as Question and Answering (QnA) Groundedness and QnA Relevance during batch runs to continually assess the quality of their LLM flows. Once these flows are assessed, they use the prompt flow SDK and CLI to package and automate deployment, integrating with their CI/CD processes. Contoso also improves its use of Azure AI Search, applying more sophisticated RAG techniques to build more complex and efficient indexes in its vector databases. The result is LLM applications that respond faster, are better informed by context, and cost less to run, reducing operational expenses while improving performance.
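Building more efficient indexes often starts with how documents are split before they are embedded. The source does not say which techniques Contoso uses; as one common example, the sketch below assumes fixed-size word windows with overlap, so that text near a chunk boundary appears in both neighboring chunks. Real pipelines usually count model tokens rather than whitespace-separated words, and the default sizes here are hypothetical.

```python
from typing import List


def chunk_words(text: str, chunk_size: int = 200, overlap: int = 50) -> List[str]:
    """Split text into windows of `chunk_size` words; consecutive windows share `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once a window reaches the end, so no tail chunk duplicates the last one.
        if start + chunk_size >= len(words):
            break
    return chunks


sample = " ".join(str(i) for i in range(10))
print(chunk_words(sample, chunk_size=4, overlap=1))
```

Larger overlaps improve the odds that a retrievable fact sits wholly inside one chunk, at the cost of a bigger index and more embedding calls.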

Level Four (Optimized): Operational excellence and continuous improvement

At the top of the LLMOps maturity model, organizations reach a stage where operational excellence and continuous improvement are paramount. This phase features highly sophisticated deployment processes, backed by relentless monitoring and iterative enhancement. Advanced monitoring solutions offer deep insight into LLM applications, supporting a dynamic strategy for continuously improving both models and processes.

At this advanced stage, Contoso's developers engage in complex prompt engineering and model optimization. Using Azure AI's comprehensive toolkit, they build reliable and highly efficient LLM applications. They fine-tune models such as GPT-4, Llama 2, and Falcon for specific requirements and set up intricate RAG patterns that improve query understanding and retrieval, making LLM outputs more logical and relevant. They continuously run large-scale evaluations with sophisticated metrics covering quality, cost, and latency, ensuring thorough assessment of their LLM applications. Developers can even use an LLM-powered simulator to generate synthetic data, such as conversational datasets, to evaluate and improve accuracy and groundedness. Because these evaluations happen at every stage, they embed a culture of continuous improvement.
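Large-scale evaluations that weigh quality, cost, and latency together often end in a selection rule. The sketch below assumes one such rule, choosing the cheapest candidate that clears a quality floor and a latency ceiling. The candidate fields, per-1,000-token prices, and thresholds are hypothetical and should be replaced with real evaluation outputs and current pricing.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    # One evaluated configuration (model, prompt, and retrieval settings).
    name: str
    quality: float               # e.g. mean groundedness on a 1-5 scale
    p95_latency_ms: float
    prompt_tokens: int           # totals over the evaluation dataset
    completion_tokens: int
    usd_per_1k_prompt: float     # hypothetical prices; use your actual rates
    usd_per_1k_completion: float


def cost_usd(c: Candidate) -> float:
    # Token totals times per-1,000-token prices.
    return (c.prompt_tokens * c.usd_per_1k_prompt + c.completion_tokens * c.usd_per_1k_completion) / 1000


def pick(candidates: List[Candidate], min_quality: float, max_latency_ms: float) -> str:
    """Return the cheapest candidate that meets both the quality floor and the latency ceiling."""
    eligible = [c for c in candidates if c.quality >= min_quality and c.p95_latency_ms <= max_latency_ms]
    if not eligible:
        raise ValueError("no candidate meets the quality and latency requirements")
    return min(eligible, key=cost_usd).name


large = Candidate("large-model", 4.6, 900.0, 100_000, 20_000, 0.03, 0.06)
small = Candidate("small-model", 4.2, 600.0, 100_000, 20_000, 0.0015, 0.002)
print(pick([large, small], min_quality=4.0, max_latency_ms=1000.0))
```

Treating quality and latency as hard constraints and minimizing cost is only one possible policy; a weighted score is an alternative when the trade-offs are softer.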

For monitoring and maintenance, Contoso adopts comprehensive strategies that combine predictive analytics, detailed query and response logging, and tracing. These strategies feed back into improving prompts, RAG implementations, and fine-tuning. The team runs A/B tests for updates and sets up automated alerts to catch potential drift, bias, and quality issues, keeping its LLM applications aligned with current industry standards and ethical norms.
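Two of the mechanisms named above can be sketched in a few lines: a deterministic A/B split, which hashes a user ID so each user consistently sees either the current or the candidate version, and a drift alert that fires when the rolling mean of a quality score falls below a baseline by more than a tolerance. The 10% treatment share, window size, baseline, and tolerance are hypothetical defaults, not recommendations.

```python
import hashlib
from collections import deque
from statistics import mean
from typing import Deque, Optional


def assign_variant(user_id: str, treatment_share: float = 0.1) -> str:
    """Deterministically route a user to 'A' (current) or 'B' (candidate).

    Hashing the user ID keeps each user in the same arm across requests.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") / 2**32  # in [0, 1)
    return "B" if bucket < treatment_share else "A"


class DriftAlert:
    """Alert when the rolling mean of a quality score drops too far below its baseline."""

    def __init__(self, baseline: float, tolerance: float, window: int = 50) -> None:
        self.baseline = baseline
        self.tolerance = tolerance
        self.scores: Deque[float] = deque(maxlen=window)

    def observe(self, score: float) -> Optional[str]:
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return None  # wait for a full window before judging
        current = mean(self.scores)
        if current < self.baseline - self.tolerance:
            return f"quality drift: rolling mean {current:.2f} vs baseline {self.baseline:.2f}"
        return None


alert = DriftAlert(baseline=4.5, tolerance=0.3, window=3)
for score in (4.6, 4.0, 3.9):
    message = alert.observe(score)
print(assign_variant("user-42"), message)
```

The same hashed bucket can also gate a gradual rollout: raising `treatment_share` step by step moves more users onto the candidate without reshuffling those already assigned.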

The deployment process at this stage is streamlined and efficient. Contoso manages the entire lifecycle of its LLM applications, including versioning and auto-approval based on predefined criteria. The team consistently applies advanced CI/CD practices with robust rollback capabilities, ensuring seamless updates to its LLM applications.
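Versioning, auto-approval, and rollback can be modeled as a small registry. In the hypothetical sketch below, a candidate flow version is promoted only if its evaluation metrics meet every predefined criterion, and rollback returns traffic to the previously approved version. A real pipeline would persist this history and drive the actual endpoint switch; the class and method names are illustrative.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class FlowVersion:
    version: str
    metrics: Dict[str, float]


@dataclass
class Deployment:
    """Hypothetical registry tracking which approved flow version serves traffic."""

    criteria: Dict[str, float]  # metric name -> minimum value
    history: List[FlowVersion] = field(default_factory=list)

    @property
    def live(self) -> Optional[FlowVersion]:
        return self.history[-1] if self.history else None

    def auto_approve(self, candidate: FlowVersion) -> bool:
        # Approve only if every predefined criterion is present and met.
        return all(candidate.metrics.get(k, float("-inf")) >= v for k, v in self.criteria.items())

    def promote(self, candidate: FlowVersion) -> bool:
        if not self.auto_approve(candidate):
            return False
        self.history.append(candidate)
        return True

    def rollback(self) -> Optional[FlowVersion]:
        """Retire the live version and fall back to the previous approved one, if any."""
        if len(self.history) < 2:
            return self.live  # nothing earlier to fall back to
        self.history.pop()
        return self.live


deployment = Deployment(criteria={"groundedness": 4.0, "relevance": 4.0})
deployment.promote(FlowVersion("v1", {"groundedness": 4.2, "relevance": 4.1}))
deployment.promote(FlowVersion("v2", {"groundedness": 3.1, "relevance": 4.5}))  # rejected
deployment.promote(FlowVersion("v3", {"groundedness": 4.6, "relevance": 4.3}))
print(deployment.live.version, deployment.rollback().version)
```

Because only approved versions ever enter the history, a rollback can never land on a version that failed the predefined criteria.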

At this point, Contoso stands as a model of LLMOps maturity, demonstrating not only operational excellence but also a steadfast commitment to continuous innovation and improvement in the LLM space.

Identify where you are in the journey

Each level of the LLMOps maturity model represents a strategic step toward production-level LLM applications. The progression from basic understanding to sophisticated integration and optimization reflects the dynamic nature of the field. It acknowledges the need for continuous learning and adaptation, so that organizations can harness the transformative power of LLMs effectively and sustainably.

The LLMOps maturity model offers a structured pathway for navigating the complexities of implementing and scaling LLM applications. By understanding the distinction between application sophistication and operational maturity, organizations can make better-informed decisions about how to progress through the model's levels. Bringing Azure AI Studio, with its prompt flow, model catalog, and Azure AI Search integration, into this framework underscores that success with LLMs depends on both cutting-edge technology and robust operational strategies.

Learn more

Explore Azure AI Studio

Build, evaluate, and deploy your AI solutions, all within one space

¹Contoso is a fictional but representative global organization building generative AI applications.