“A fast-moving technology field where new tools, technologies and platforms are introduced very frequently and where it is very hard to keep up with new trends.” I could be describing either the VR space or Data Engineering, but in fact this post is about the intersection of both.

Virtual Reality – The Next Frontier in Media

I work as a Data Engineer at a leading company in the VR space, with a mission to capture and transmit reality in perfect fidelity. Our content varies from on-demand experiences to live events like NBA games, comedy shows and music concerts. The content is distributed both through our app, available for most of the VR headsets on the market, and via Oculus Venues.

From a content streaming perspective, our use case is not very different from any other streaming platform. We deliver video content through the Internet; users can open our app, browse through different channels and select which content they want to watch. But that is where the similarities end; from the moment users put their headsets on, we get their full attention. In a traditional streaming application, the content can be streaming on the device but there is no way to know if the user is actually paying attention or even looking at the device. In VR, we know exactly when a user is actively consuming content.

Streams of VR Event Data

One integral part of our immersive experience offering is live events. The main difference from traditional video-on-demand content is that these experiences are streamed live only for the duration of the event. For example, we stream live NBA games to most VR headsets on the market. Live events bring a different set of challenges in both the technical aspects (cameras, video compression, encoding) and the data they generate from user behavior.

Every user interaction in our app generates a user event that is sent to our servers: opening the app, scrolling through the content, selecting a specific piece of content to check its description and title, opening content and starting to watch, stopping content, fast-forwarding, exiting the app. Even while the user is watching content, the app generates a “beacon” event every few seconds. This raw data from the devices needs to be enriched with content metadata and geolocation information before it can be processed and analyzed.

VR is an immersive platform, so users cannot just look away when a specific piece of content is not interesting to them; they can either keep watching, switch to different content or, in the worst-case scenario, even remove their headsets. Understanding what content generates the most engaging behavior from the users is critical for content generation and marketing purposes. For example, when a user enters our application, we want to know what drives their attention. Are they interested in a specific type of content, or just browsing the different experiences? Once they decide what they want to watch, do they stay in the content for the entire duration or do they just watch a few seconds? After watching a specific type of content (sports or comedy), do they keep watching the same kind of content? Are users from a specific geographic location more interested in a specific type of content? What about the market penetration of the different VR platforms?

From a data engineering perspective, this is a classic scenario of clickstream data, with a VR headset instead of a mouse. Large amounts of user behavior data are generated by the VR device, serialized in JSON format and routed to our backend systems, where the data is enriched, pre-processed and analyzed in both real time and batch. We want to know what is going on in our platform at this very moment, and we also want to know the trends and statistics for this week, last month or the current year, for example.
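As a rough illustration of what one of these serialized events might look like, here is a minimal Python sketch. The field names (`device_id`, `device_model`, `event_type`, `content_id`, `ip`, `timestamp`) and the sample values are assumptions made for this example, not the actual schema used in our platform.

```python
import json
from datetime import datetime, timezone


def make_event(device_id, device_model, event_type, content_id, ip, ts=None):
    """Build a hypothetical clickstream event and serialize it to JSON."""
    event = {
        "device_id": device_id,        # identifies the device, not the person
        "device_model": device_model,
        "event_type": event_type,      # e.g. "beacon", "scroll", "app_exit"
        "content_id": content_id,
        "ip": ip,                      # resolved to a location later on
        "timestamp": (ts or datetime.now(timezone.utc)).isoformat(),
    }
    return json.dumps(event)


# Example: a beacon event with a fixed timestamp (all values are made up).
raw = make_event(
    "dev-123", "headset-x", "beacon", "content-42", "203.0.113.7",
    ts=datetime(2019, 1, 1, 12, 0, 0, tzinfo=timezone.utc),
)
decoded = json.loads(raw)
```

Keeping the payload as plain JSON, with no enforced schema on the device side, is what lets new event types appear without coordinated changes in the backend.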

The Need for Operational Analytics

The clickstream data scenario has some well-defined patterns with proven options for data ingestion: streaming and messaging systems like Kafka and Pulsar, data routing and transformation with Apache NiFi, data processing with Spark, Flink or Kafka Streams. For the data analysis part, things are quite different.

There are several different options for storing and analyzing data, but our use case has very specific requirements: real-time, low-latency analytics with fast queries on data without a fixed schema, using SQL as the query language. Our traditional data warehouse solution gives us good results for our reporting analytics, but does not scale very well for real-time analytics. We need to get information and make decisions in real time: which content our users find more engaging, what parts of the world they are watching from, how long they stay in a specific piece of content, how they react to advertisements, A/B testing results and more. All this information can help us build an even more engaging platform for VR users.

A better explanation of our use case is given by Dhruba Borthakur in his six propositions of Operational Analytics:

- Complex queries

- Low data latency

- Low query latency

- High query volume

- Live sync with data sources

- Mixed types

Our queries for live dashboards and real-time analytics are very complex, involving joins, subqueries and aggregations. Since we need the information in real time, low data latency and low query latency are critical. We refer to this as operational analytics, and such a system must support all these requirements.

Design for Human Efficiency

An additional challenge that most other small companies probably face is the way data engineering and data analysis teams spend their time and resources. There are a lot of awesome open-source projects in the data management market – especially databases and analytics engines – but as data engineers we want to work with data, not spend our time doing DevOps, installing clusters, setting up ZooKeeper and monitoring tens of VMs and Kubernetes clusters. The right balance between in-house development and managed services helps companies focus on revenue-generating tasks instead of maintaining infrastructure.

For small data engineering teams, there are several considerations when choosing the right platform for operational analytics:

- SQL support is a key factor for rapid development and democratization of the information. We don't have time to spend learning new APIs and building tools to extract data, and by exposing our data through SQL we enable our Data Analysts to build and run queries on live data.

- Most analytics engines require the data to be formatted and structured in a specific schema. Our data is unstructured and sometimes incomplete and messy. Introducing another layer of data cleansing, structuring and ingestion would also add more complexity to our pipelines.

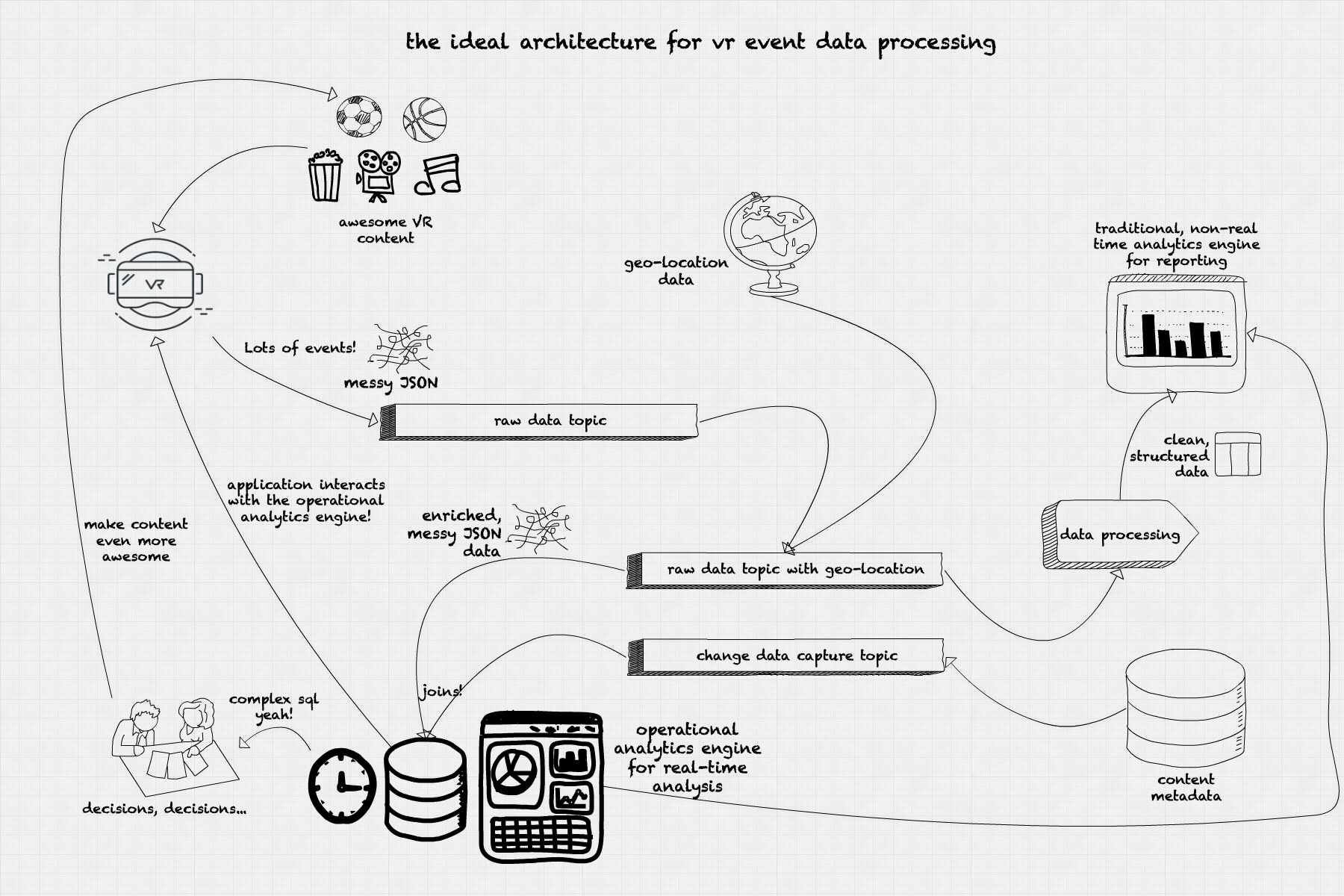

Our Ideal Architecture for Operational Analytics on VR Event Data

Data and Query Latency

How are our users reacting to specific content? Is this advertisement so invasive that users stop watching the content? Are users from a specific geography consuming more content today? What platforms are leading the content consumption now? All these questions can be answered by operational analytics. Good operational analytics would allow us to analyze the current trends in our platform and act accordingly, as in the following scenarios:

Is this content getting less traction in specific geographies? We can add a promotional banner on our app targeted to that specific geography.

Is this advertisement so invasive that it is causing users to stop watching our content? We can limit the appearance rate or change the size of the advertisement on the fly.

Is there a significant number of old devices accessing our platform for a specific piece of content? We can add content with lower definition to give those users a better experience.

These use cases have something in common: the need for a low-latency operational analytics engine. All these questions must be answered within a range from milliseconds to a few seconds.

Concurrency

In addition to this, our use model requires multiple concurrent queries. Different strategic and operational areas need different answers. Marketing departments would be more interested in numbers of users per platform or region; engineering would want to know how a specific encoding affects the video quality for live events. Executives would want to see how many users are in our platform at a specific point in time during a live event, and content partners would be interested in the share of users consuming their content through our platform. All these queries must run concurrently, querying the data in different formats, creating different aggregations and supporting multiple real-time dashboards. Each role-based dashboard will present a different perspective on the same set of data: operational, strategic, marketing.

Real-Time Decision-Making and Live Dashboards

In order to get the data to the operational analytics system quickly, our ideal architecture would spend as little time as possible munging and cleaning data. The data comes from the devices in JSON format, with a few IDs identifying the device brand and model, the content being watched, the event timestamp, the event type (beacon event, scroll, clicks, app exit), and the originating IP. All data is anonymous and only identifies devices, not the people using them. The event stream is ingested into a publish/subscribe system (Kafka, Pulsar) in a specific topic for raw incoming data. The data comes with an IP address but with no location data. We run a quick data enrichment process that attaches geolocation data to each event and publishes it to another topic for enriched data. This fast, enrichment-only stage does not clean any data, since we want the data to be ingested quickly into the operational analytics engine. The enrichment can be performed using specialized tools like Apache NiFi or even stream processing frameworks like Spark, Flink or Kafka Streams. In this stage it is also possible to sessionize the event data using windowing with timeouts, establishing whether a specific user is still in the platform based on the frequency (or absence) of the beacon events.
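The enrichment and sessionization steps described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the `GEO_BY_IP` dictionary stands in for a real IP geolocation lookup, the 30-second timeout and the field names (`device_id`, `ip`, `ts`) are made up, and a real pipeline would read from and write to Kafka or Pulsar topics and would likely rely on the session-window primitive of its stream processing framework instead of the hand-rolled loop shown here.

```python
import json

# Hypothetical in-memory lookup standing in for a real IP geolocation service.
GEO_BY_IP = {"203.0.113.7": {"country": "US", "region": "CA"}}

SESSION_TIMEOUT_S = 30  # assumed value: a long gap without beacons ends the session


def enrich(raw_json):
    """Attach geolocation to a raw event; no cleaning is done at this stage."""
    event = json.loads(raw_json)
    event["geo"] = GEO_BY_IP.get(event.get("ip"))  # None when the IP is unknown
    return event


def sessionize(events, timeout_s=SESSION_TIMEOUT_S):
    """Split each device's events into sessions wherever the gap exceeds timeout_s.

    `events` are dicts with `device_id` and a numeric `ts` (epoch seconds).
    Returns {device_id: [[ts, ...], ...]}, one inner list per session.
    """
    sessions = {}
    for event in sorted(events, key=lambda e: e["ts"]):
        device_sessions = sessions.setdefault(event["device_id"], [])
        if device_sessions and event["ts"] - device_sessions[-1][-1] <= timeout_s:
            device_sessions[-1].append(event["ts"])  # still within the same session
        else:
            device_sessions.append([event["ts"]])    # gap too long: new session
    return sessions


# Made-up beacon events: three close together, then a long pause, then two more.
raw_events = [
    json.dumps({"device_id": "dev-1", "ip": "203.0.113.7",
                "event_type": "beacon", "ts": t})
    for t in (0, 10, 20, 100, 110)
]
enriched = [enrich(r) for r in raw_events]
sessions = sessionize(enriched)
```

With this input the device ends up with two sessions, because the 80-second gap between the third and fourth beacon exceeds the assumed timeout.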

A second ingestion path comes from the content metadata database. The event data must be joined with the content metadata to convert IDs into meaningful information: content type, title, and duration. The decision to join the metadata in the operational analytics engine instead of during the data enrichment process comes from two factors: the need to process the events as fast as possible, and the need to relieve the metadata database of the constant point queries required to fetch the metadata for a specific piece of content. By using change data capture from the original content metadata database and replicating the data in the operational analytics engine we achieve two goals: we maintain a separation between the operational and analytical operations in our system, and we can also use the operational analytics engine as a query endpoint for our APIs.
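To make the join concrete, here is a small sketch that uses an in-memory SQLite database purely as a stand-in for the operational analytics engine. The table names (`events`, `content_metadata`), the columns and the sample rows are all assumptions for illustration; in the architecture described here the metadata table would be kept up to date through change data capture rather than inserted by hand.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the analytics engine
conn.executescript("""
    CREATE TABLE events (
        device_id TEXT, content_id TEXT, event_type TEXT, country TEXT
    );
    CREATE TABLE content_metadata (
        content_id TEXT PRIMARY KEY, title TEXT, content_type TEXT
    );
""")

# Made-up enriched events and replicated metadata rows.
conn.executemany(
    "INSERT INTO events VALUES (?, ?, ?, ?)",
    [
        ("dev-1", "c1", "beacon", "US"),
        ("dev-1", "c1", "beacon", "US"),
        ("dev-2", "c1", "beacon", "ES"),
        ("dev-3", "c2", "beacon", "US"),
    ],
)
conn.executemany(
    "INSERT INTO content_metadata VALUES (?, ?, ?)",
    [("c1", "Game Night", "sports"), ("c2", "Stand-Up Hour", "comedy")],
)

# Distinct devices watching each title, with IDs resolved through the join.
rows = conn.execute("""
    SELECT m.title, m.content_type, COUNT(DISTINCT e.device_id) AS devices
    FROM events AS e
    JOIN content_metadata AS m ON m.content_id = e.content_id
    WHERE e.event_type = 'beacon'
    GROUP BY m.title, m.content_type
    ORDER BY devices DESC, m.title
""").fetchall()
```

Because the join happens at query time, the enrichment stage never has to call the metadata database, which is the offloading effect described above.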

Once the data is loaded in the operational analytics engine, we use visualization tools like Tableau, Superset or Redash to create interactive, real-time dashboards. These dashboards are updated by querying the operational analytics engine using SQL and refreshed every few seconds to help visualize the changes and trends from our live event stream data.

The insights obtained from the real-time analytics help us make decisions on how to make the viewing experience better for our users. We can decide what content to promote at a specific point in time, directed to specific users in specific regions using a specific headset model. We can determine what content is more engaging by examining the average session time for that content. We can include different visualizations in our app, perform A/B testing and get results in real time.
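As an example of the kind of metric mentioned above, the following sketch computes the average session time per piece of content. The input shape, a list of `(content_id, start_ts, end_ts)` tuples, is an assumption for illustration; in practice this would more likely be a SQL aggregation run directly against the analytics engine.

```python
from collections import defaultdict


def average_session_seconds(sessions):
    """Return {content_id: mean session length in seconds}.

    `sessions` is an iterable of (content_id, start_ts, end_ts) tuples,
    with timestamps expressed in epoch seconds.
    """
    totals = defaultdict(lambda: [0.0, 0])  # content_id -> [total seconds, count]
    for content_id, start_ts, end_ts in sessions:
        totals[content_id][0] += end_ts - start_ts
        totals[content_id][1] += 1
    return {cid: total / count for cid, (total, count) in totals.items()}


# Made-up sessions: two for content "c1", one for content "c2".
averages = average_session_seconds([
    ("c1", 0, 120),
    ("c1", 0, 60),
    ("c2", 0, 30),
])
```

Ranking content by this average is one simple way to compare engagement, although it ignores differences in content duration.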

Conclusion

Operational analytics allows businesses to make decisions in real time, based on a current stream of events. This kind of continuous analytics is key to understanding user behavior in platforms like VR content streaming at a global scale, where decisions can be made in real time based on information like user geolocation, headset maker and model, connection speed, and content engagement. An operational analytics engine offering low-latency writes and queries on raw JSON data, with a SQL interface and the ability to interact with our end-user API, presents an infinite number of possibilities for helping make our VR content even more awesome!