This is part two of a multi-part blog series on AI. Part one, Why 2024 is the Year of AI for Networking, discussed Cisco’s AI networking vision and strategy. This blog focuses on evolving data center network infrastructure to support AI/ML workloads, while the next blog will discuss the Cisco compute strategy and innovations for mainstreaming AI.

As discussed in part one of the blog series, artificial intelligence (AI) and machine learning (ML) have recently experienced a steep investment trajectory, catapulted by generative AI. This has opened up new opportunities to deliver actionable insights and real-world problem-solving capabilities.

Generative AI requires a significant amount of processing power and higher networking performance to deliver results rapidly. Hyperscalers have led the AI revolution with mass-scale infrastructure using thousands of graphics processing units (GPUs) to process petabytes of data for AI workloads, such as training models. Many organizations, including enterprise, public sector, service providers, and Tier 2 web-scalers, are exploring or starting to use generative AI with training and inference models.

To process AI/ML workloads or jobs that involve large data sets, it’s necessary to distribute them across multiple GPUs in an AI/ML cluster. This helps balance the load through parallel processing and deliver high-quality results quickly. To achieve this, it’s essential to have a high-performance network that supports a non-blocking, low-latency, lossless fabric. Without such a network, latency or packet drops can cause learning jobs to take much longer to complete, or they may not complete at all. Similarly, when running AI inferencing in edge data centers, it is vital to have a robust network to deliver real-time insights to a large number of end-users.
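One way to make the non-blocking requirement concrete is to compare the bandwidth a leaf switch offers to its GPU servers with the bandwidth it has toward the spine layer. The Python sketch below is illustrative only: the function names and port counts are hypothetical and are not taken from any Cisco design guide. It assumes a simple two-tier leaf/spine fabric and treats a leaf as non-blocking when its uplink capacity is at least equal to its downlink capacity.

```python
def oversubscription_ratio(downlink_ports, downlink_gbps, uplink_ports, uplink_gbps):
    """Return host-facing bandwidth divided by spine-facing bandwidth for one leaf.

    A ratio of 1.0 or less means the leaf can forward all host traffic
    toward the spines at line rate (non-blocking in this simplified model).
    """
    downlink_total = downlink_ports * downlink_gbps
    uplink_total = uplink_ports * uplink_gbps
    if uplink_total <= 0:
        raise ValueError("a leaf needs at least one uplink with positive speed")
    return downlink_total / uplink_total


def is_non_blocking(ratio):
    """Treat a leaf as non-blocking when it is not oversubscribed."""
    return ratio <= 1.0


# Hypothetical leaf A: 32 x 400G ports to GPU servers, 32 x 400G ports to spines.
leaf_a = oversubscription_ratio(32, 400, 32, 400)

# Hypothetical leaf B: 48 x 100G ports to servers, 4 x 400G ports to spines.
leaf_b = oversubscription_ratio(48, 100, 4, 400)

for name, ratio in (("leaf A", leaf_a), ("leaf B", leaf_b)):
    status = "non-blocking" if is_non_blocking(ratio) else "oversubscribed"
    print(f"{name}: ratio {ratio:.2f} ({status})")
```

In this model, leaf B would be a poor fit for GPU-to-GPU traffic because its servers can offer three times more traffic than the uplinks can carry, which is the kind of contention that leads to queuing delay and drops.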

Why Ethernet?

The foundation for most networks today is Ethernet, which has evolved from use in 10Mbps LANs to WANs with 400GbE ports. Ethernet’s adaptability has allowed it to scale and evolve to meet new demands, including those of AI. It has successfully overcome challenges such as scaling past DS1, DS3, and SONET speeds, while maintaining the quality of service for voice and video traffic. This adaptability and resilience have allowed Ethernet to outlast alternatives such as Token Ring, ATM, and frame relay.

To help improve throughput and lower compute and storage traffic latency, the remote direct memory access (RDMA) over Converged Ethernet (RoCE) network protocol is used to support access to memory on a remote host without CPU involvement. Ethernet fabrics with RoCEv2 protocol support are optimized for AI/ML clusters with widely adopted standards-based technology, easier migration for Ethernet-based data centers, and proven scalability at lower cost-per-bit, and they are designed with advanced congestion management to help intelligently control latency and loss.

According to the Dell’Oro Group, AI networks will act as a catalyst to accelerate the transition to higher speeds. Market demand from “Tier 2/3 and large enterprises are forecast to be significant, approaching $10 B over the next five years,” and they are expected to prefer Ethernet.

Why Cisco AI infrastructure?

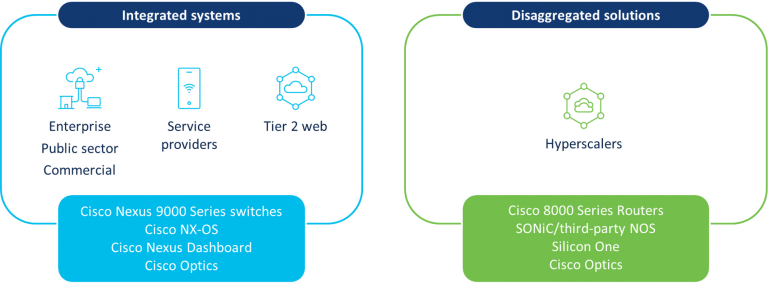

We’ve made significant investments in our data center networking portfolio for AI infrastructure across platforms, software, silicon, and optics. This includes Cisco Nexus 9000 Series switches, Cisco 8000 Series Routers, Cisco Silicon One, network operating systems (NOSs), management, and Cisco Optics (see Figure 1).

Figure 1. Cisco AI/ML data center infrastructure solutions

This portfolio is designed for data center Ethernet networks transporting AI/ML workloads, such as running inference models on Cisco Unified Computing System (UCS) servers. Customers need choices, which is why we’re providing flexibility with different options.

Cisco Nexus 9000 Series switches are integrated solutions that deliver high throughput and provide congestion management to help reduce latency and traffic drops across AI/ML clusters. Cisco Nexus Dashboard helps view and analyze telemetry, and can help quickly configure AI/ML networks with automation, including congestion parameters, ports, and adding leaf/spine switches. This solution provides AI/ML-ready networks for customers to meet the key requirements, with a blueprint for network infrastructure and operations.

Cisco 8000 Series Routers support disaggregation for data center use cases requiring high-capacity open platforms using Ethernet, such as AI/ML clusters in the hyperscaler segment. For these use cases, the NOS on the Cisco 8000 Series Routers can be third-party or Software for Open Networking in the Cloud (SONiC), which is community-supported and designed for customers needing an open-source solution. Cisco 8000 Series Routers also support IOS XR software for other data center routing use cases, including super-spine, data center interconnect, and WAN.

Our solutions portfolio leverages Cisco Silicon One, which is Cisco chip innovation based on a unified architecture that delivers high performance with resource efficiency. Cisco Silicon One is optimized for latency control with AI/ML clusters using Ethernet, telemetry-assisted Ethernet, or fully scheduled fabric. Cisco Optics enable high throughput on Cisco routers and switches, scaling up to 800G per port to help meet the demands of AI infrastructure.

We’re also helping customers with their budgetary and sustainability goals through hardware and software innovation. For example, system scalability and Cisco Silicon One power efficiency help reduce the amount of resources required for AI/ML interconnects. Customers can gain network visibility into actual power utilization and carbon footprint, such as kWh, cost, and CO2 emissions, through Cisco Nexus Dashboard Insights.

With this AI/ML infrastructure solutions portfolio, Cisco is helping customers deliver high-quality experiences for their end-users with fast insights, through sustainable, high-performance AI/ML Ethernet fabrics that are intelligent and operationally efficient.

Is my data center ready to support AI/ML applications?

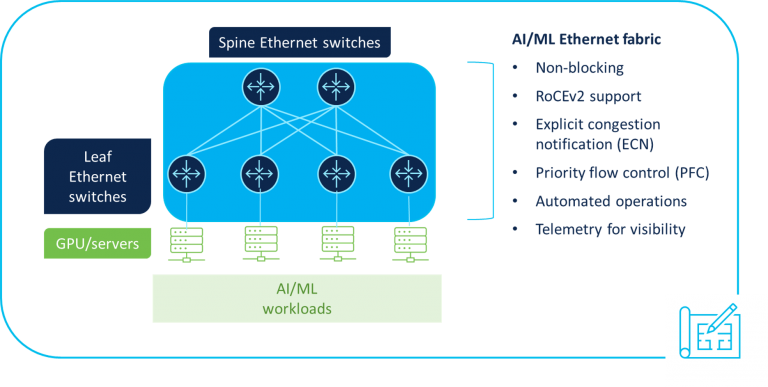

Data center architectures need to be designed properly to support AI/ML workloads. To help customers accomplish this goal, we applied our extensive data center networking experience to create a data center networking blueprint for AI/ML applications (see Figure 2), which discusses how to:

- Build automated, scalable, low-latency Ethernet networks with support for lossless transport, using congestion management mechanisms such as explicit congestion notification (ECN) and priority flow control (PFC) to support RoCEv2 transport for GPU memory-to-memory transfer of information.

- Design a non-blocking network to further improve performance and enable faster completion rates of AI/ML jobs.

- Quickly automate configuration of the AI/ML network fabric, including congestion management parameters for quality-of-service (QoS) control.

- Achieve different levels of visibility into the network through telemetry to help quickly troubleshoot issues and improve transport performance, such as real-time congestion statistics that can help identify ways to tune the network.

- Leverage the Cisco Validated Design for Data Center Network Blueprint for AI/ML, which includes configuration examples as best practices on building AI/ML infrastructure.

Figure 2. Cisco AI data center networking blueprint
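To illustrate how ECN and PFC can work together on a single switch queue, the following Python sketch models a simplified WRED-style ECN marking curve alongside a PFC pause threshold. It is a teaching model under stated assumptions, not switch configuration: the function names, thresholds, and marking probability are hypothetical, and real values should come from the Cisco Validated Design and platform documentation.

```python
def ecn_mark_probability(queue_kb, min_kb, max_kb, max_prob):
    """Simplified WRED-style ECN curve for one queue.

    Below min_kb nothing is marked; between min_kb and max_kb the marking
    probability rises linearly up to max_prob; at or above max_kb every
    ECN-capable packet is marked.
    """
    if not 0.0 <= max_prob <= 1.0:
        raise ValueError("max_prob must be between 0 and 1")
    if min_kb >= max_kb:
        raise ValueError("min_kb must be smaller than max_kb")
    if queue_kb <= min_kb:
        return 0.0
    if queue_kb >= max_kb:
        return 1.0
    return max_prob * (queue_kb - min_kb) / (max_kb - min_kb)


def pfc_pause_needed(queue_kb, xoff_kb):
    """Return True when the queue has reached the (hypothetical) PFC xoff threshold."""
    return queue_kb >= xoff_kb


# Hypothetical thresholds chosen only to make the arithmetic easy to follow.
MIN_KB, MAX_KB, MAX_PROB, XOFF_KB = 100, 300, 0.5, 400

for depth_kb in (50, 200, 350, 450):
    prob = ecn_mark_probability(depth_kb, MIN_KB, MAX_KB, MAX_PROB)
    pause = pfc_pause_needed(depth_kb, XOFF_KB)
    print(f"queue={depth_kb} KB  ecn_mark_prob={prob:.2f}  pfc_pause={pause}")
```

Placing the ECN thresholds below the PFC threshold in this model reflects a common design goal: senders are signaled to slow down first, and pause frames act as a backstop to keep the fabric lossless.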

How do I get started?

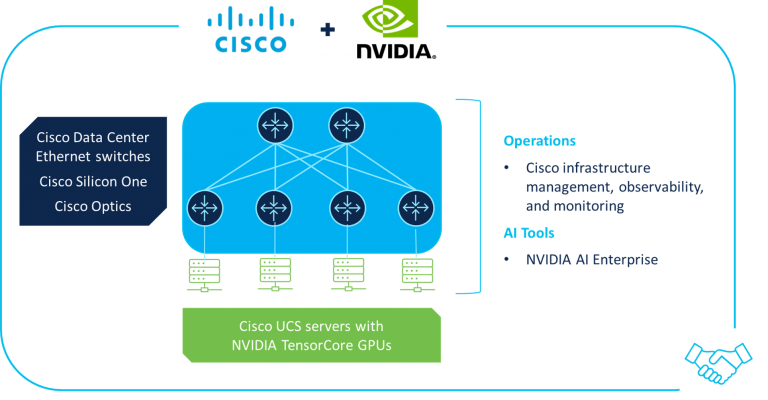

Evolving to a next-gen data center may not be simple for all customers, which is why Cisco is collaborating with NVIDIA® to deliver AI infrastructure solutions for the data center that are easy for enterprises, public sector organizations, and service providers to deploy and manage (see Figure 3).

Figure 3. Cisco/NVIDIA partnership

By combining industry-leading technologies from Cisco and NVIDIA, integrated solutions include:

- Cisco data center Ethernet infrastructure: Cisco Nexus 9000 Series switches and Cisco 8000 Series Routers, including Cisco Optics and Cisco Silicon One, for high-performance AI/ML data center network fabrics that control latency and loss to enable better experiences with timely results for AI/ML workloads

- Cisco Compute: M7 generation of UCS rack and blade servers that enable optimal compute performance across a broad array of AI and data-intensive workloads in the data center and at the edge

- Infrastructure management and operations: Cisco Networking Cloud with Cisco Nexus Dashboard and Cisco Intersight, digital experience monitoring with Cisco ThousandEyes, and cross-domain telemetry analytics with the Cisco Observability Platform

- NVIDIA Tensor Core GPUs: Latest-generation processors optimized for AI/ML workloads, used in UCS rack and blade servers

- NVIDIA BlueField-3 SuperNICs: Purpose-built network accelerators for modern AI workloads, providing high-performance network connectivity between GPU servers

- NVIDIA BlueField-3 data processing units (DPUs): Cloud infrastructure processors for offloading, accelerating, and isolating software-defined networking, storage, security, and management functions, significantly enhancing data center performance, efficiency, and security

- NVIDIA AI Enterprise: Software frameworks, pretrained models, and development tools, as well as new NVIDIA NIM microservices, for secure, stable, and supported production AI

- Cisco Validated Designs: Validated reference architectures designed to help simplify deployment and management of AI clusters at any scale in a wide range of use cases spanning virtualized and containerized environments, with both converged and hyperconverged options

- Partners: Cisco’s global ecosystem of partners can help advise, support, and guide customers in evolving their data centers to support AI/ML applications

Leading the way

Cisco’s collaboration with NVIDIA goes beyond selling existing solutions through Cisco sellers/partners, as more technological integrations are planned. Through these innovations and our work with NVIDIA, we’re helping enterprise, public sector, service provider, and web-scale customers on their data center journeys to fully enabled AI/ML infrastructures, including for training and inference models.

We’ll be at NVIDIA GTC, a global AI conference running March 18–21, so visit us at Booth #1535 to learn more.

In the next blog of this series, Jeremy Foster, SVP/GM, Cisco Compute, will discuss the Cisco Compute strategy and innovations for mainstreaming AI.

Find out more from the press release
