We’re thrilled to announce Unity Catalog Lakeguard, which lets you run Apache Spark™ workloads in SQL, Python, and Scala with full data governance on the Databricks Data Intelligence Platform’s cost-efficient, multi-user compute. Historically, enforcing governance meant using single-user clusters, which add cost and operational overhead. With Lakeguard, user code runs in full isolation from every other user’s code and from the Spark engine on shared compute, so data governance is enforced at runtime. This lets you securely share clusters across your teams, reducing compute cost and minimizing operational toil.

Lakeguard has been an integral part of Unity Catalog since its introduction: we steadily expanded the capabilities to run arbitrary code on shared clusters, with Python UDFs in DBR 13.1, Scala support in DBR 13.3 and, finally, Scala UDFs in DBR 14.3. Python UDFs in Databricks SQL warehouses are also secured by Lakeguard! With that, Databricks customers can run workloads in SQL, Python and Scala, including UDFs, on multi-user compute with full data governance.

In this blog post, we give a detailed overview of Unity Catalog’s Lakeguard and how it enhances Apache Spark™ with data governance.

Lakeguard enforces data governance for Apache Spark™

Apache Spark is the world’s most popular distributed data processing framework. As Spark usage grows alongside enterprises’ focus on data, so does the need for data governance. For example, a common use case is to limit the visibility of data between different departments, such as finance and HR, or to secure PII data using fine-grained access controls such as views or column- and row-level filters on tables. For Databricks customers, Unity Catalog offers comprehensive governance and lineage for all tables, views, and machine learning models on any cloud.
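To make the two kinds of fine-grained controls concrete, here is a minimal, hypothetical Python sketch: a row-level filter decides which rows a user may see, and a column mask rewrites sensitive values for users who lack the right group membership. The `Policy` class, the group names, and the sample data are invented for illustration; this is a toy model, not Unity Catalog’s API, where such rules are defined in the catalog and enforced by the engine at query time.

```python
from dataclasses import dataclass, field
from typing import Callable

Row = dict[str, object]


@dataclass
class Policy:
    """Hypothetical per-table policy: one row filter plus optional column masks."""

    # Returns True if the row is visible to a user belonging to `groups`.
    row_filter: Callable[[Row, set[str]], bool]
    # Maps a column name to a function that rewrites its value for `groups`.
    column_masks: dict[str, Callable[[object, set[str]], object]] = field(default_factory=dict)

    def apply(self, rows: list[Row], groups: set[str]) -> list[Row]:
        visible = []
        for row in rows:
            if not self.row_filter(row, groups):
                continue  # row-level filter: drop rows the user may not see
            masked = dict(row)  # copy so the stored row is never modified
            for column, mask in self.column_masks.items():
                if column in masked:
                    masked[column] = mask(masked[column], groups)  # column mask
            visible.append(masked)
        return visible


employees = [
    {"name": "Ada", "department": "finance", "ssn": "123-45-6789"},
    {"name": "Grace", "department": "hr", "ssn": "987-65-4321"},
]

policy = Policy(
    # Hypothetical rule: HR admins see every row, others only their own department.
    row_filter=lambda row, groups: "hr_admins" in groups or row["department"] in groups,
    # Hypothetical rule: only HR admins see raw SSNs; others see the last four digits.
    column_masks={
        "ssn": lambda value, groups: value if "hr_admins" in groups else "***-**-" + str(value)[-4:]
    },
)

print(policy.apply(employees, {"finance"}))
# [{'name': 'Ada', 'department': 'finance', 'ssn': '***-**-6789'}]
print(len(policy.apply(employees, {"hr_admins"})))  # 2
```

Note that the policy only protects data if the code calling `apply` cannot also read `employees` directly; that gap is exactly the runtime-enforcement problem discussed next.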

Once data governance is defined in Unity Catalog, governance rules must be enforced at runtime. The biggest technical challenge is that Spark does not offer a mechanism for isolating user code. Different users share the same execution environment, the Java Virtual Machine (JVM), opening up a potential path for leaking data across users. Cloud-hosted Spark services get around this problem by creating dedicated per-user clusters, which bring two major problems: increased infrastructure costs and increased management overhead, since administrators have to define and manage more clusters. Furthermore, Spark was not designed with fine-grained access control in mind: when querying a view, Spark “overfetches” files, i.e., it fetches all files of the underlying tables used by the view. As a consequence, users could potentially read data they have not been granted access to.

At Databricks, we solved this problem with shared clusters that use Lakeguard under the hood. Lakeguard transparently enforces data governance at the compute level, guaranteeing that each user’s code runs in full isolation from every other user’s code and from the underlying Spark engine. Lakeguard is also used to isolate Python UDFs in the Databricks SQL warehouse. With that, Databricks is the first and only platform in the industry that supports secure sharing of compute for SQL, Python and Scala workloads with full data governance, including enforcement of fine-grained access control using views and column-level and row-level filters.

Lakeguard: Isolating user code with state-of-the-art sandboxing

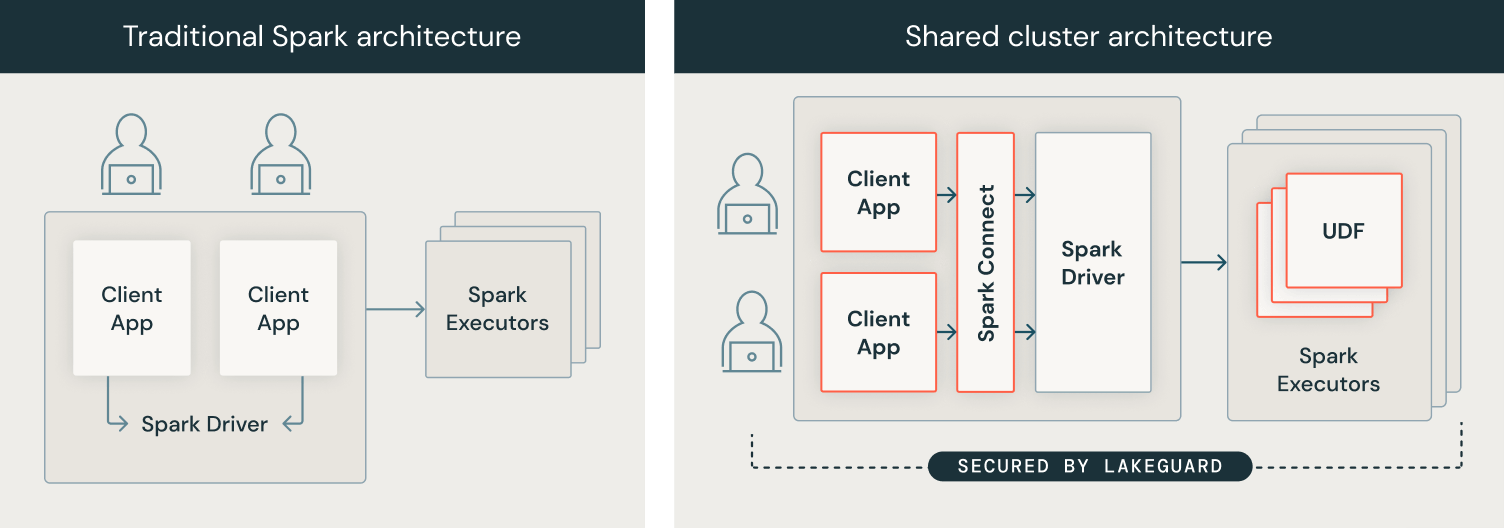

To enforce data governance at the compute level, we evolved our compute architecture from a security model where users share a JVM to a model where each user’s code runs in full isolation from other users’ code and from the underlying Spark engine, so that data governance is always enforced. We achieved this by isolating all user code from (1) the Spark driver and (2) the Spark executors. The image above shows how, in the traditional Spark architecture (left), users’ client applications share a JVM with privileged access to the underlying machine, whereas with Shared Clusters (right), all user code is fully isolated using secure containers. With this architecture, Databricks securely runs multiple workloads on the same cluster, offering a collaborative, cost-efficient, and secure solution.

Spark Client: User code isolation with Spark Connect and sandboxed client applications

To isolate the client applications from the Spark driver, we first had to decouple the two and then isolate the individual client applications from each other and from the underlying machine, with the goal of introducing a fully trusted and reliable boundary between individual users and Spark:

- Spark Connect: To achieve user code isolation on the client side, we use Spark Connect, which was open-sourced in Apache Spark 3.4. Spark Connect was introduced to decouple the client application from the driver so that they no longer share the same JVM or classpath and can be developed and run independently, leading to better stability and upgradability and enabling remote connectivity. With this decoupled architecture, we can enforce fine-grained access control, because “over-fetched” data used to process queries over views or tables with row-level/column-level filters can no longer be accessed from the client application.

- Sandboxing client applications: As a next step, we ensured that individual client applications, i.e., user code, cannot access each other’s data or the underlying machine. We did this by building a lightweight sandboxed execution environment for client applications using state-of-the-art container-based sandboxing techniques. Today, each client application runs in full isolation in its own container.
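Why decoupling helps against over-fetching can be illustrated with a toy model. In the hypothetical Python sketch below, a trusted server-side function scans every file of the table behind a view (the over-fetch), applies the view’s predicate, and serializes only the result; the client side sees nothing but those bytes. `TABLE_FILES`, `view_predicate`, `serve_view_query`, and `client_query` are invented names, and in this single-process toy the boundary is only a convention. Real Spark Connect sends unresolved query plans to the driver over gRPC and streams results back as Arrow batches, and Lakeguard additionally runs the client in its own container.

```python
import json

# Hypothetical table stored as several files; the view should expose only finance rows.
TABLE_FILES = {
    "part-0.json": [{"id": 1, "dept": "finance", "salary": 100}],
    "part-1.json": [{"id": 2, "dept": "hr", "salary": 200}],
    "part-2.json": [{"id": 3, "dept": "finance", "salary": 300}],
}


def view_predicate(row: dict) -> bool:
    """Hypothetical view definition: SELECT * FROM table WHERE dept = 'finance'."""
    return row["dept"] == "finance"


def serve_view_query() -> tuple[str, int]:
    """Trusted side: over-fetch all files, filter, and serialize only the result.

    Returns the JSON payload sent to the client and the number of rows scanned.
    """
    scanned = [row for rows in TABLE_FILES.values() for row in rows]  # over-fetch
    result = [row for row in scanned if view_predicate(row)]
    return json.dumps(result), len(scanned)


def client_query() -> list[dict]:
    """Untrusted side: only the serialized payload crosses the boundary."""
    payload, _scanned = serve_view_query()
    return json.loads(payload)


print([row["id"] for row in client_query()])  # [1, 3]
```

The server reads three rows but only two leave it; in the shared-JVM model, the third row would sit in memory that user code could reach.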

Spark Executors: Sandboxed executor isolation for UDFs

Similarly to the Spark driver, Spark executors do not enforce isolation of user-defined functions (UDFs). For example, a Scala UDF could write arbitrary files to the file system because of its privileged access to the machine. Analogously to the client application, we sandboxed the execution environment on Spark executors in order to securely run Python and Scala UDFs. We also isolate egress network traffic from the rest of the system. Finally, so that users can use their libraries in UDFs, we securely replicate the client environment into the UDF sandboxes. As a result, UDFs on shared clusters run in full isolation, and Lakeguard is also used for Python UDFs in the Databricks SQL data warehouse.
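As a rough analogy for running a UDF outside the engine’s own process, the Python sketch below executes user-supplied UDF source in a separate interpreter that receives only serialized input rows and an empty environment, and returns only serialized output. This is an illustrative assumption, not how Lakeguard is implemented: a plain child process is far weaker than a container sandbox (it can still reach the file system and the network), and `run_udf_isolated`, `UDF_RUNNER`, and `DEMO_HOST_SECRET` are hypothetical names.

```python
import json
import os
import subprocess
import sys

# Program run inside the child interpreter: it reads the UDF source and input rows
# from stdin, applies the UDF to each row, and writes the results to stdout as JSON.
UDF_RUNNER = r"""
import json, sys
payload = json.load(sys.stdin)
namespace = {}
exec(payload["source"], namespace)
udf = namespace[payload["name"]]
json.dump([udf(value) for value in payload["rows"]], sys.stdout)
"""


def run_udf_isolated(source: str, name: str, rows: list, timeout: float = 30.0) -> list:
    """Run the UDF `name` defined in `source` over `rows` in a separate interpreter.

    The child inherits no environment variables (only SYSTEMROOT on Windows, which
    Python needs to start) and runs in isolated mode (-I), so host secrets stored in
    os.environ are not visible to the UDF.
    """
    child_env = {k: os.environ[k] for k in ("SYSTEMROOT",) if k in os.environ}
    completed = subprocess.run(
        [sys.executable, "-I", "-c", UDF_RUNNER],
        input=json.dumps({"source": source, "name": name, "rows": rows}),
        capture_output=True,
        text=True,
        env=child_env,
        timeout=timeout,
        check=True,  # raise CalledProcessError if the UDF fails
    )
    return json.loads(completed.stdout)


double_src = "def double(x):\n    return x * 2\n"
print(run_udf_isolated(double_src, "double", [1, 2, 3]))  # [2, 4, 6]

# A secret set in the host process is not visible inside the child.
os.environ["DEMO_HOST_SECRET"] = "s3cr3t"
peek_src = "import os\ndef peek(_):\n    return os.environ.get('DEMO_HOST_SECRET')\n"
print(run_udf_isolated(peek_src, "peek", [0]))  # [None]
```

Passing data only as serialized messages keeps the UDF from touching the engine’s memory; a real sandbox would also restrict file system and network access, which this sketch does not attempt.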

Save time and cost today with Unity Catalog and Shared Clusters

We invite you to try Shared Clusters today to collaborate with your team and save cost. Lakeguard is an integral component of Unity Catalog and has been enabled for all customers using Shared Clusters, Delta Live Tables (DLT) and Databricks SQL with Unity Catalog.