One of the most inspiring parts of my role is traveling around the globe, meeting our customers from every sector, and seeing, learning, and collaborating with them as they build GenAI solutions and put them into production. It’s exciting to see our customers actively advancing their GenAI journey. But many in the market are not, and the gap is growing.

AI leaders are rightfully struggling to move beyond the prototype and experimental stage, and it’s our mission to change that. At DataRobot, we call this the “confidence gap.” It’s the trust, safety, and accuracy concerns surrounding GenAI that are holding teams back, and we’re committed to addressing them. It’s also the core focus of our Spring ’24 release and its groundbreaking features.

This release focuses on the three most critical hurdles to unlocking value with GenAI.

First, we’re bringing you enterprise-grade open-source LLM support and a suite of evaluation and testing metrics to help you and your teams confidently create production-grade AI applications. Second, to help you safeguard your reputation and prevent risk from AI apps running amok, we’re bringing you real-time intervention and moderation for all of your GenAI applications. And finally, to ensure your entire fleet of AI assets stays at peak performance, we’re bringing you first-of-its-kind multi-cloud and hybrid AI Observability to help you fully govern and optimize all of your AI investments.

Confidently Create Production-Grade AI Applications

There’s a lot of talk about fine-tuning an LLM. However, we have seen that the real value lies in fine-tuning your generative AI application. It’s challenging, though. Unlike predictive AI, which has thousands of easily accessible models and common data science metrics to benchmark and assess performance against, generative AI has had no such foundation. Until now.

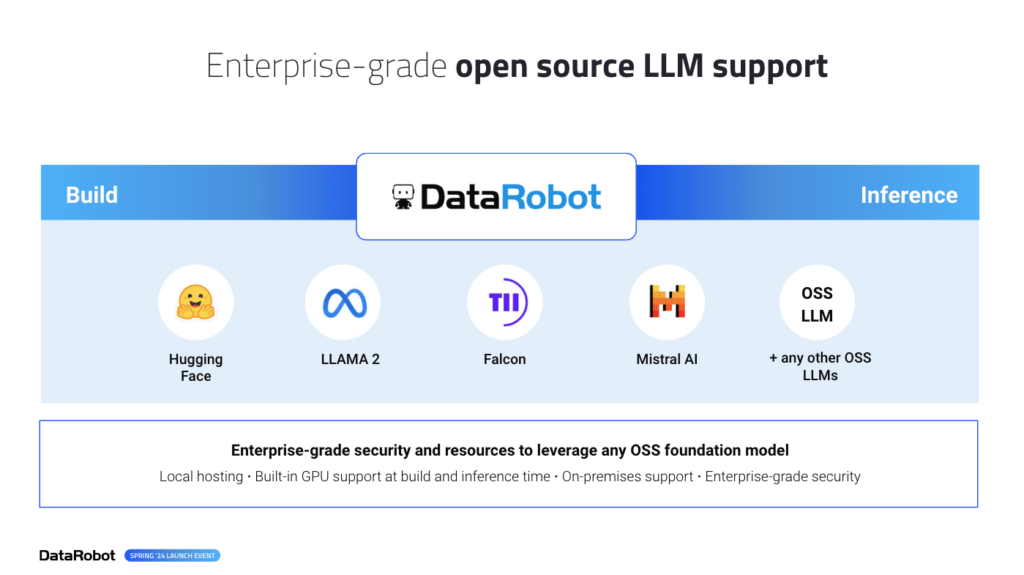

In our Spring ’24 release, you get enterprise-grade support for any open-source LLM. We’ve also introduced a complete set of LLM evaluation, testing, and assessment metrics. Now you can fine-tune your generative AI application experiences, ensuring their reliability and effectiveness.

Enterprise-Grade Open-Source LLM Hosting

Privacy, control, and flexibility remain critical for all organizations when it comes to LLMs. There has been no easy answer for AI leaders, who have been stuck choosing between three options: accepting the vendor lock-in risks of major API-based LLMs that could become sub-optimal and expensive in the near future, figuring out how to stand up and host their own open-source LLM, or custom-building, hosting, and maintaining their own LLM.

With our Spring Release, you have access to the broadest selection of LLMs, allowing you to choose the one that aligns with your security requirements and use cases. Not only do you have ready-to-use access to LLMs from leading providers like Amazon, Google, and Microsoft, but you also have the flexibility to host your own custom LLMs. Additionally, our Spring ’24 Release offers enterprise-level access to open-source LLMs, further expanding your options.

We’ve made hosting and using open-source foundational models like LLaMa, Falcon, and Mistral, including models from Hugging Face, easy with DataRobot’s built-in LLM security and resources. We’ve eliminated the complex, labor-intensive manual DevOps integrations that used to be required and made it as easy as a drop-down selection.

LLM Evaluation, Testing and Assessment Metrics

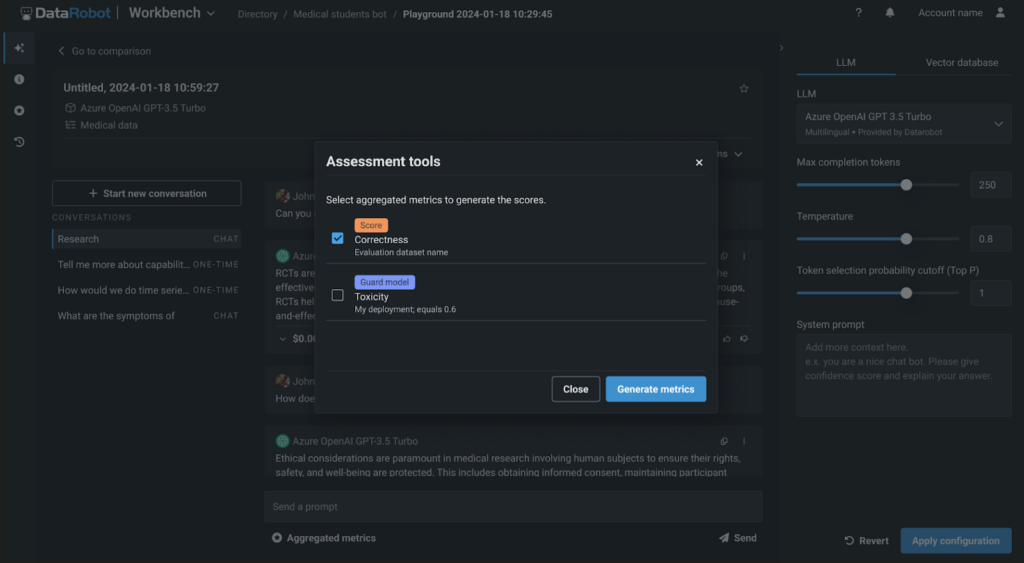

With DataRobot, you can freely choose and experiment across LLMs. We also give you advanced experimentation options, such as trying various chunking strategies, embedding methods, and vector databases. With our new LLM evaluation, testing, and assessment metrics, you and your teams now have a clear way of validating the quality of your GenAI application and LLM performance across these experiments.
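To make “chunking strategies” concrete, here is a minimal Python sketch of two common approaches: fixed-size character windows with overlap, and greedy packing of whole sentences. It is an illustration under our own assumptions, not DataRobot’s API; the function names and parameters are hypothetical, and real pipelines usually count tokens rather than characters.

```python
import re
from typing import List


def fixed_size_chunks(text: str, size: int, overlap: int) -> List[str]:
    """Split text into windows of `size` characters; consecutive windows share `overlap` characters."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("require size > 0 and 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + size])
        if start + size >= len(text):  # this window already reached the end of the text
            break
    return chunks


def sentence_chunks(text: str, max_chars: int) -> List[str]:
    """Greedily pack whole sentences into chunks of at most `max_chars` characters.

    A single sentence longer than `max_chars` is kept intact as its own chunk.
    """
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    chunks: List[str] = []
    current = ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            chunks.append(current)  # close the current chunk and start a new one
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks
```

Swapping one chunker for the other while holding the LLM and vector database fixed is the kind of single-variable experiment that evaluation metrics are meant to compare.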

With our first-of-its-kind synthetic data generation for prompt-and-answer evaluation, you can quickly and effortlessly create thousands of question-and-answer pairs. This lets you easily see how well your RAG experiment performs and stays true to your vector database.

We’re also giving you a complete set of evaluation metrics. You can benchmark, compare performance, and rank your RAG experiments based on faithfulness, correctness, and other metrics to create high-quality, valuable GenAI applications.
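For intuition about how RAG experiments might be ranked, the sketch below scores each experiment on a set of question-and-answer pairs with two deliberately simple proxies: “faithfulness” as the share of answer tokens that appear in the retrieved context, and “correctness” as token-level F1 against a reference answer. These are toy stand-ins chosen for illustration; they are not how DataRobot computes its metrics, and the experiment names and data layout are hypothetical.

```python
import re
from collections import Counter
from statistics import mean
from typing import Dict, List, Tuple


def tokens(text: str) -> List[str]:
    """Lowercase alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def faithfulness(answer: str, context: str) -> float:
    """Toy proxy: share of answer tokens that also appear in the retrieved context."""
    ans = tokens(answer)
    if not ans:
        return 0.0
    ctx = set(tokens(context))
    return sum(t in ctx for t in ans) / len(ans)


def correctness(answer: str, reference: str) -> float:
    """Toy proxy: token-level F1 between the generated and reference answers."""
    ans, ref = tokens(answer), tokens(reference)
    common = sum((Counter(ans) & Counter(ref)).values())
    if common == 0:
        return 0.0
    precision, recall = common / len(ans), common / len(ref)
    return 2 * precision * recall / (precision + recall)


# Each row: (generated answer, retrieved context, reference answer).
Row = Tuple[str, str, str]


def rank_experiments(results: Dict[str, List[Row]]) -> List[Tuple[str, Dict[str, float]]]:
    """Average both proxies per experiment and sort best-first by their mean."""
    scores = {
        name: {
            "faithfulness": mean(faithfulness(a, c) for a, c, _ in rows),
            "correctness": mean(correctness(a, r) for a, _, r in rows),
        }
        for name, rows in results.items()
    }
    return sorted(scores.items(), key=lambda kv: mean(kv[1].values()), reverse=True)
```

Averaging the two proxies with equal weight is an arbitrary choice here; a team might instead require a minimum faithfulness before comparing correctness at all.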

And with DataRobot, it’s always about choice. You can do all of this in low code or in our fully hosted notebooks, which also have a rich set of new codespace functionality that eliminates infrastructure and resource management and facilitates easy collaboration.

Observe and Intervene in Real Time

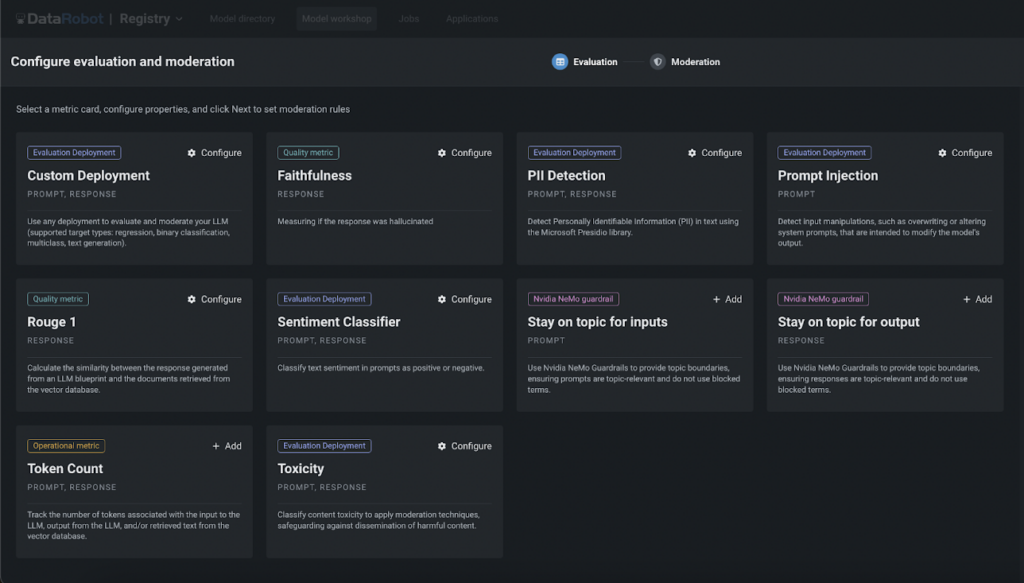

The biggest concern I hear from AI leaders about generative AI is reputational risk. There are already plenty of news articles about GenAI applications exposing private data and courts holding companies accountable for the promises their GenAI applications made. In our Spring ’24 Release, we’ve addressed this issue head-on.

With our rich library of customizable guards, workflows, and notifications, you can build a multi-layered defense to detect and prevent unexpected or unwanted behaviors across your entire fleet of GenAI applications in real time.

Our library of pre-built guards can be fully customized to prevent prompt injections and toxicity, detect PII, mitigate hallucinations, and more. Our moderation guards and real-time intervention can be applied to all of your generative AI applications, even those built outside of DataRobot, giving you peace of mind that your AI assets will perform as intended.
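A multi-layered defense can be pictured as a chain of guards, each of which either blocks a message or passes along a possibly modified version. The sketch below assumes two hypothetical guards: a phrase-based prompt-injection check and a regex-based PII redactor for email addresses and US Social Security numbers. Production guards typically rely on trained classifiers rather than keyword lists, so treat this as an outline of the control flow only, not DataRobot’s guard library.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class GuardResult:
    blocked: bool                 # True stops the message from going any further
    text: str                     # the (possibly modified) message
    reason: Optional[str] = None  # why it was blocked, if it was


# Hypothetical heuristics; real guards usually use trained classifiers.
INJECTION_PHRASES = (
    "ignore previous instructions",
    "ignore all previous instructions",
    "disregard the system prompt",
)
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def injection_guard(text: str) -> GuardResult:
    """Block messages containing a known prompt-injection phrase."""
    lowered = text.lower()
    for phrase in INJECTION_PHRASES:
        if phrase in lowered:
            return GuardResult(True, text, f"possible prompt injection: {phrase!r}")
    return GuardResult(False, text)


def pii_redaction_guard(text: str) -> GuardResult:
    """Redact email addresses and US SSNs instead of blocking the message."""
    redacted = US_SSN.sub("[REDACTED SSN]", EMAIL.sub("[REDACTED EMAIL]", text))
    return GuardResult(False, redacted)


Guard = Callable[[str], GuardResult]
GUARDS: List[Guard] = [injection_guard, pii_redaction_guard]


def run_guards(text: str, guards: List[Guard]) -> GuardResult:
    """Apply guards in order; the first guard that blocks short-circuits the chain."""
    for guard in guards:
        result = guard(text)
        if result.blocked:
            return result
        text = result.text  # later guards see the modified text
    return GuardResult(False, text)
```

Ordering matters in a chain like this: the blocking check runs first so that a rejected prompt never reaches the model, while redaction lets benign messages through with sensitive values masked.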

Govern and Optimize Infrastructure Investments

Because of generative AI, the number of new AI tools, projects, and teams working on them has grown exponentially. I often hear about “shadow GenAI” projects and how AI leaders and IT teams struggle to rein it all in. They find it challenging to get a comprehensive view, a problem compounded by complex multi-cloud and hybrid environments. The lack of AI observability opens organizations up to AI misuse and security risks.

Cross-Environment AI Observability

We’re here to help you thrive in this new normal, where AI exists in multiple environments and locations. With our Spring ’24 Release, we’re bringing you first-of-its-kind cross-environment AI observability, giving you unified security, governance, and visibility across cloud and on-premises environments.

Your teams get to work in the tools and systems they want; AI leaders get the unified governance, security, and observability they need to protect their organizations.

Our customizable alerts and notification policies integrate with the tools of your choice, from ITSM to Jira and Slack, to help you reduce time-to-detection (TTD) and time-to-resolution (TTR).
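Definitions of TTD and TTR vary between teams. Assuming TTD is measured from when an issue starts to when an alert detects it, and TTR from detection to resolution, the averages over a set of incidents can be computed as in this small sketch (the incident fields are hypothetical).

```python
from datetime import datetime, timedelta
from statistics import mean
from typing import Dict, Iterable, List, Tuple


def mean_minutes(deltas: Iterable[timedelta]) -> float:
    """Average a collection of durations, expressed in minutes."""
    return mean(d.total_seconds() / 60 for d in deltas)


def ttd_ttr(incidents: List[Dict[str, datetime]]) -> Tuple[float, float]:
    """Return (mean TTD, mean TTR) in minutes.

    Assumes TTD = detected - started and TTR = resolved - detected.
    """
    ttd = mean_minutes(i["detected"] - i["started"] for i in incidents)
    ttr = mean_minutes(i["resolved"] - i["detected"] for i in incidents)
    return ttd, ttr
```

For example, two incidents detected 30 and 10 minutes after they start, and resolved 60 and 30 minutes after detection, give a mean TTD of 20 minutes and a mean TTR of 45 minutes. Faster alert routing shrinks the first number; better diagnostics shrink the second.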

Insights and visuals help your teams see, diagnose, and troubleshoot issues with your AI assets. Trace prompts to the response and the content in your vector database with ease, see generative AI topic drift with multi-language diagnostics, and more.

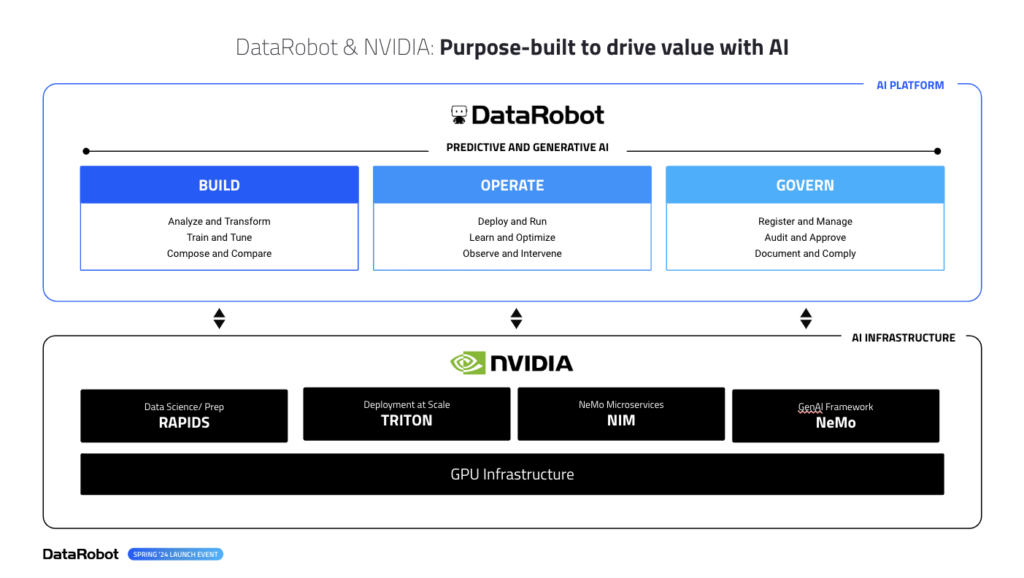

NVIDIA and GPU Integrations

And if you’ve made investments in NVIDIA, we’re the first and only AI platform to have deep integrations across the entire surface area of NVIDIA’s AI Infrastructure, from NIMs, to NeMoGuard models, to their new Triton inference services, all ready for you at the click of a button. No more managing separate installs or integration points: DataRobot makes accessing your GPU investments easy.

Our Spring ’24 release is packed with exciting features, including GenAI and predictive capabilities and enhancements in time series forecasting, multimodal modeling, and data wrangling.

All of these new features are available in cloud, on-premises, and hybrid environments. So whether you’re an AI leader or part of an AI team, our Spring ’24 release sets the foundation for your success.

This is just the beginning. We have much more in store for you in the months ahead, so stay tuned: we’re hard at work on the next wave of innovations.

Get Started

Learn more about DataRobot’s GenAI solutions and accelerate your journey today.

- Join our Catalyst program to accelerate your AI adoption and unlock the full potential of GenAI for your organization.

- See DataRobot’s GenAI solutions in action by scheduling a demo tailored to your specific needs and use cases.

- Explore our new features, and connect with your dedicated DataRobot Applied AI Expert to get started with them.

About the author

Venky Veeraraghavan leads the Product Team at DataRobot, where he drives the definition and delivery of DataRobot’s AI platform. Venky has over twenty-five years of experience as a product leader, with previous roles at Microsoft and early-stage startup Trilogy. Venky has spent over a decade building hyperscale big data and AI platforms for some of the largest and most complex organizations in the world. He lives, hikes, and runs in Seattle, WA, with his family.