What is a dead letter queue (DLQ)?

Cloudera SQL Stream Builder (SSB) gives non-technical users the power of a unified stream processing engine so they can integrate, aggregate, query, and analyze both streaming and batch data sources in a single SQL interface. This allows business users to define events of interest that they need to monitor continuously and respond to quickly. A dead letter queue (DLQ) can be used if there are deserialization errors when events are consumed from a Kafka topic. A DLQ is useful for seeing whether there are any failures due to invalid input in the source Kafka topic, and it makes it possible to record and debug problems related to invalid inputs.

Creating a DLQ

We’ll use the example schema definition provided by SSB to demonstrate this feature. The schema has two properties, “name” and “temp” (for temperature), to capture sensor data in JSON format. The first step is to create two Kafka topics, “sensor_data” and “sensor_data_dlq”, which can be done the following way:

kafka-topics.sh --bootstrap-server <bootstrap-server> --create --topic sensor_data --replication-factor 1 --partitions 1

kafka-topics --bootstrap-server <bootstrap-server> --create --topic sensor_data_dlq --replication-factor 1 --partitions 1

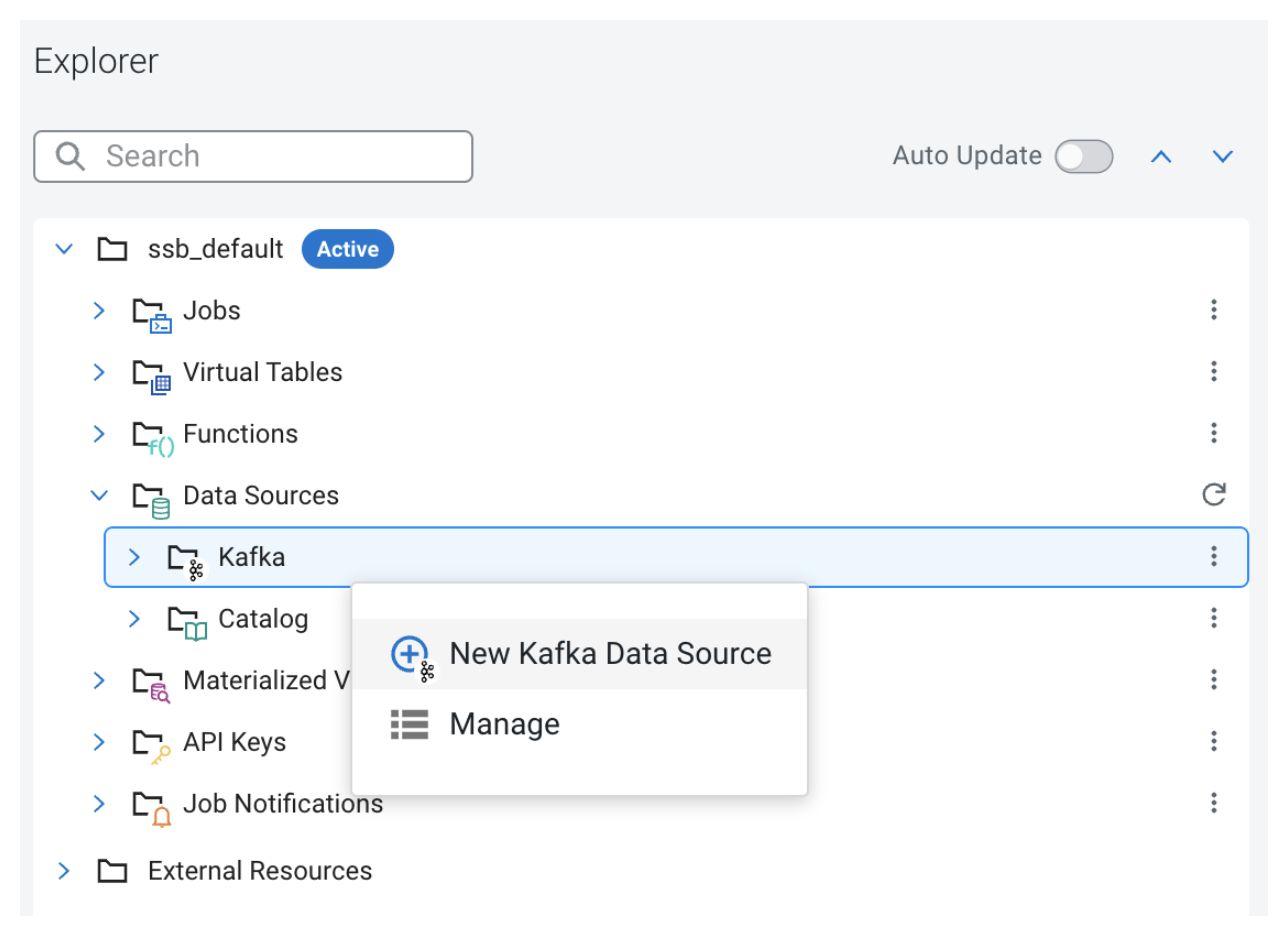

Once the Kafka topics are created, we can set up a Kafka source in SSB. SSB provides a convenient way to work with Kafka, as we can do the whole setup using the UI. In the Project Explorer, open the Data Sources folder. Right-clicking on “Kafka” brings up the context menu where we can open the creation modal window.

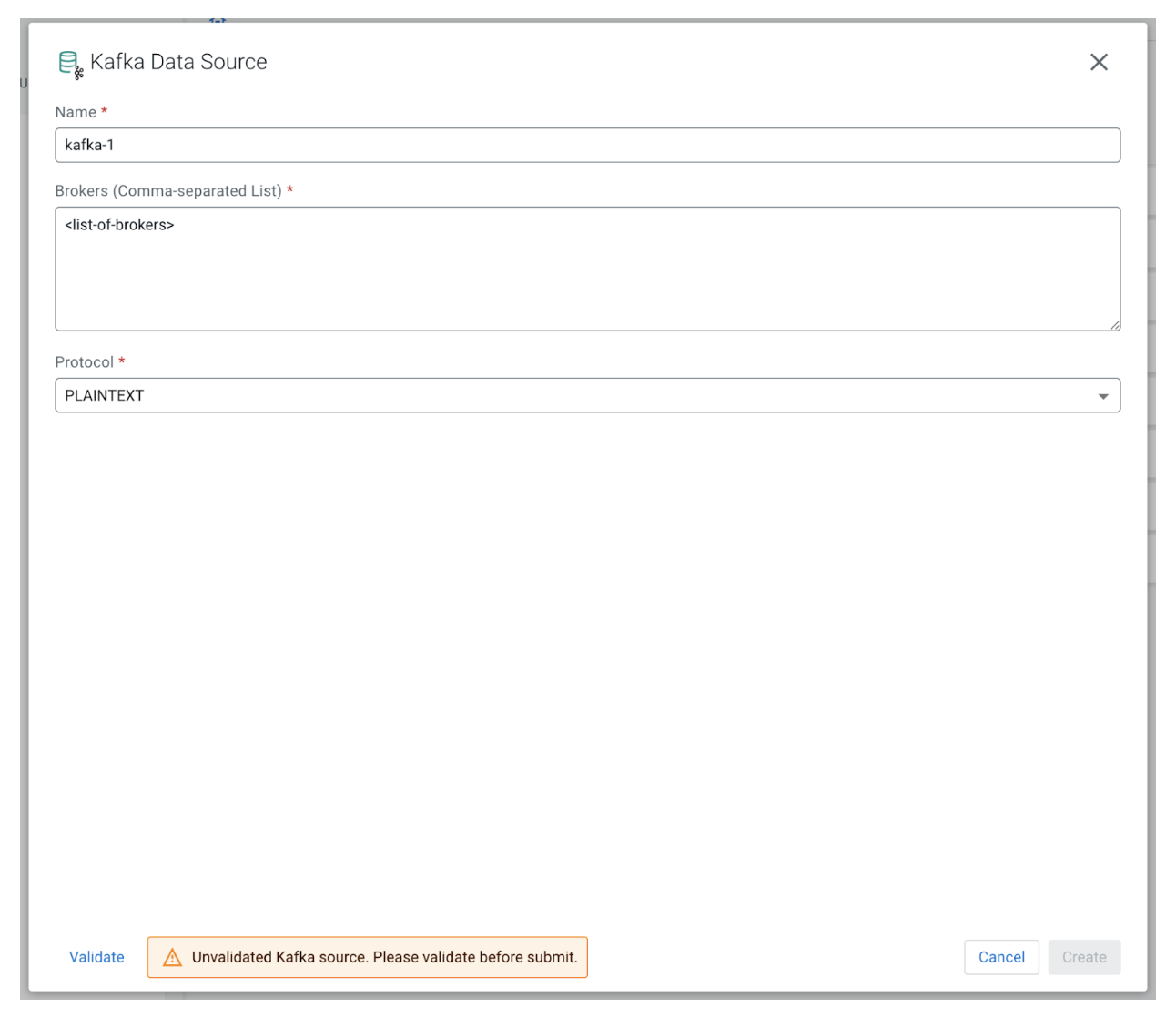

We need to provide a unique name for this new data source, the list of brokers, and the protocol in use:

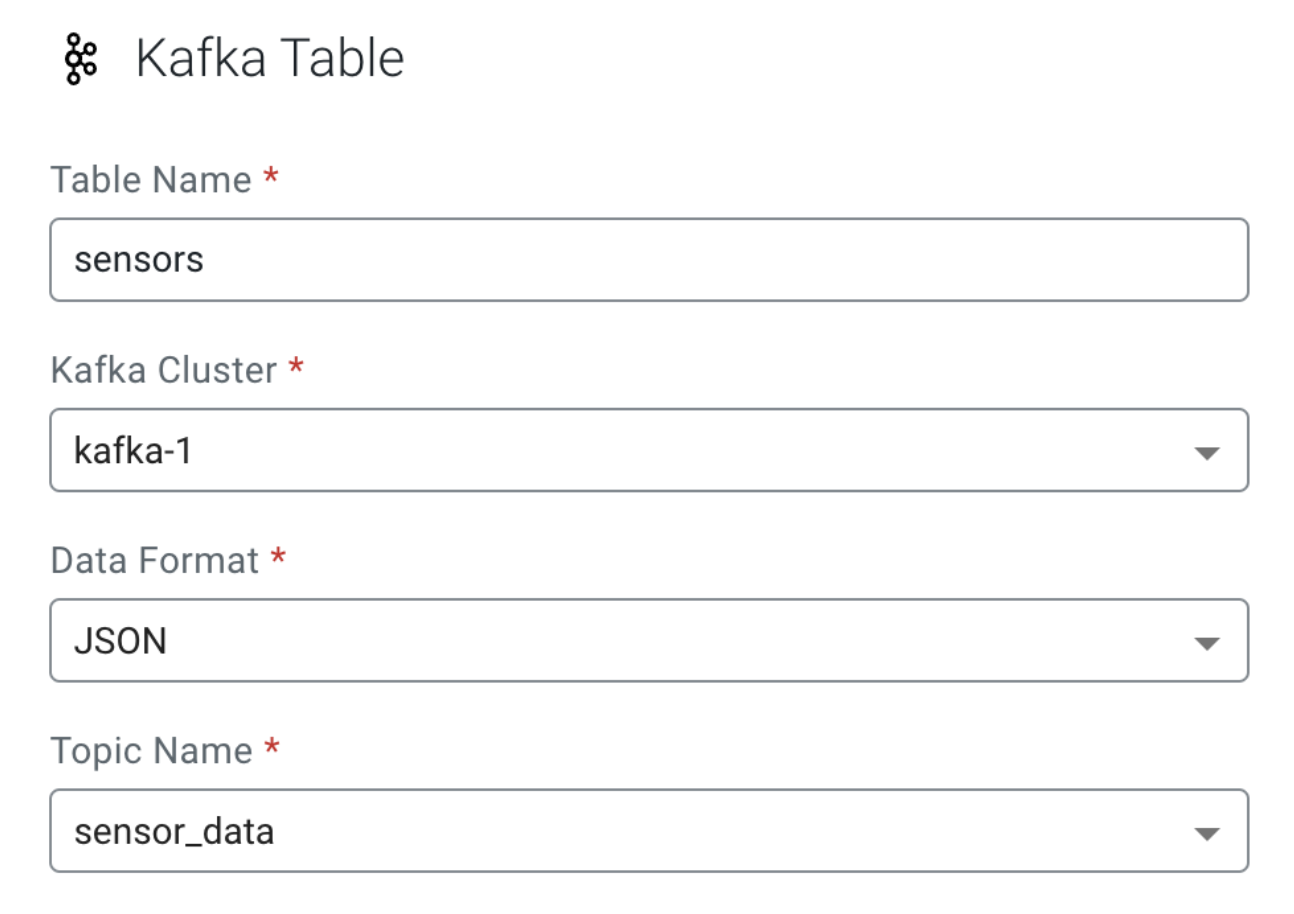

After the new Kafka source is successfully registered, the next step is to create a new virtual table. We can do that from the Project Explorer by right-clicking “Virtual Tables” and choosing “New Kafka Table” from the context menu. Let’s fill out the form with the following values:

- Table Name: Any unique name; we will use “sensors” in this example

- Kafka Cluster: Choose the Kafka source registered in the previous step

- Data Format: JSON

- Topic Name: “sensor_data”, which we created earlier

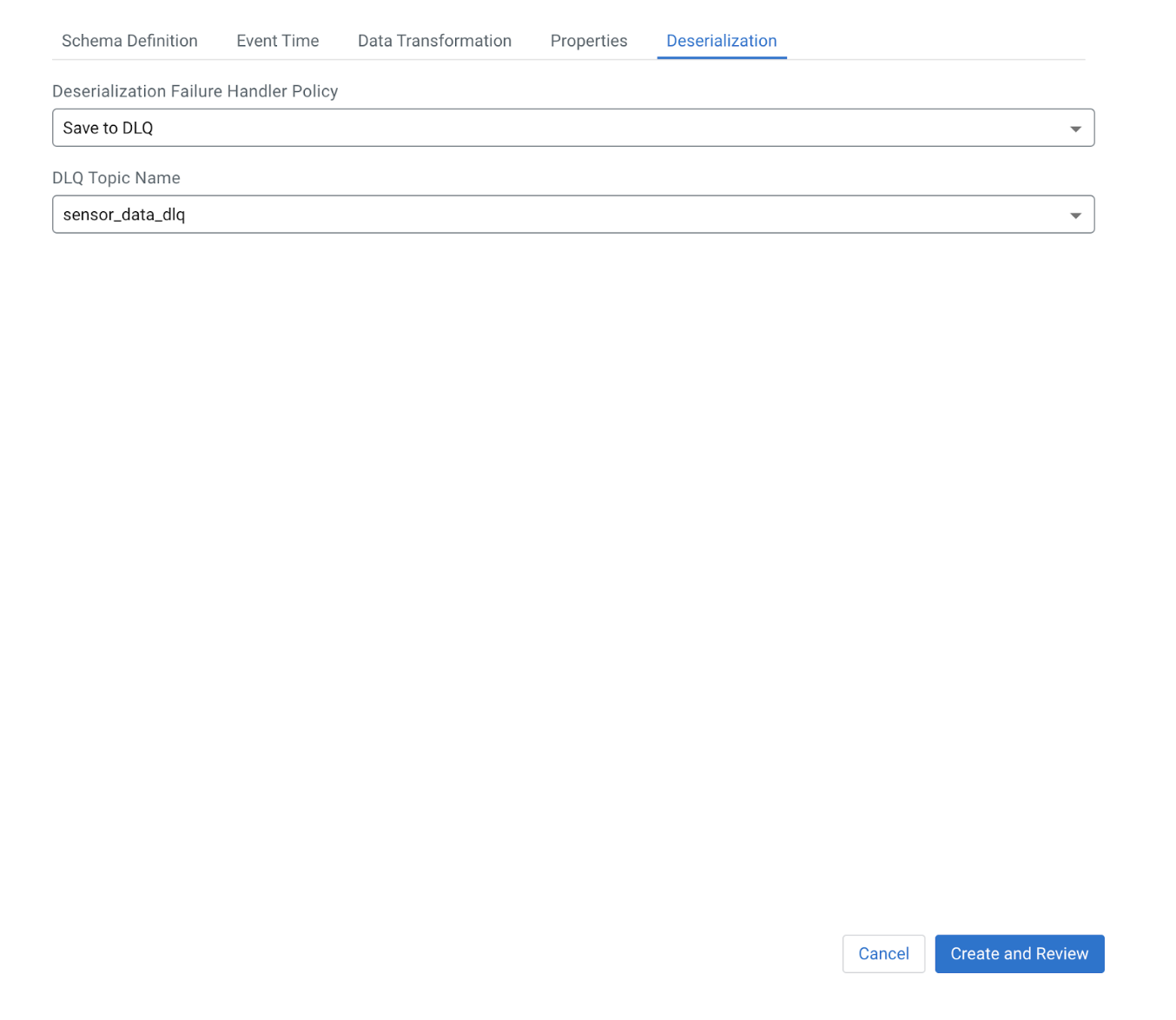

We can see under the “Schema Definition” tab that the example provided has the two fields, “name” and “temp,” as discussed earlier. The last step is to set up the DLQ functionality, which we can do by going to the “Deserialization” tab. The “Deserialization Failure Handler Policy” drop-down has the following options:

- “Fail”: Lets the job crash; the auto-restart setting then dictates what happens next

- “Ignore”: Ignores the message that could not be deserialized and moves on to the next one

- “Ignore and Log”: Same as “Ignore”, but logs each time it encounters a deserialization failure

- “Save to DLQ”: Sends the invalid message to the specified Kafka topic
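To make the difference between the four policies concrete, here is a minimal Python sketch of how a consumer might apply them. It is not SSB's or Flink's implementation: the `FailurePolicy` enum, the `consume` function, and the plain list standing in for the DLQ topic are all hypothetical names introduced for illustration, and plain JSON parsing stands in for schema-aware deserialization.

```python
import json
import logging
from enum import Enum

logger = logging.getLogger("dlq_sketch")


class FailurePolicy(Enum):
    """Hypothetical mirror of the four drop-down options."""
    FAIL = "Fail"
    IGNORE = "Ignore"
    IGNORE_AND_LOG = "Ignore and Log"
    SAVE_TO_DLQ = "Save to DLQ"


def consume(raw_messages, policy, dlq):
    """Deserialize JSON messages, applying `policy` to any that fail.

    `dlq` is a plain list standing in for the DLQ Kafka topic.
    Returns the list of successfully deserialized records.
    """
    records = []
    for raw in raw_messages:
        try:
            records.append(json.loads(raw))
        except json.JSONDecodeError as err:
            if policy is FailurePolicy.FAIL:
                raise  # the job crashes; restart behavior is decided elsewhere
            if policy is FailurePolicy.IGNORE_AND_LOG:
                logger.warning("Skipping undeserializable message: %s", err)
            elif policy is FailurePolicy.SAVE_TO_DLQ:
                dlq.append(raw)  # keep the original payload for debugging
            # IGNORE: nothing to do, move on to the next message
    return records
```

With “Save to DLQ”, the valid records still flow through while the raw invalid payloads are preserved, which is what makes later debugging possible.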

Let’s select “Save to DLQ” and choose the previously created “sensor_data_dlq” topic from the “DLQ Topic Name” drop-down. We can click “Create and Review” to create the new virtual table.

Testing the DLQ

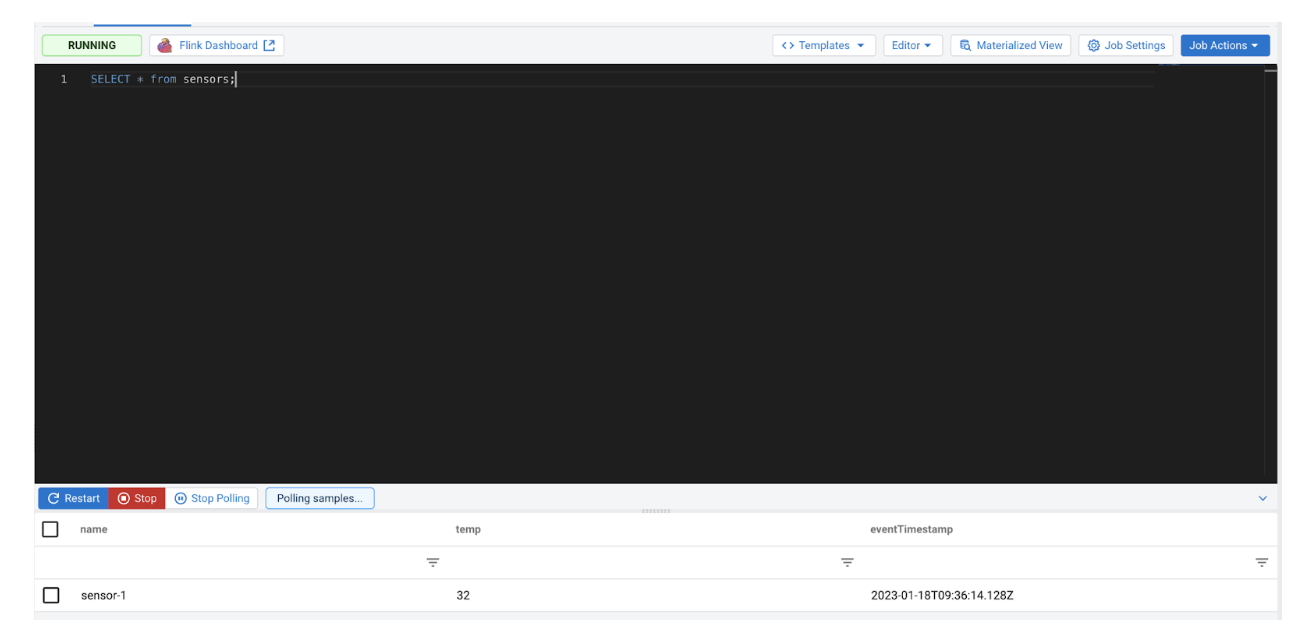

First, create a new SSB job from the Project Explorer. We can run the following SQL query to consume the data from the Kafka topic:

SELECT * from sensors;

In the next step we will use the console producer and consumer command line tools to interact with Kafka. Let’s send a valid input to the “sensor_data” topic and check whether it is consumed by our running job.

kafka-console-producer.sh --broker-list <broker> --topic sensor_data
>{"name":"sensor-1", "temp": 32}

Checking back on the SSB UI, we can see that the new message has been processed:

Now, send an invalid input to the source Kafka topic:

kafka-console-producer.sh --broker-list <broker> --topic sensor_data
>invalid data

We won’t see any new messages in SSB because the invalid input cannot be deserialized. Let’s check the DLQ topic we set up earlier to see if the invalid message was captured:

kafka-console-consumer.sh --bootstrap-server <server> --topic sensor_data_dlq --from-beginning
invalid data

The invalid input is there, which verifies that the DLQ functionality is working correctly and allows us to further investigate any deserialization error.
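Once messages have landed in the DLQ topic, a quick way to investigate them is to re-run the parse offline and record why each one fails. The following Python sketch does that for payloads already read from the topic (for example, copied from the console consumer output). The function name `explain_dlq_messages` is hypothetical, and the sketch assumes the payloads are meant to be JSON text; it only checks JSON syntax, not conformance to the “name”/“temp” schema.

```python
import json


def explain_dlq_messages(dlq_messages):
    """Return (raw_message, reason) pairs for payloads read from the DLQ.

    Only JSON syntax is checked here; a payload that parses cleanly
    must have been rejected for some other reason.
    """
    report = []
    for raw in dlq_messages:
        try:
            json.loads(raw)
        except json.JSONDecodeError as err:
            reason = f"{err.msg} at line {err.lineno}, column {err.colno}"
        else:
            reason = "parses as JSON; the cause lies elsewhere (for example, a schema mismatch)"
        report.append((raw, reason))
    return report


if __name__ == "__main__":
    # The invalid payload sent to "sensor_data" earlier in this walkthrough.
    for raw, reason in explain_dlq_messages(["invalid data"]):
        print(f"{raw!r}: {reason}")
```

Grouping the reasons by message prefix or by producer can then point to which upstream system is emitting the bad records.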

Conclusion

In this blog, we covered the capabilities of the DLQ feature in Flink and SSB. This feature is very useful for gracefully handling a failure in a data pipeline caused by invalid data. Using this capability, it is easy and quick to find out whether there are any bad records in the pipeline and what the root cause of those bad records is.

Anybody can try out SSB using the Stream Processing Community Edition (CSP-CE). CE makes developing stream processors easy, as it can be done right from your desktop or any other development node. Analysts, data scientists, and developers can now evaluate new features, develop SQL-based stream processors locally using SQL Stream Builder powered by Flink, and develop Kafka Consumers/Producers and Kafka Connect Connectors, all locally before moving to production in CDP.