“In today’s rapidly evolving digital landscape, we see a growing number of services and environments (in which those services run) our customers utilize on Azure. Ensuring the performance and security of Azure means our teams are vigilant about regular maintenance and updates to keep pace with customer needs. Stability, reliability, and rolling timely updates remain our top priority when testing and deploying changes. In minimizing impact to customers and services, we must account for the multifaceted software, hardware, and platform landscape. This is an example of an optimization problem, an industry concept that revolves around finding the best way to allocate resources, manage workloads, and ensure performance while keeping costs low and adhering to various constraints. Given the complexity and ever-changing nature of cloud environments, this task is both critical and challenging.

I’ve asked Rohit Pandey, Principal Data Scientist Manager, and Akshay Sathiya, Data Scientist, from the Azure Core Insights Data Science Team to discuss approaches to optimization problems in cloud computing and share a resource we’ve developed for customers to use to solve these problems in their own environments.” - Mark Russinovich, CTO, Azure

Optimization problems in cloud computing

Optimization problems exist across the technology industry. Today’s software products are engineered to function across a wide array of environments such as websites, applications, and operating systems. Similarly, Azure must perform well on a diverse set of servers and server configurations that span hardware models, virtual machine (VM) types, and operating systems across a production fleet. Under the constraints of time, computational resources, and increasing complexity as we add more services, hardware, and VMs, it may not be possible to reach an optimal solution. For problems such as these, an optimization algorithm is used to identify a near-optimal solution that uses a reasonable amount of time and resources. Using an optimization problem we encounter in setting up the environment for a software and hardware testing platform, we’ll discuss the complexity of such problems and introduce a library we created to solve these kinds of problems that can be applied across domains.

Environment design and combinatorial testing

If you were to design an experiment for evaluating a new medication, you would test on a diverse demographic of users to assess potential negative effects that may affect a select group of people. In cloud computing, we similarly need to design an experimentation platform that, ideally, would be representative of all the properties of Azure and would sufficiently test every possible configuration in production. In practice, that would make the test matrix too large, so we have to target the important and risky configurations. Additionally, just as you might avoid taking two medications that can negatively affect one another, properties within the cloud also have constraints that need to be respected for successful use in production. For example, hardware one might only work with VM types one and two, but not three and four. Lastly, customers may have additional constraints that we must consider in our environment.
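To make the effect of such a compatibility constraint on the test matrix concrete, here is a minimal sketch. The property names are hypothetical, and only the rule for hardware one comes from the text; treating hardware two as compatible with every VM type is an assumption made purely for illustration.

```python
from itertools import product

# Hypothetical property values used only for illustration.
hardware = ["hw1", "hw2"]
vm_types = ["vm1", "vm2", "vm3", "vm4"]

# hw1 works with vm1 and vm2 only (from the text).
# hw2 working with every VM type is an assumption.
compatible = {
    "hw1": {"vm1", "vm2"},
    "hw2": {"vm1", "vm2", "vm3", "vm4"},
}

# The full test matrix is the Cartesian product of all property values.
full_matrix = list(product(hardware, vm_types))

# Only combinations that respect the compatibility constraint can be tested.
valid_matrix = [(hw, vm) for hw, vm in full_matrix if vm in compatible[hw]]

print(len(full_matrix))   # 8
print(len(valid_matrix))  # 6
```

With only two property types the matrix is tiny, but every additional property type multiplies its size, which is why exhaustive testing quickly becomes impractical.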

With all of the attainable mixtures, we should design an surroundings that may take a look at the necessary mixtures and that takes into consideration the assorted constraints. AzQualify is our platform for testing Azure inside applications the place we leverage managed experimentation to vet any modifications earlier than they roll out. In AzQualify, applications are A/B examined on a variety of configurations and mixtures of configurations to determine and mitigate potential points earlier than manufacturing deployment.

While it would be ideal to test the new medication and collect data on every possible user and every possible interaction with every medication in every scenario, there is not enough time or resources to do that. We face the same constrained optimization problem in cloud computing. This problem is NP-hard.

NP-hard problems

An NP-hard, or Nondeterministic Polynomial Time hard, problem is hard to solve and can be hard to even verify (if someone gave you the best solution). Using the example of a new medication that might cure multiple diseases, testing this medication involves a series of highly complex and interconnected trials across different patient groups, environments, and conditions. Each trial’s outcome might depend on others, making it not only hard to conduct but also very challenging to verify all the interconnected results. We are not able to know if this medication is the best, nor confirm that it is the best. In computer science, it has not yet been proven (and is considered unlikely) that the best solutions for NP-hard problems are efficiently obtainable.

Another NP-hard problem we consider in AzQualify is the allocation of VMs across hardware to balance load. This involves assigning customer VMs to physical machines in a way that maximizes resource utilization, minimizes response time, and avoids overloading any single physical machine. To visualize the best possible approach, we use a property graph to represent and solve problems involving interconnected data.
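As a rough illustration of trading optimality for speed on this kind of load-balancing problem, the sketch below uses a simple greedy heuristic: place the largest VM first, always on the currently least-loaded machine. This is a textbook heuristic, not the method used in AzQualify. The VM names and load values are hypothetical, and the sketch only balances total load; it does not model response time.

```python
import heapq

def greedy_balance(vm_loads, num_machines):
    """Assign each VM (largest first) to the currently least-loaded machine."""
    # Heap entries are (current_load, machine_index).
    heap = [(0, m) for m in range(num_machines)]
    heapq.heapify(heap)
    assignment = {m: [] for m in range(num_machines)}
    for vm, load in sorted(vm_loads.items(), key=lambda kv: -kv[1]):
        current, m = heapq.heappop(heap)
        assignment[m].append(vm)
        heapq.heappush(heap, (current + load, m))
    return assignment

# Hypothetical VM loads in arbitrary units.
vm_loads = {"vm_a": 8, "vm_b": 7, "vm_c": 6, "vm_d": 5, "vm_e": 4}
assignment = greedy_balance(vm_loads, 2)
machine_loads = {m: sum(vm_loads[v] for v in vms) for m, vms in assignment.items()}
print(machine_loads)  # {0: 17, 1: 13}
```

For this input the heuristic ends with loads of 17 and 13, while a perfectly balanced split of 15 and 15 exists (8 + 7 and 6 + 5 + 4). The greedy answer is found quickly but is only near-optimal, which is the trade-off described above.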

Property graph

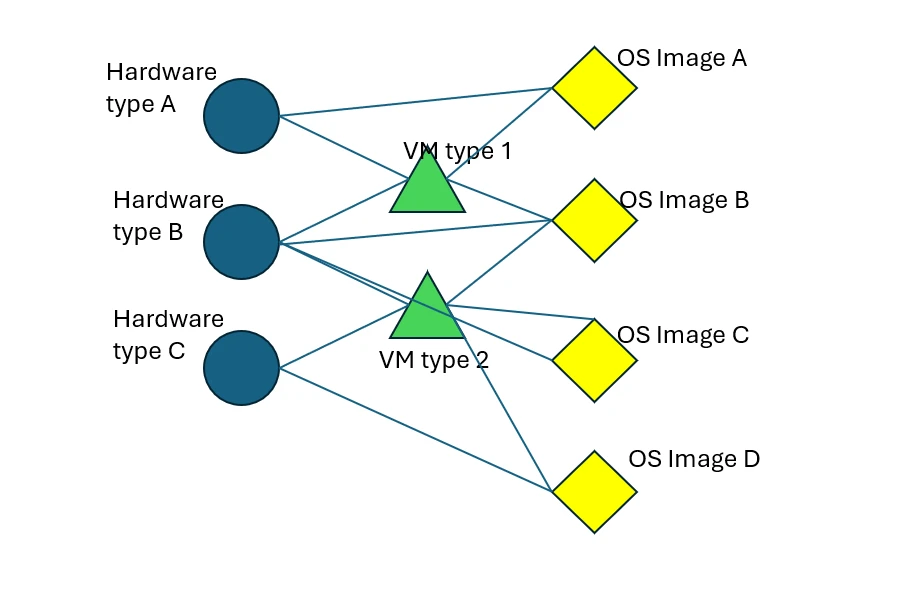

A property graph is a data structure commonly used in graph databases to model complex relationships between entities. In this case, we can illustrate different types of properties, with each type using its own vertices, and edges to represent compatibility relationships. Each property is a vertex in the graph, and two properties can have an edge between them if they are compatible with each other. This model is especially helpful for visualizing constraints. Additionally, expressing constraints in this form allows us to leverage existing concepts and algorithms when solving new optimization problems.

Figure 1 is an example property graph consisting of three types of properties (hardware model, VM type, and operating system). Vertices represent specific properties such as hardware models (A, B, and C, represented by blue circles), VM types (D and E, represented by green triangles), and OS images (F, G, H, and I, represented by yellow diamonds). Edges (black lines between vertices) represent compatibility relationships. Vertices connected by an edge represent properties compatible with each other, such as hardware model C, VM type E, and OS image I.

Figure 1: An example property graph showing compatibility between hardware models (blue), VM types (green), and operating systems (yellow)

In Azure, nodes are physically located in datacenters across multiple regions. Azure customers use VMs, which run on nodes. A single node may host multiple VMs at the same time, with each VM allocated a portion of the node’s computational resources (such as memory or storage) and running independently of the other VMs on the node. For a node to have a hardware model, a VM type to run, and an operating system image on that VM, all three need to be compatible with each other. On the graph, all of these would be connected. Hence, valid node configurations are represented by cliques (each having one hardware model, one VM type, and one OS image) in the graph.
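The idea that valid node configurations are cliques can be sketched in a few lines. The edge list below is hypothetical: the text only confirms that hardware model C, VM type E, and OS image I are mutually compatible, so every other edge is an assumption chosen for illustration.

```python
from itertools import product

hardware = ["A", "B", "C"]
vm_types = ["D", "E"]
os_images = ["F", "G", "H", "I"]

# Hypothetical compatibility edges; only the C-E-I triangle is stated in the text.
edges = {
    ("A", "D"), ("A", "F"), ("D", "F"),
    ("A", "G"), ("D", "G"),
    ("B", "D"), ("B", "E"), ("B", "H"), ("D", "H"), ("E", "H"),
    ("C", "E"), ("C", "I"), ("E", "I"),
}

def compatible(u, v):
    """Edges are undirected, so check both orientations."""
    return (u, v) in edges or (v, u) in edges

def valid_configurations():
    """Return every (hardware, VM type, OS image) triple that forms a clique."""
    return [
        (hw, vm, os_img)
        for hw, vm, os_img in product(hardware, vm_types, os_images)
        if compatible(hw, vm) and compatible(hw, os_img) and compatible(vm, os_img)
    ]

configs = valid_configurations()
print(configs)
```

Of the 24 possible triples, only those whose three vertices are pairwise connected survive, so the graph encodes the compatibility constraints directly.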

An example of the environment design problem we solve in AzQualify is needing to cover all the hardware models, VM types, and operating system images in Figure 1. Let’s say we need hardware model A to be 40% of the machines in our experiment, VM type D to be 50% of the VMs running on the machines, and OS image F to be on 10% of all the VMs. Lastly, we must use exactly 20 machines. Working out how to allocate the hardware, VM types, and operating system images among these machines so that the compatibility constraints in Figure 1 are satisfied, while getting as close as possible to the other requirements, is an example of a problem for which no efficient algorithm is known.
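A brute-force sketch of this allocation problem follows. It is not the optimizn API or the AzQualify approach; it simply enumerates every allocation to show what is being optimized. The list of valid configurations is hypothetical (only the C, E, I combination is confirmed by the text), and the sketch assumes each machine runs exactly one configuration, so machine shares and VM shares coincide.

```python
NUM_MACHINES = 20

# Hypothetical valid (hardware, VM type, OS image) configurations.
configs = [
    ("A", "D", "F"),
    ("A", "D", "G"),
    ("B", "D", "H"),
    ("B", "E", "H"),
    ("C", "E", "I"),
]
all_properties = {prop for cfg in configs for prop in cfg}

# Target shares from the text, in percent of machines.
target_pct = {"A": 40, "D": 50, "F": 10}

def compositions(total, parts):
    """Yield every way to split `total` machines across `parts` configurations."""
    if parts == 1:
        yield (total,)
        return
    for first in range(total + 1):
        for rest in compositions(total - first, parts - 1):
            yield (first,) + rest

def covers_everything(counts):
    """Every hardware model, VM type, and OS image must be used at least once."""
    used = {prop for cfg, n in zip(configs, counts) if n > 0 for prop in cfg}
    return used == all_properties

def deviation(counts):
    """Total distance, in machines, from the target shares."""
    score = 0
    for prop, pct in target_pct.items():
        have = sum(n for cfg, n in zip(configs, counts) if prop in cfg)
        score += abs(have - pct * NUM_MACHINES / 100)
    return score

candidates = [c for c in compositions(NUM_MACHINES, len(configs)) if covers_everything(c)]
best = min(candidates, key=deviation)
print(dict(zip(configs, best)), deviation(best))
```

With five configurations and 20 machines there are already 10,626 ways to split the machines before the coverage filter is applied, and that number grows rapidly as configurations or machines are added. Exhaustive search like this stops being practical very quickly, which is why heuristic optimization algorithms are used instead.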

Library of optimization algorithms

We have developed some general-purpose code from learnings extracted from solving NP-hard problems, which we packaged in the optimizn library. Even though Python and R libraries exist for the algorithms we implemented, they have limitations that make them impractical to use on these kinds of complex combinatorial, NP-hard problems. In Azure, we use this library to solve various and dynamic types of environment design problems and implement routines that can be used on any type of combinatorial optimization problem, with attention to extensibility across domains. Our environment design system, which uses this library, has helped us cover a wider variety of properties in testing, leading to us catching five to ten regressions per month. By identifying regressions, we can improve Azure’s internal programs while changes are still in pre-production and minimize potential platform stability and customer impact once changes are broadly deployed.

Learn more about the optimizn library

Understanding how to approach optimization problems is pivotal for organizations aiming to maximize efficiency, reduce costs, and improve performance and reliability. Visit our optimizn library to solve NP-hard problems in your compute environment. For those new to optimization or NP-hard problems, visit the README.md file of the library to see how you can interface with the various algorithms. As we continue learning from the dynamic nature of cloud computing, we make regular updates to general algorithms as well as publish new algorithms designed specifically to work on certain classes of NP-hard problems.

By addressing these challenges, organizations can achieve better resource utilization, enhance user experience, and maintain a competitive edge in the rapidly evolving digital landscape. Investing in cloud optimization is not just about cutting costs; it’s about building a robust infrastructure that supports long-term business goals.